Linear Dependence and Linear Independence

Breaking down linear combinations, span, and what it actually means for vectors to be linearly independent — all in plain terms.

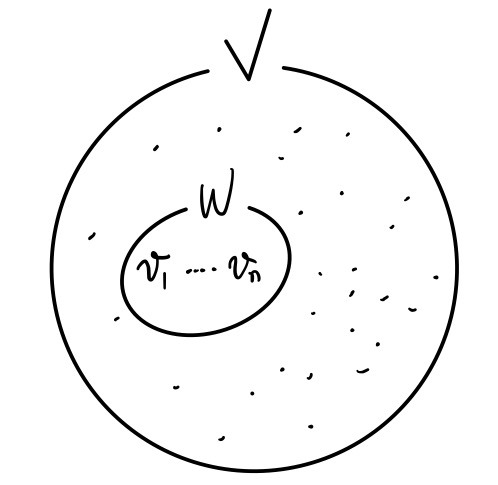

So picture this. We’ve got some vector space $V$, and floating around inside it are a bunch of elements. And then there’s this subset $W$.

Is $W$ a vector space?!?!?!

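OK so for $W$ to actually count as a vector space (a subspace of $V$), two things have to hold, on top of $W$ not being empty.

Whenever $w_1$ and $w_2$ live in $W$, their sum $w_1 + w_2$ has gotta be in $W$ too.

And also, if some vector $w$ is in $W$, then multiplying by any old constant $k$ gives $kw$, and that also has to live in $W$.

Only then do we get to call $W$ a vector space.

So basically, rolled into one line: $a w_1 + b w_2 \in W$ for all $w_1, w_2 \in W$ and all constants $a, b$.

Only when this holds, $W$ earns the title.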
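Here's a quick numerical spot check of that closure condition, just as a sketch. The set being tested (a line $y = 2x + \text{offset}$ in $\mathbb{R}^2$) and the helper names `on_line` and `looks_closed` are made up for illustration, and random sampling like this can only catch failures, it never proves closure.

```python
import numpy as np

rng = np.random.default_rng(0)

def on_line(w, offset):
    """Membership test for the hypothetical set {(x, y) : y = 2x + offset}."""
    return bool(np.isclose(w[1], 2 * w[0] + offset))

def looks_closed(offset, trials=1000):
    """Spot-check that a*w1 + b*w2 stays in the set for random members and scalars."""
    for _ in range(trials):
        s, t, a, b = rng.uniform(-5, 5, size=4)
        w1 = np.array([s, 2 * s + offset])  # a member of the set
        w2 = np.array([t, 2 * t + offset])  # another member
        if not on_line(a * w1 + b * w2, offset):
            return False  # found a combination that escapes the set
    return True

print(looks_closed(offset=0.0))  # line through the origin
print(looks_closed(offset=1.0))  # shifted line, which misses the zero vector
```

The line through the origin survives every sampled combination, while the shifted line gets kicked out almost immediately, since $a w_1 + b w_2$ only lands back on it when $a + b = 1$.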

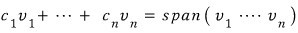

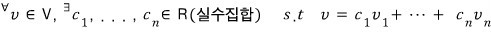

Now here’s a concept!!

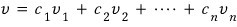

This thing — apparently it’s called a linear combination.

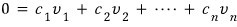

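Written out as math, a linear combination of $v_1, v_2, \dots, v_n$ is

$$a_1 v_1 + a_2 v_2 + \cdots + a_n v_n$$

with constants $a_1, \dots, a_n$. That's how it goes.

Anyway, when $W$ is the set of all such linear combinations (the span of $v_1, \dots, v_n$), $W$ sits inside $V$, AND $W$ also gets to be called a vector space!!!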
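To make "linear combination" feel less abstract, here's a tiny sketch in NumPy. The vectors and coefficients are arbitrary picks of mine, not anything from the figures.

```python
import numpy as np

# Hypothetical vectors in R^3 and hypothetical coefficients.
v1 = np.array([1.0, 0.0, 2.0])
v2 = np.array([0.0, 1.0, -1.0])
a1, a2 = 3.0, -2.0

# The linear combination a1*v1 + a2*v2, written out directly.
combo = a1 * v1 + a2 * v2

# Same thing as a matrix-vector product: columns are the vectors,
# the right-hand vector holds the coefficients.
V = np.column_stack([v1, v2])
same = V @ np.array([a1, a2])

print(combo, same)
```

The matrix form is worth remembering: every element of the span of `v1` and `v2` is `V @ coeffs` for some choice of `coeffs`.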

Also — the span can get big. Like, big enough that it just becomes $V$ itself.

If every single element inside $V$ can be written as a linear combination of $v_1, v_2, \dots, v_n$, then the span of those vectors is all of $V$!!!

This idea is gonna come in handy real soon when we hit basis vectors.

I’m sure y’all caught that. Moving on.

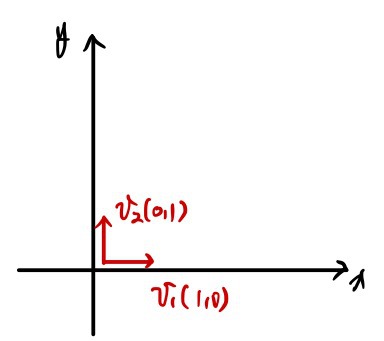

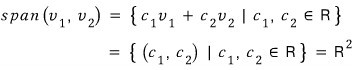

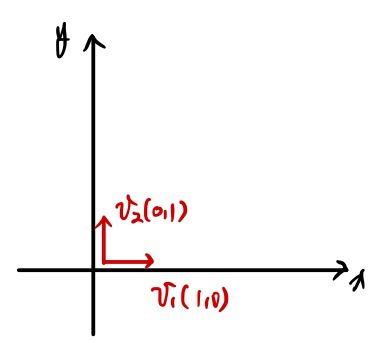

Let's get concrete for a sec.

Take, for instance, $(1, 0)$, $(0, 1)$ $\in \mathbb{R}^2$.

The space spanned by these, $(1, 0)$ and $(0, 1)$, is… it just turns into the whole $\mathbb{R}^2$ space!!!!!!
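If you want to check "does this bunch of vectors span the whole space?" on a computer, one common trick is to stack the vectors as columns and compare the matrix rank with the dimension of the space. A minimal sketch, assuming we're working in $\mathbb{R}^n$ with floating-point vectors; `spans_whole_space` is a name I made up.

```python
import numpy as np

def spans_whole_space(vectors):
    """True if the given vectors (all of length n) span all of R^n."""
    A = np.column_stack(vectors)  # shape (n, number_of_vectors)
    # The span is all of R^n exactly when the rank equals n.
    return bool(np.linalg.matrix_rank(A) == A.shape[0])

print(spans_whole_space([np.array([1.0, 0.0]), np.array([0.0, 1.0])]))
print(spans_whole_space([np.array([1.0, 2.0]), np.array([2.0, 4.0])]))
```

The second pair lies on a single line through the origin, so its span is just that line and not the whole plane. Keep in mind `matrix_rank` uses a numerical tolerance, so nearly parallel vectors can be a judgment call.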

OK cool, I get linear combinations.

So what’s linear independence?!!

Here's the thing: sometimes there's exactly one way to write an element of a vector space as a linear combination, and sometimes there are a bunch of ways.

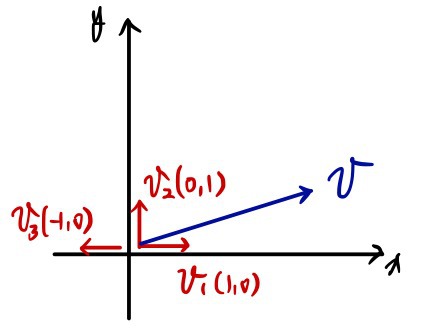

For example,

if I try to write the vector $v$ using $v_1, v_2, v_3$…… there’s gonna be a ton of ways.

Honestly just eyeballing it, there are an absurd number of ways to mash those three vectors into $v$.

But with these vectors? There was exactly!!! one way to write $v$.
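Here's the "ton of ways" versus "exactly one way" contrast as a small sketch. The specific vectors are my own picks in $\mathbb{R}^2$ (the ones in the figure may well be different), so treat the numbers as illustration only.

```python
import numpy as np

# Hypothetical vectors in R^2 (the ones in the figure may differ).
v1 = np.array([1.0, 0.0])
v2 = np.array([0.0, 1.0])
v3 = np.array([1.0, 1.0])
v = np.array([2.0, 3.0])

# Three different coefficient tuples (a, b, c) with a*v1 + b*v2 + c*v3 == v.
tuples = [(2, 3, 0), (0, 1, 2), (1, 2, 1)]
all_hit_v = all(np.allclose(a * v1 + b * v2 + c * v3, v) for a, b, c in tuples)

# With only v1 and v2, the coefficients are forced: solve [v1 v2] @ x = v.
unique = np.linalg.solve(np.column_stack([v1, v2]), v)

print(all_hit_v, unique)
```

With all three vectors, any tuple of the form $(2 - t,\ 3 - t,\ t)$ works, so there are infinitely many. Drop `v3` and the system has a single solution.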

OK !!!!

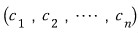

In a case like this, we call this set of vectors "linearly independent."

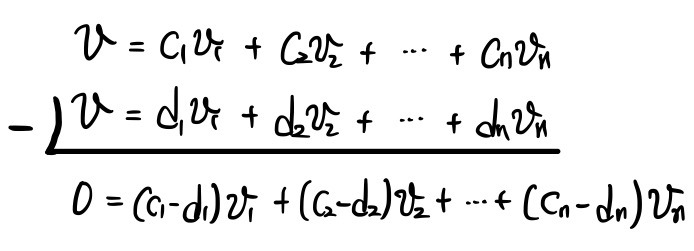

Let’s say it more generally.

Linearly independent means: when you try to express some element $v$ of the vector space using those linearly independent vectors $v_1, \dots, v_n$,

if you can pull off the linear combination $v = a_1 v_1 + a_2 v_2 + \cdots + a_n v_n$,

then the coefficients $(a_1, a_2, \dots, a_n)$, that ordered tuple representing it, come out only one way. There's only ONE such tuple!!!!!

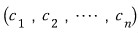

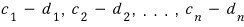

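And the fact that the ordered tuple $(a_1, a_2, \dots, a_n)$ is unique means something for the zero vector in particular: $0 v_1 + 0 v_2 + \cdots + 0 v_n = 0$ is already one way to write it, so it's the only way.

That is, if $a_1 v_1 + a_2 v_2 + \cdots + a_n v_n = 0$, then every single one of the $a_i$ has to be 0!!!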

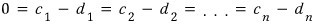

So — to be linearly independent, this condition’s gotta hold!!!!
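That condition (the only combination giving the zero vector is the all-zeros one) can be tested numerically by checking that the matrix with the vectors as columns has rank equal to the number of vectors. This is a sketch under the assumption that the vectors live in $\mathbb{R}^n$; `is_linearly_independent` is a hypothetical helper name.

```python
import numpy as np

def is_linearly_independent(vectors):
    """True if a1*v1 + ... + an*vn = 0 forces every coefficient to be 0."""
    A = np.column_stack(vectors)  # one column per vector
    # Full column rank means A @ a = 0 has only the solution a = 0.
    return bool(np.linalg.matrix_rank(A) == A.shape[1])

e1 = np.array([1.0, 0.0])
e2 = np.array([0.0, 1.0])
d = np.array([1.0, 1.0])

print(is_linearly_independent([e1, e2]))     # two vectors in R^2
print(is_linearly_independent([e1, e2, d]))  # three vectors in R^2
```

Three vectors in $\mathbb{R}^2$ can never pass, because the rank can't exceed 2. As with any floating-point rank computation, the answer depends on a tolerance.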

Now let’s push just a tiny bit further into what linear independence actually means!!!!!

What I just said is: if $a_1 v_1 + a_2 v_2 + \cdots + a_n v_n = 0$ holds, the tuple $(a_1, a_2, \dots, a_n)$ satisfying it is all zeros.

Huh?!??!?! Does it really have to be all 0!??!?!

OK to see why, let’s flip it.

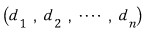

Suppose the tuple is not all 0. Suppose some other tuple exists.

Some tuple of coefficients is $(c_1, c_2, \dots, c_n)$, let's call it that.

So it's a tuple of coefficients that makes the linear combination zero, $c_1 v_1 + c_2 v_2 + \cdots + c_n v_n = 0$, but they're not all 0.

Let's say one of those nonzero coefficients is $c_i$, the one sitting on $v_{(i)}$

(and yeah, there might be other nonzero ones in there too).

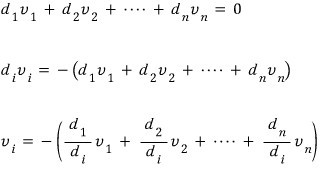

This is saying that $v_{(i)}$ can be written as a linear combination of the other vectors.

This — this is what we mean when we say $v_{(i)}$ is dependent on the others.

Yep. We call it “linearly dependent.”
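In case the jump to "$v_{(i)}$ can be written with the others" felt fast, here's the step spelled out. The coefficient names $c_1, \dots, c_n$ are just my labels for the not-all-zero tuple. Since $c_i \neq 0$, we're allowed to divide by it:

$$
c_1 v_1 + \cdots + c_n v_n = 0,\quad c_i \neq 0
\;\;\Longrightarrow\;\;
c_i\, v_{(i)} = -\sum_{j \neq i} c_j v_j
\;\;\Longrightarrow\;\;
v_{(i)} = -\frac{1}{c_i} \sum_{j \neq i} c_j v_j .
$$

A tiny made-up instance: with $v_1 = (1, 0)$, $v_2 = (0, 1)$, $v_3 = (1, 1)$ we have $v_1 + v_2 - v_3 = 0$, and solving for $v_3$ gives $v_3 = v_1 + v_2$.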

Linear dependence is the flip side of linear independence!!!

In other words, when all the vectors are linearly independent,

NO vector can be written as a linear combination of the others!!!!!!!!

You’re gonna run into this concept again and again and again,

so really chew on it before moving on!!!

I was gonna just barrel right through into basis too, but —

I’ll save basis for the next post.

Reason being, basis was something I was so so so so curious about back in the day,

because I have this memory of being SO hyped about whatever was coming after this???

This one’s a little short,,,,heh heh heh

We’ll roll with it heh heh heh

Originally written in Korean on my Naver blog (2016-01). Translated to English for gdpark.blog.
