Matrices and Matrix Multiplication

We dive into linear algebra by unpacking functions as set mappings, then zoom in on linear mappings and how they all secretly boil down to matrices.

Now we’re properly diving into linear algebra.

First up — let’s take a look at “functions (mappings).”

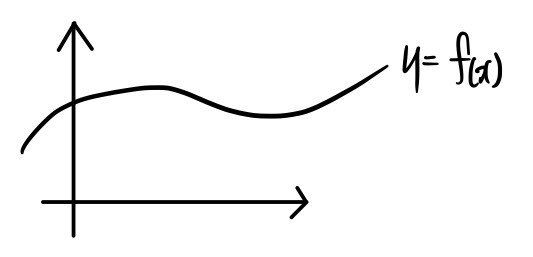

When we say “function,” those of us who made it through middle and high school picture something like this

or this, right~~

Yep. Not wrong.

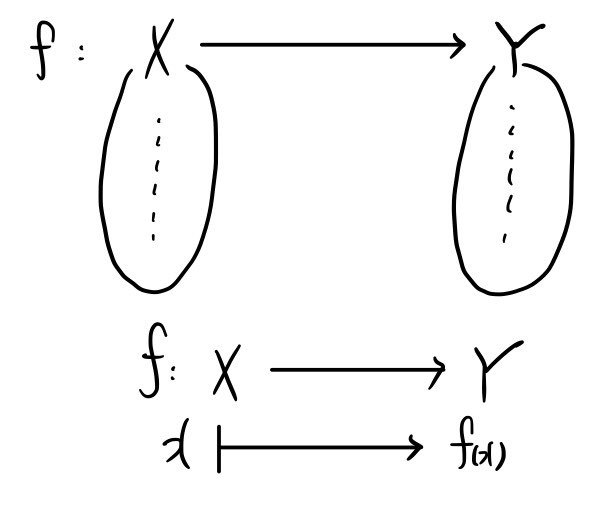

But instead of the coordinate-plane interpretation on the left, thinking of a “function” as something that sends one set to another set — like the picture on the right — is, apparently, juuust a tiny bit more fundamental.

So from here on out, almost everything we say about functions is going to be interpreted set-style.

Oh, by the way — I wrote “mapping” in parentheses next to “function” up there. What’s that about?

Mapping is basically the idea of “copying a shape” from one place to another. Let me try mapping my ID photo.

MA!!!!!!!!!!!!!!!!!!!!!!!PPING!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!

Ta-daaaa~~~~

Does it resonate?? T_T T_T T_T T_T sob sob

This is how I think about it. Subjective, sure, but —

some vector

gets passed through

function f,

and becomes this……

is “mapping” really the right word for that…?? hmm

I dunno lol

Anyway — “function” and “mapping” get used pretty much interchangeably, and you can basically think of “mapping” as roughly the same thing as “function.”

Linear function and linear mapping

Now, among the absurdly many functions/mappings out there, we’re going to zoom in on the “linear” ones.

Because this is linear algebra!!!!

Notation usually looks like this:

$$L : V \to W$$

That alien-language thing means: a linear function L (or linear mapping L) that sends some vector space V to another vector space W.

OK so now let’s look at the conditions for L to actually count as a linear function (mapping).

I talked about this waaaay back in the very first post.

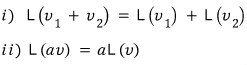

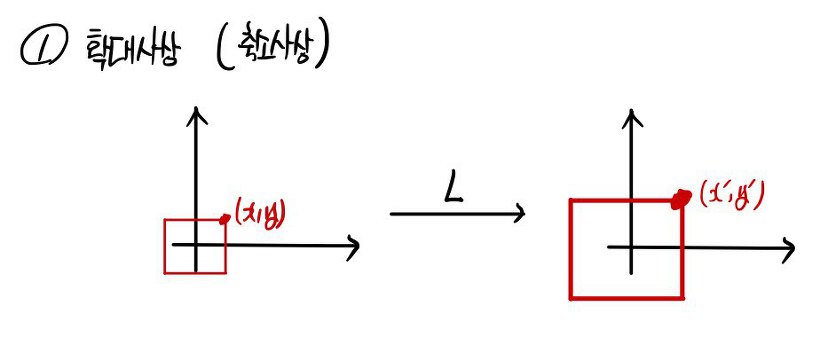

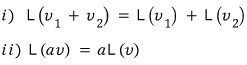

Conditions for being a linear mapping: for all vectors u, v in V and every scalar c,

$$L(u + v) = L(u) + L(v), \qquad L(cu) = c\,L(u)$$

Easy, right.

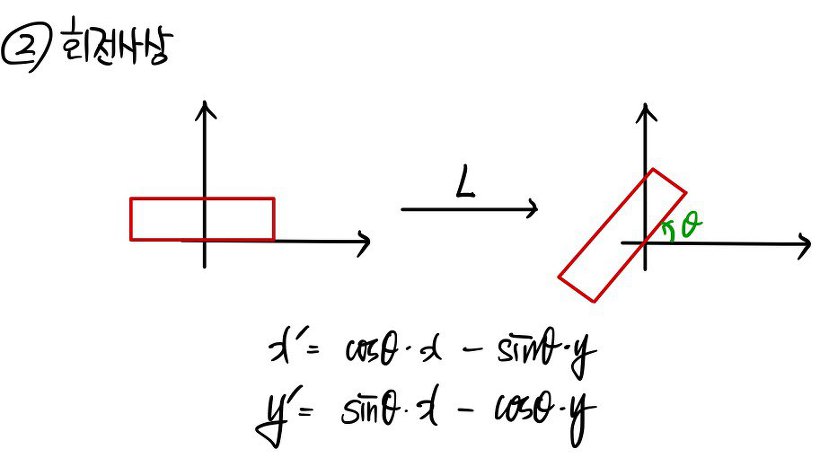
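Here is a minimal sketch of what checking those two conditions could look like in code. The functions `scale` and `shift` are hypothetical examples of mine, not from the original post, and passing the check on one sample is only evidence of linearity, not a proof.

```python
def scale(v, k=2):
    """Enlarge a 2D vector by factor k (a linear map)."""
    x, y = v
    return (k * x, k * y)

def shift(v):
    """Translate by (1, 0); not linear, since it moves the origin."""
    x, y = v
    return (x + 1, y)

def add(u, v):
    """Vector addition in 2D."""
    return (u[0] + v[0], u[1] + v[1])

def times(c, v):
    """Scalar multiplication in 2D."""
    return (c * v[0], c * v[1])

def looks_linear(f, u, v, c):
    """Check additivity and homogeneity on one sample (evidence, not proof)."""
    additive = f(add(u, v)) == add(f(u), f(v))
    homogeneous = f(times(c, u)) == times(c, f(u))
    return additive and homogeneous

u, v, c = (1, 2), (3, -1), 5
print(looks_linear(scale, u, v, c))  # True
print(looks_linear(shift, u, v, c))  # False
```

The translation fails because it sends the origin somewhere else, and a linear mapping always sends the zero vector to the zero vector.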

Now let me throw a few examples of linear mappings at you.

(Let’s pin it down to 2-dimensional space, and also pin down the basis β.)

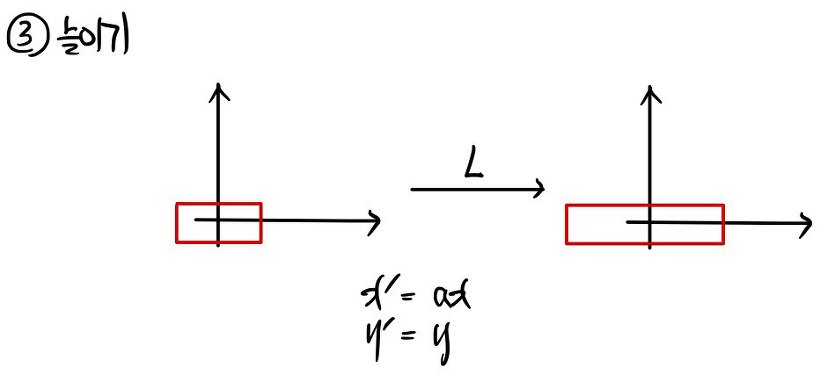

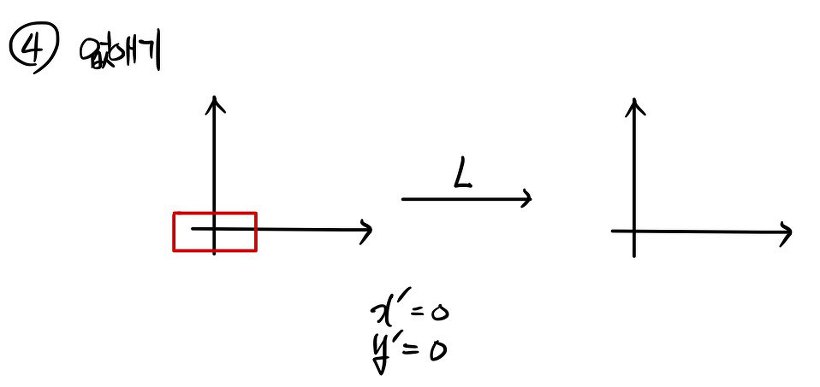

What this says is: passing the point (x, y) through the linear mapping gives you the point (x’, y’).

The collection of all those points draws out a line.

But there’s an enlargement happening — what’s the relationship between x, y and x’, y’?!?!

I’m guessing

If we kept listing types like this, we’d literally never finish.

So we’ll cut it off there. Even from just those few, we can spot something all linear mappings have in common.

(Side note) When we usually represent a vector on a coordinate plane,

we write it like this a lot,

$$(x, y)$$

but you can also write it as

$$\begin{pmatrix} x \\ y \end{pmatrix}$$

We’re more used to the first one.

But is there a difference between the two?!?

For now — nope. None.

Whether you write a point on the coordinate plane one way or the other, no difference.

OK, so we now know what a linear mapping is.

Then when we run into some specific linear mapping, we might be curious — what kind of linear mapping is it, out of all the linear mappings out there?

So when we run into one, what info do we need to extract before we can say “yep, I’ve conquered this linear mapping”???

Same vibe as: when we run into a straight line (the graph of a first-degree equation), knowing one point it passes through plus the slope is enough to say conquered!!!!

Turns out there’s a similar “got it, conquered!!” condition for linear mappings too.

In general, fully knowing a function means knowing what’s in the “domain” and the “codomain,” and knowing what’s in the “range” among those — and then we get to say we know the function inside out, right?!?!?!?

To be able to say we’ve conquered a linear mapping in its enti~~~rety,

it’s enough to just know where the “basis” archers shoot their arrows. From that we can figure out where all the other archers shoot theirs.

From here on, for the sake of the discussion,

let’s call the domain “the archers” and the codomain “the targets.”

And let’s call the range “the targets the arrows actually hit”!!!!

(That’s our setup.)

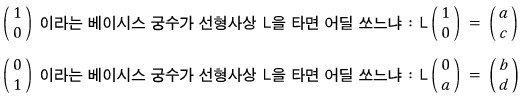

Out of the insanely many archers, we just need to know where these two shoot.

Why!!!!

Because of the two principles of linear mapping: $L(u + v) = L(u) + L(v)$ and $L(cu) = c\,L(u)$.

Let me lay out the reasoning.

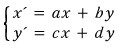

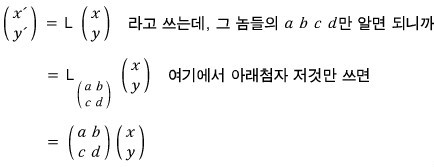

So if we can pin down a, b, c, d here, we can call this linear mapping L analyzed! We know everything about it!!

That is, $L(x, y) = (ax + by,\ cx + dy)$.

Almost there. The true identity of this thing we thought we knew called a “matrix” is about to be revealed!!!!
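To make the archer argument concrete, here is a small sketch. I'm assuming the standard basis e1 = (1, 0), e2 = (0, 1), and that the two basis archers hit L(e1) = (a, c) and L(e2) = (b, d); the helper name `make_linear_map` and the sample numbers are made up for illustration.

```python
def make_linear_map(image_of_e1, image_of_e2):
    """Build L on R^2 from where it sends the two standard basis vectors."""
    def L(v):
        x, y = v
        # v = x*e1 + y*e2, so linearity forces L(v) = x*L(e1) + y*L(e2)
        return (x * image_of_e1[0] + y * image_of_e2[0],
                x * image_of_e1[1] + y * image_of_e2[1])
    return L

# Hypothetical targets: e1 -> (a, c) = (2, 1), e2 -> (b, d) = (0, 3)
L = make_linear_map((2, 1), (0, 3))
print(L((1, 0)))  # (2, 1)
print(L((0, 1)))  # (0, 3)
print(L((4, 5)))  # (8, 19)
```

Only two targets went in, yet every other archer's shot is now determined.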

Definition of a matrix

2 x 2 matrix

$$\begin{pmatrix} a & b \\ c & d \end{pmatrix}$$

The meaning of a matrix like this is: it’s a certain function, written using only the coefficients of its rule. The rule for transforming the vector (x, y) into the vector (x’, y’) under that mapping is

x’ = ax + by

y’ = cx + dy

and the matrix simply collects a, b, c, d in those same positions.

There’s another notation for matrices too.

The thing you learned back in 11th grade!

$$a_{ij}$$

That’s how you write it, and it represents the elements of the matrix.

The position of each spot in the matrix is indicated like this: i is the “row” and j is the “column.”

(For anyone who gets confused~~ we usually say “horizontal then vertical,” not “vertical then horizontal,” right? In a matrix, “row” is horizontal and “column” is vertical!!!)
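A quick sketch of the row-then-column convention, assuming a matrix is stored as a Python list of rows. The helper `entry` is hypothetical; it just converts the 1-based math indices to Python's 0-based ones.

```python
A = [[1, 2, 3],
     [4, 5, 6]]   # 2 rows, 3 columns

def entry(A, i, j):
    """Return a_ij using the 1-based math convention: i is the row, j is the column."""
    return A[i - 1][j - 1]

print(entry(A, 1, 3))  # 3  (row 1, column 3)
print(entry(A, 2, 1))  # 4  (row 2, column 1)
```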

Also, the matrix A can be written as

$$A = (a_{ij})$$

or alternatively as

$$A = [a_{ij}]$$

<Notation isn’t that important — it’s the kind of thing you just gradually get used to.>

OK!!! Let’s keep going.

The 2 x 2 matrix

came from functions that go from 2-dimensional space to 2-dimensional space, like this!!

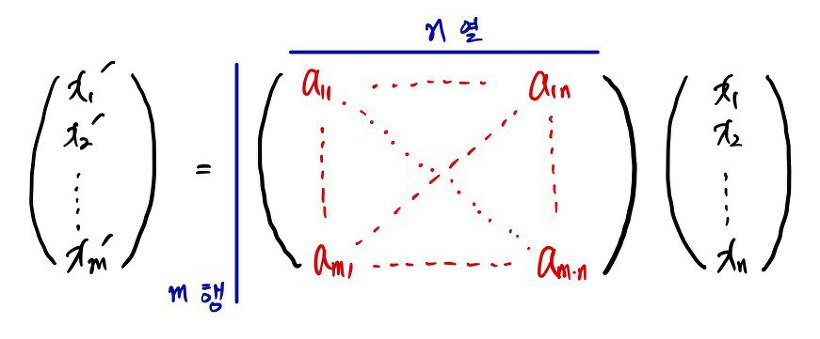

Extrapolating —

what does the matrix form of a linear function from n-dimensional space to m-dimensional space look like???

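Probably

$$A = \begin{pmatrix} a_{11} & \cdots & a_{1n} \\ \vdots & \ddots & \vdots \\ a_{m1} & \cdots & a_{mn} \end{pmatrix}$$

a shape like this (m rows, n columns), and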

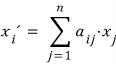

the equation that used to be x’ = ax + by becomes

$$x'_i = \sum_{j=1}^{n} a_{ij}\, x_j \qquad (i = 1, \dots, m)$$

so that’s how we’d write it.

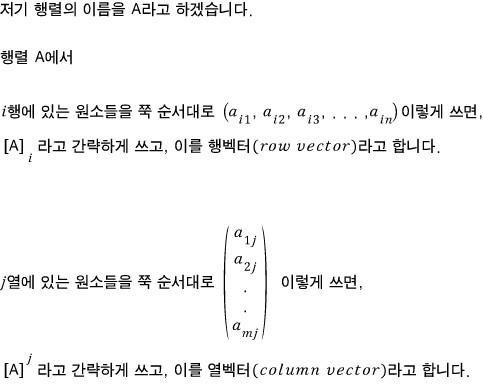
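The general rule can be sketched in a few lines, assuming the matrix is stored as a list of m rows with n entries each. The function name `apply_matrix` and the sample matrix are mine, purely for illustration.

```python
def apply_matrix(A, x):
    """Apply an m x n matrix A (list of rows) to a length-n vector x."""
    assert all(len(row) == len(x) for row in A), "each row needs n entries"
    # Output component i is the sum over j of a_ij * x_j
    return [sum(a_ij * x_j for a_ij, x_j in zip(row, x)) for row in A]

# A hypothetical map from 3-dimensional space to 2-dimensional space
A = [[1, 0, 2],
     [0, 1, -1]]
print(apply_matrix(A, [3, 4, 5]))  # [13, -1]
```

Three numbers go in, two come out, which matches the 2 x 3 shape of the matrix.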

Row vectors and column vectors

A matrix like the one above can also be torn apart by row, or torn apart by column.

No wait —

each piece you get by tearing it by row is called a row vector, and each piece you get by tearing it by column is called a column vector.

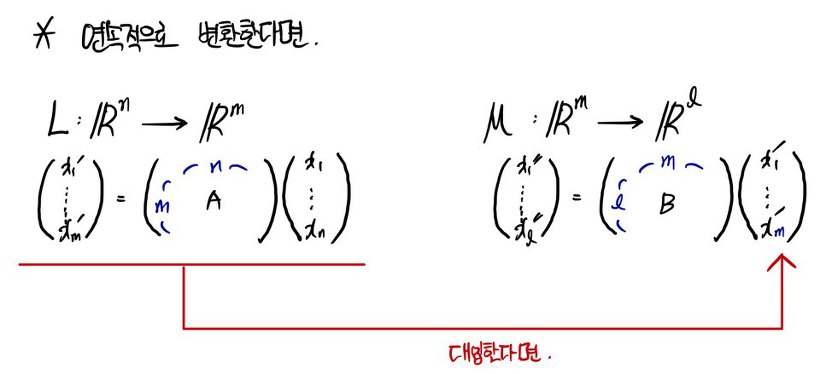

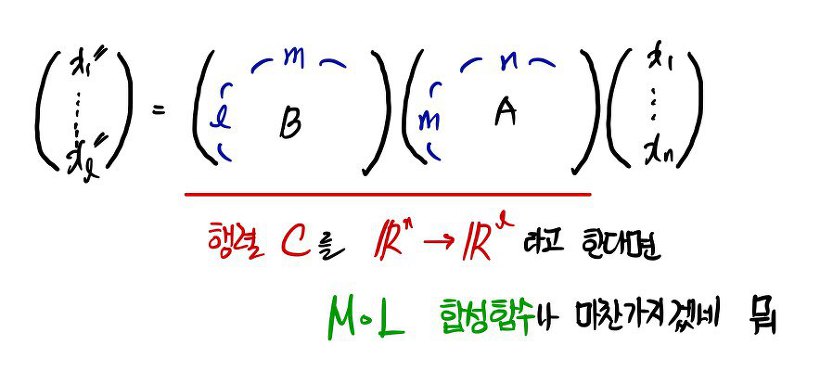
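A small sketch of tearing a matrix both ways, again assuming the list-of-rows storage (the sample matrix is made up):

```python
A = [[1, 2, 3],
     [4, 5, 6]]

row_vectors = [tuple(row) for row in A]   # tear by row
column_vectors = list(zip(*A))            # tear by column

print(row_vectors)     # [(1, 2, 3), (4, 5, 6)]
print(column_vectors)  # [(1, 4), (2, 5), (3, 6)]
```

The column vectors are worth a second look: with the standard basis, column j is exactly where the j-th basis archer's arrow lands.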

What if linear mappings happen back-to-back?!?!?!

This is just composite functions, a.k.a. matrix multiplication — you all know this right?!?!?! (I’m dying to just skip this part)

Matrix multiplication is something everyone can do on autopilot!!!! Right!

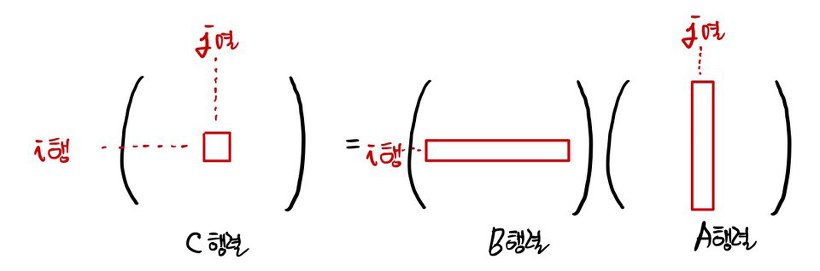

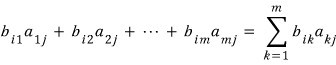

The (i, j) element of matrix C, where C = BA is the product of matrices B and A, is

$$c_{ij} = \sum_{k} b_{ik}\, a_{kj}$$

right?!
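For anyone who wants to see "product = composition" instead of taking it on faith, here is a minimal sketch. The helper names and the sample matrices are mine; matrices are stored as lists of rows.

```python
def apply_matrix(A, x):
    """Apply matrix A (list of rows) to vector x."""
    return [sum(a * x_j for a, x_j in zip(row, x)) for row in A]

def matmul(B, A):
    """Return C = BA, where c_ij is the sum over k of b_ik * a_kj."""
    assert all(len(row) == len(A) for row in B), "inner dimensions must match"
    columns_of_A = list(zip(*A))
    return [[sum(b * a for b, a in zip(row, col)) for col in columns_of_A]
            for row in B]

A = [[1, 2], [3, 4]]
B = [[0, 1], [1, 1]]
x = [5, 6]

C = matmul(B, A)
print(C)  # [[3, 4], [4, 6]]
# Applying C once matches applying A first, then B
print(apply_matrix(C, x) == apply_matrix(B, apply_matrix(A, x)))  # True
```

Note the order: BA means "A first, then B," just like the composite function B(A(x)).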

OK then!! Does the commutative law hold for matrix multiplication???

Of course not!! In general, AB ≠ BA. (Some special pairs do commute, but you can’t count on it.)

But since a matrix represents a function, and multiplying matrices means composing functions, the fact that the commutative law doesn’t hold for composite functions is totally natural — and so matrix multiplication not commuting is also totally natural.
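A concrete pair makes this easy to believe. The two matrices below are my own picks (a 90-degree rotation and a horizontal stretch), not taken from the original post.

```python
def matmul(X, Y):
    """Return the matrix product XY for matrices stored as lists of rows."""
    columns_of_Y = list(zip(*Y))
    return [[sum(x * y for x, y in zip(row, col)) for col in columns_of_Y]
            for row in X]

A = [[0, -1],
     [1, 0]]   # rotate 90 degrees counterclockwise
B = [[2, 0],
     [0, 1]]   # stretch the x direction by 2

print(matmul(A, B))  # [[0, -1], [2, 0]]   stretch first, then rotate
print(matmul(B, A))  # [[0, -2], [1, 0]]   rotate first, then stretch
print(matmul(A, B) == matmul(B, A))  # False
```

Stretching and then rotating is a different motion from rotating and then stretching, so the two products have no reason to agree.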

I’m gonna move on to the next post fast!!!!

One more thing (side note)

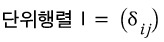

About the identity matrix I — back in high school we kind of grasped I as “roughly the number 1, but for matrices.”

But now that we know a matrix is actually a “function” (more specifically a mapping), if we think about what kind of mapping the identity matrix is —

it’s a “copy mapping”!!!! It just hands you back exactly what you gave it.

Also — tip!!!! Have you learned the Kronecker delta?!?!

Turns out the Kronecker delta is a concept defined in linear algebra.

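The identity matrix I can be expressed super cleanly using the Kronecker delta!!

$$I_{ij} = \delta_{ij}, \qquad \delta_{ij} = \begin{cases} 1 & \text{if } i = j \\ 0 & \text{if } i \neq j \end{cases}$$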
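As a small sketch (the function names are mine), the Kronecker delta builds the identity matrix entry by entry, and applying that matrix really does behave like a copy mapping:

```python
def kronecker_delta(i, j):
    """Return 1 when the indices match, 0 otherwise."""
    return 1 if i == j else 0

def identity(n):
    """Build the n x n identity matrix with entries delta_ij."""
    return [[kronecker_delta(i, j) for j in range(n)] for i in range(n)]

I = identity(3)
print(I)  # [[1, 0, 0], [0, 1, 0], [0, 0, 1]]

x = [7, -2, 4]
# Applying I hands back exactly what went in
print([sum(a * b for a, b in zip(row, x)) for row in I])  # [7, -2, 4]
```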

Originally written in Korean on my Naver blog (2016-01). Translated to English for gdpark.blog.
