Surjective, Injective, and Bijective Functions

We nail down surjective, injective, and bijective functions — and prove why same-dimension linear maps only need one condition to guarantee the other.

Alright, now then — let’s really head for the inverse matrix this time. For real for real.

That was a lie.

We’ll do the inverse matrix properly later. But there’s a concept we need to nail down first.

And that concept is:

When $V$ and $W$ in $L : V \to W$ have the same dimension,

① If $L$ is surjective $\to$ it automatically satisfies the injective condition $\to$ so $L$ is bijective.

② If $L$ is injective $\to$ it automatically satisfies the surjective condition $\to$ so $L$ is bijective.

That’s it. That’s the thing.

We’re gonna prove it. Let’s gooo!!

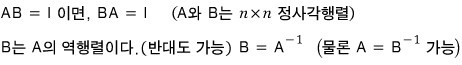

OK so — we learned about inverse matrices back in high school too.

Back then it went like this:

This thing called an inverse matrix? Not every matrix has one. Only some matrices do.

And what was an inverse matrix again — it means an inverse function, right?!?!?!

So then —

if an inverse function exists, the function has to be a 1:1 correspondence… that’s what we drilled in high school, right?!?!?!

Not every function has an inverse function. Only 1:1 correspondences had inverse functions!!!

We’re gonna use that exact same logic, but now in matrix-land. And in linear algebra, the 1:1 correspondence story goes like this:

When $V$ and $W$ in $L : V \to W$ have the same dimension,

① If $L$ is surjective $\to$ injective for free $\to$ bijective.

② If $L$ is injective $\to$ surjective for free $\to$ bijective.

We need to prove this before going anywhere… we’re gonna need it later. (sob)

Alright, destination set. Let’s keep moving.

So — before we get into it, everything we’ve been talking about so far has been:

linear maps between an $n$-dimensional vector space and an $m$-dimensional vector space, like that.

The reason was to keep things as general as possible.

One thing to flag here: this is a finite-dimensional vector space!!! Apparently the story gets a little different in infinite dimensions.

OK. The linear map represented by some matrix $A$ —

let’s say it’s this.

If $A$ has an inverse matrix, that means $L$ has an inverse function, right!?

And “having an inverse function” means $L$ has an inverse function, so —

we can say $L$ is a 1:1 correspondence!!!! Right!?!?!

(For the record — in high school we got pretty hard-brainwashed with: “inverse function exists $\to$ 1:1 correspondence.” That’s why I keep saying it like that.)

If we describe a 1:1 correspondence using the archers-and-targets picture — “no empty targets, and no target with two or more arrows in it” — does that ring a bell???!

OK so this is gonna feel a little different in flavor from high school.

Alright~~~, then —

let’s look at surjective, injective, and bijective.

★ Surjective function (surjective, onto)

(Each archer has exactly 1 arrow. Having 1 arrow each is literally the definition of a function.

A function can’t have a single domain element shooting out two image values into the codomain. If you plug $1$ into $f$ and both $3$ and $5$ come out — that’s not a function. Only when it maps to one value do you get to call it a ‘function’!!!)

For a surjective function, the order goes out to all the archers in $X$ (the domain). And the order is:

“I don’t care if a target gets hit by two arrows or three. Just make sure there are no empty targets.”

A function that obeys that order is surjective.

You’ll have $|X|$ (size of the domain) $\geq |Y|$ (size of the codomain), right?!?!?!

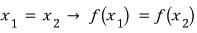

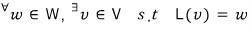

Cleanly in math:

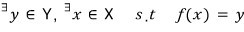

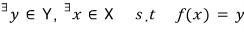

You can also read surjective the other way around:

“Pick any target you like — there’s an arrow that hit it (or some archer who shot at it).” That reading works too!!!

And now —

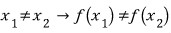

★ Injective function (injective, one-to-one)

Same archers-and-targets analogy, different order:

“(Empty targets are fine.) Just make sure no target gets hit by two or more arrows.” — that’s the order this time.

This gives you $|X|$ (size of the domain) $\leq |Y|$ (size of the codomain).

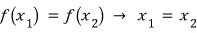

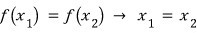

In nice mathy notation:

that’s it. Now let’s also write the contrapositive of that statement:

If I read this — “if a target has multiple arrows in it, those arrows are actually the same arrow”???

Eh, it’s just saying only 1 hit it.

And the long-awaited —

★ Bijective function (one-to-one correspondence, bijection)

This is the command that makes both previous commands hold at the same time.

That is: “Each target gets exactly one arrow stuck in it — and no empty targets either!!!!!”

So you’ll have $|X|$ (size of the domain) $= |Y|$ (size of the codomain).

But here’s the interesting bit. When $X$ and $Y$ have the same number of elements — if you satisfy either the surjective order or the injective order, the other one comes along for free.!!!!

OK so from here —

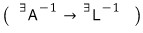

what we were calling $x_1, x_2, f(x_1), f(x_2)$ above,

we’re gonna carry that whole thing over~~~~ to matrices, exactly as is.!!!

Bring it over exactly~~~~ as is.

Surjective = “no empty targets >_<”

The one we had before,

rewritten to fit our situation:

we can write it like this, right!?!

So — pick any element $w$ from $W$, and there has to exist a $v$ such that $L(v) = w$.

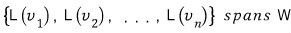

Which means we can also write the same thing like this:

No matter which $w$ we grab, $w$ can be written as a linear combination of the vectors $L(v_i)$.

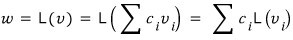

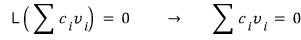

Why is this the same statement? Because $L$ is linear, so

holds, and because of that, whichever $w$ we bring,

it can be expressed as a linear combination of those vectors!!!!!

Injective = “no target with two or more arrows >_<”

The one we had above,

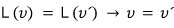

let’s rewrite this one too, to fit our setup:

if the function values of two vectors $v$ and $v'$ are the same, then the two vectors are the same (?) (Two different elements can’t share a target.)

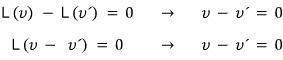

This statement —

can also be expressed like this, so

we can write it like this,

and since this means —

we get to say this.

Now let’s drop in the condition “when the dimensions are equal” and ride this all the way to the conclusion.

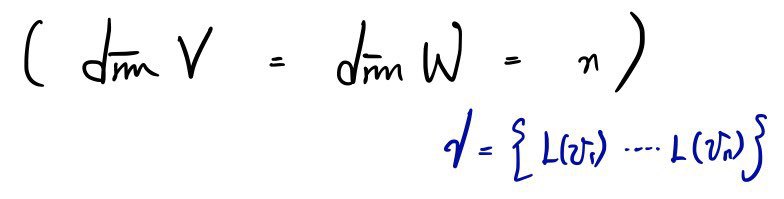

If $V$ and $W$ have the same dimension $n$,

in that setup, if $L$ is surjective, then??????

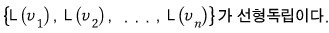

Didn’t we say $\text{span}(\gamma) = W$?!?!?!

Which means every element of $\gamma$, from $L(v_1)$ all the way to $L(v_n)$, got used — not a single one wasted.

So it becomes the statement that $L(v_1)$ through $L(v_n)$ are linearly independent, i.e. $\dim(\text{span}(\gamma)) = n$.

That’s what this is saying.

So $L$ also satisfies the injective condition, and we can see $L$ is bijective.

If $L$ is injective, that means $\gamma$ is linearly independent, right???

Which means nothing inside $\gamma$ overlaps,

so $\text{span}(\gamma) = W$ holds!!!!!

That is, $\dim(\text{span}(\gamma)) = n$,

$\text{span}(\gamma) = W$.

So $L$ is automatically surjective,

and therefore $L$ is bijective!!!

Conclusion:

When $V$ and $W$ in $L : V \to W$ have the same dimension,

① If $L$ is surjective $\to$ injective condition holds for free $\to$ $L$ is bijective.

② If $L$ is injective $\to$ surjective condition holds for free $\to$ $L$ is bijective.

“Has an inverse matrix” ?????

A matrix has an inverse matrix

$\downarrow$

means the linear map it represents has an inverse function

$\downarrow$

which means that function is bijective. (1:1 correspondence)

(cf. Everyone knows a 1:1 function and a 1:1 correspondence function are different things, right?!?!)

Surjective, injective, bijective — these come back later. If you skim past them now, you’ll get hit with the misfortune of having to backtrack later — which is exactly what happened to me lol lol lol. I was studying something further down the line and had to come crawling back to the front to study this stuff again, that kind of thing heh heh heh heh heh hehe.

Originally written in Korean on my Naver blog (2016-01). Translated to English for gdpark.blog.

Comments

Discussion happens via GitHub Discussions. You'll need a GitHub account to comment.