Isomorphism and the Dimension Theorem

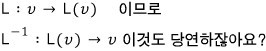

Kernel and image lead us to isomorphism: two vector spaces with a bijective linear map between them (which forces them to have the same dimension) are really just the same space in disguise!!!!

There’s so much more we can squeeze out of kernel and image!!

Today I’m using them to dig into isomorphism and the dimension theorem!!!!

OK so. Not every square matrix has an inverse.

Only special ones do, and this whole time we’ve been talking about linear maps represented by exactly those kinds of matrices —

so let me pull everything together using the proper word for it: invertible matrix.

A matrix that has an inverse is called an invertible matrix.

Which means: the linear map represented by an invertible matrix is a bijection.

Honestly… that’s pretty much everything we’ve covered up to now T_T

And here’s what an invertible matrix looks like, feature-wise:

The kernel is trivial. ($\ker L = \{0\}$)

The image is everything. ($\operatorname{im} L$ is the whole target space)

The third one I haven’t proven yet, so just take it on faith for a sec. We’ll get there.

- If $A$ is invertible, then $A^T$ is invertible too.
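Here's a small sketch to poke at these features, assuming we test invertibility of an $n \times n$ matrix by checking that its rank is $n$. The helper names `rank` and `transpose` and the matrices `A` and `B` are made up for illustration; they don't come from any library.

```python
from fractions import Fraction

def rank(rows):
    """Rank of a matrix (list of rows) via Gaussian elimination with exact fractions."""
    m = [[Fraction(x) for x in row] for row in rows]
    if not m:
        return 0
    r = 0  # number of pivots found so far
    for col in range(len(m[0])):
        # look for a row at or below r with a nonzero entry in this column
        pivot = next((i for i in range(r, len(m)) if m[i][col] != 0), None)
        if pivot is None:
            continue
        m[r], m[pivot] = m[pivot], m[r]
        for i in range(r + 1, len(m)):
            factor = m[i][col] / m[r][col]
            m[i] = [a - factor * b for a, b in zip(m[i], m[r])]
        r += 1
    return r

def transpose(rows):
    return [list(col) for col in zip(*rows)]

A = [[2, 1], [1, 1]]  # hypothetical invertible matrix (det = 1)
B = [[1, 2], [2, 4]]  # hypothetical non-invertible matrix (row 2 = 2 * row 1)

print(rank(A), rank(transpose(A)))  # 2 2 -> A and A^T both have full rank
print(rank(B))                      # 1   -> B does not
```

Full rank for a square matrix is the same thing as "the image is everything", which is why `A` passes and `B` doesn't.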

Oh, and there was this:

When $V$ and $W$ in a linear map $L: V \to W$ have the same finite dimension,

① If $L$ is surjective → it automatically satisfies injectivity → so $L$ is bijective.

② If $L$ is injective → it automatically satisfies surjectivity → so $L$ is bijective.

OK now let’s say $V$ and $W$ have the same dimension!!

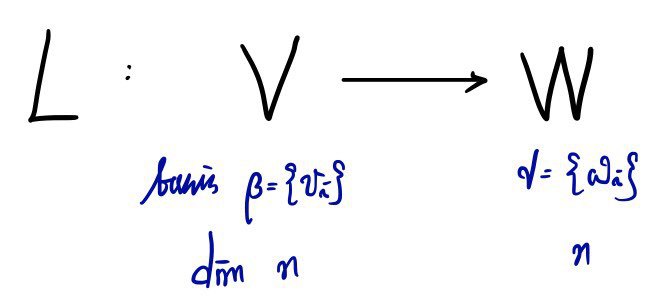

If $L$ is bijective, an inverse exists, and

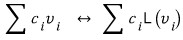

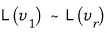

Also, if $v_1, \ldots, v_n$ in $V$ are linearly independent, then $L(v_1), \ldots, L(v_n)$ in $W$ are linearly independent too.

So what I’m trying to say is: $V$ and $W$ are actually

just the same vector space expressed in different bases. That’s the punchline.

When this happens, we say $V$ and $W$ are isomorphic.

(Quick etymology aside — the prefix “iso-” means “same.” Like, “isotope” = same place on the periodic table. So “isomorphic” = same shape, basically.)

The linear map that ties two isomorphic vector spaces together is called an isomorphism!!!!

OK so let me reframe everything with this in hand.

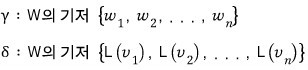

Say $V$ has basis $v_1, \ldots, v_n$ and $W$ has basis $w_1, \ldots, w_n$,

and call the matrix of this linear map $A$.

Since $L$ is bijective, $A$ is invertible!!!!

So now, take some element $v \in V$,

and let’s push this vector through $L$ over to $W$.

Looks like this.

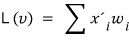

But let me rewrite the left side this way: $v = x_1 v_1 + \cdots + x_n v_n$.

Using that, I mean: $L(x_1 v_1 + \cdots + x_n v_n) = x_1' w_1 + \cdots + x_n' w_n$.

Now what does this mean?

It means: what was expressed in the $\beta$ basis can also be expressed in $\gamma$!!!

Remember we said the ordered tuple of basis coefficients is the coordinates?!?

The tuple of $x_i$’s is the coordinate vector in $\beta$,

and the tuple of $x_i'$’s is the coordinate vector in $\gamma$!!!

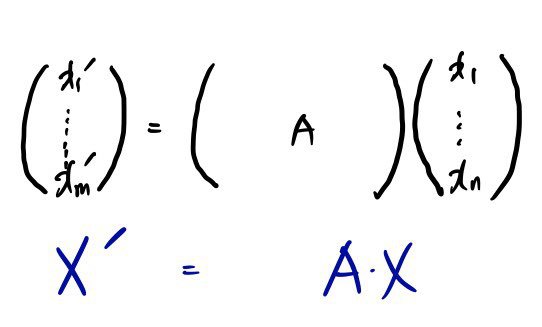

Stack those coordinates as column vectors, and

the relation between them can be written with a matrix: $[L(v)]_\gamma = A\,[v]_\beta$.

You’re probably wondering — why did that just pop out of nowhere?

Don’t worry, this picture will start to make sense. Just keep going!!!

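Now, since $L$ is bijective and linear, look at $v_i$ expressed in the $\beta$ basis: $[v_i]_\beta = e_i$, the column with a 1 in position $i$ and 0s everywhere else.

(meaning: “$v_i$ written in the $\beta$ basis~~~”. That’s what the little subscript $\beta$ on the bracket is doing.)

Then by the same logic, $[L(v_i)]_\gamma = A\,[v_i]_\beta = A e_i$, which is the $i$-th column of $A$.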

Oh ho~~~~~~~

Didn’t we just say these are linearly independent?!?!?!

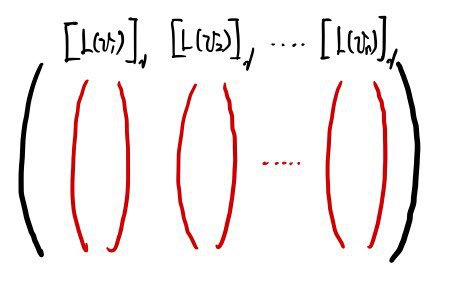

Let’s pull those linearly independent vectors out as columns~

Wait, what is this?!?!?! Isn’t this matrix $A$?!?!

(Or you can think of it the other way: split $A$ into its $n$ columns, and those column vectors are linearly independent of each other, so together they span all of $n$-dimensional space.

Wait?! What?! Each of those column vectors is $[L(v_i)]_\gamma$, isn’t it?!?! So you can read it that way too.)

Therefore $A$ is invertible!!!
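To make "the columns of $A$ are the images of the basis vectors" concrete, here's a minimal sketch. It assumes a made-up $2 \times 2$ matrix `A` standing in for the matrix of $L$ with respect to $\beta$ and $\gamma$; `matvec` is just a hand-written matrix-times-column helper.

```python
def matvec(A, x):
    """Multiply matrix A (list of rows) by the column vector x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

A = [[2, 1],
     [1, 1]]  # hypothetical matrix of L in the bases beta and gamma

e1, e2 = [1, 0], [0, 1]  # [v_1]_beta and [v_2]_beta
col1 = matvec(A, e1)     # [L(v_1)]_gamma: the first column of A
col2 = matvec(A, e2)     # [L(v_2)]_gamma: the second column of A
print(col1, col2)        # [2, 1] [1, 1]

x = [3, -1]              # coordinates of some v in beta
image_coords = matvec(A, x)
combo = [x[0] * a + x[1] * b for a, b in zip(col1, col2)]
print(image_coords, combo)  # [5, 2] [5, 2]
```

Feeding in $e_i$ picks out column $i$, and a general coordinate vector just mixes those columns with the same coefficients $x_i$.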

Let me say the same thing one more time, differently.

The function $V \to W$ takes the basis vectors of one vector space straight onto the basis of another!

That is: “sure, the $v_i$ form a basis of $V$, but after I run them through $L$, they also become a basis of $W$!!!!”

We’ve just dug up another basis of $W$, on top of the $w_i$!! (Remember a vector space can have a bunch of different bases at once?!)

And since $L$ is bijective,

we can pair up each vector in $V$ with exactly one vector in $W$, and the other way around.

The pairing looks like this!!! $x_1 v_1 + \cdots + x_n v_n \;\leftrightarrow\; x_1 L(v_1) + \cdots + x_n L(v_n)$

So the message is: $V$ and $W$ differ in name only. They’re literally the same vector space!!!

Following so far?? T_T

When this happens we say $V$ and $W$ are isomorphic, meaning:

the two have the same algebraic structure.

So why do we care about isomorphism?

Plain and simple — because change of coordinates, which is the very next thing we’re going to do, is itself an isomorphism.

Like, a single vector in 2D can be represented in tons~~~ of different ways depending on which basis you pick.

The map that does that re-representation is an isomorphism!!!!

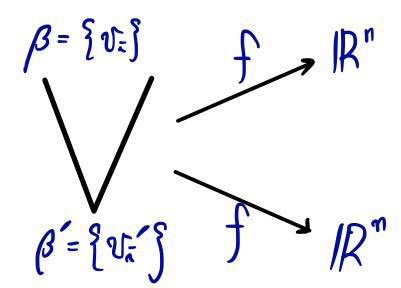

So here’s a picture we’ll use later:

the map $f$ and the map $f'$ are actually both isomorphisms representing the same vector space!!!!

You’ll learn later that this is the change-of-basis matrix,

and the very important conclusion is: the change-of-basis matrix is an invertible matrix.
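A tiny sketch of "same vector, different coordinates", assuming we work in 2D and solve for the coordinates with Cramer's rule. The function name `coords_in_basis` and both bases are hypothetical choices for illustration.

```python
from fractions import Fraction

def coords_in_basis(v, b1, b2):
    """Return (x1, x2) with v = x1*b1 + x2*b2, using Cramer's rule."""
    det = Fraction(b1[0] * b2[1] - b2[0] * b1[1])
    if det == 0:
        # b1 and b2 are linearly dependent, so the matrix [b1 b2] has no inverse
        raise ValueError("b1 and b2 do not form a basis")
    x1 = (v[0] * b2[1] - b2[0] * v[1]) / det
    x2 = (b1[0] * v[1] - v[0] * b1[1]) / det
    return (x1, x2)

v = (3, 1)
standard = coords_in_basis(v, (1, 0), (0, 1))  # coordinates in the standard basis
tilted = coords_in_basis(v, (1, 1), (1, -1))   # coordinates in a second, made-up basis
print(standard == (3, 1), tilted == (2, 1))    # True True
```

The nonzero determinant check is the invertibility condition in disguise: if the matrix whose columns are the basis vectors weren't invertible, the coordinates couldn't be recovered uniquely.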

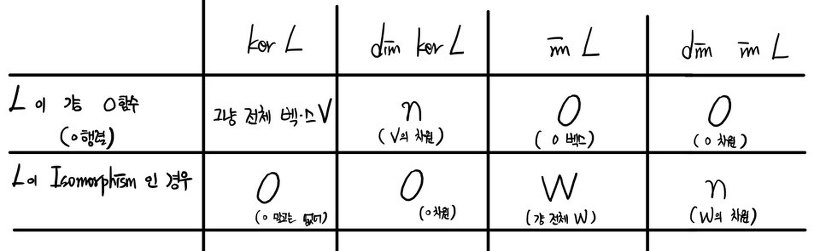

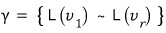

If we say “isomorphism” in the language of kernel and image:

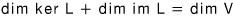

Now let’s see how this kind of thing holds for an arbitrary $L$. (This is where the dimension theorem starts.)

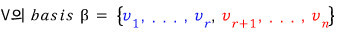

Simplest example first:

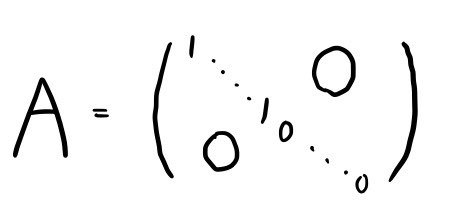

the matrix $A$ of the linear map looks like $A = \operatorname{diag}(1, \ldots, 1, 0, \ldots, 0)$.

(Get the picture? It’s saying: up to some diagonal entry it’s all 1s, after that diagonal entry it’s all 0s, and everything off the diagonal is 0~)

If you tally up all the 1s and 0s on the diagonal, you get $n$.

Call the count of 1s $r$, and the count of 0s $n-r$.

Now when we push the basis vectors $v_1, \ldots, v_n$ through the linear map,

$v_1$ through $v_r$ behave like this: $L(v_i) = w_i$,

and $v_{r+1}$ through $v_n$ become $0$.
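Here's that simplest example as a quick sketch, assuming hypothetical sizes $n = 4$ and $r = 2$ and working purely in coordinates (so basis vectors are the standard columns $e_i$).

```python
n, r = 4, 2  # hypothetical sizes: four basis vectors, two 1s on the diagonal

# Diagonal matrix with r ones followed by n - r zeros
A = [[1 if i == j and i < r else 0 for j in range(n)] for i in range(n)]

def matvec(A, x):
    """Multiply matrix A (list of rows) by the column vector x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

# Push each basis vector e_1, ..., e_n through the map
images = [matvec(A, [1 if j == i else 0 for j in range(n)]) for i in range(n)]
for i, img in enumerate(images, start=1):
    print(i, img)

survivors = sum(1 for img in images if any(img))      # basis vectors that survive
killed = sum(1 for img in images if not any(img))     # basis vectors sent to zero
print(survivors, killed, survivors + killed == n)     # 2 2 True
```

The first $r$ basis vectors survive untouched and the last $n - r$ are sent to the zero vector, so the two counts add up to $n$.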

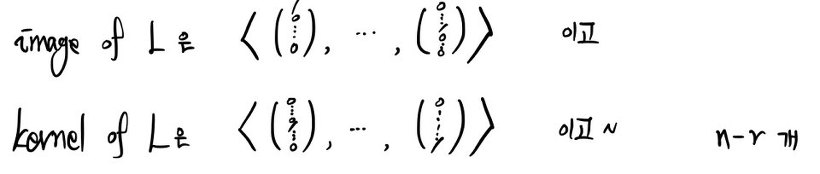

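Let me write that in kernel/image language: $\ker L = \operatorname{span}\{v_{r+1}, \ldots, v_n\}$ and $\operatorname{im} L = \operatorname{span}\{w_1, \ldots, w_r\}$.

Restricted to this case,

we can write it like this: $\dim \ker L + \dim \operatorname{im} L = (n - r) + r = n$.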

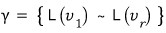

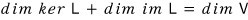

OK now the actual Dimension Theorem.

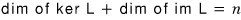

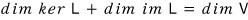

Let me drop the conclusion up front:

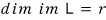


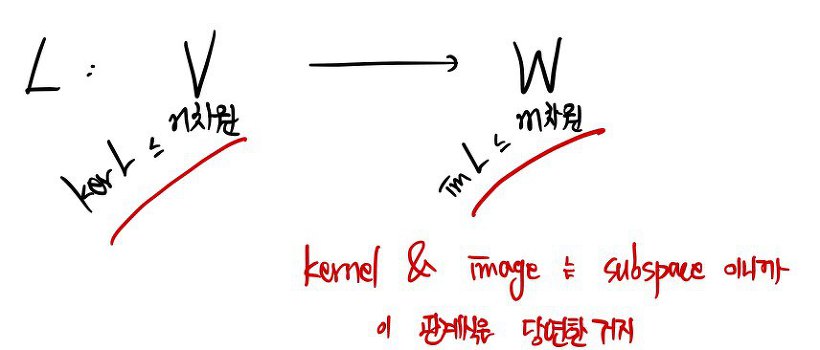

Let me kick it up to the fully general setup.

Say the basis of $\ker L$ has $n-r$ elements.

When we declare these to be the basis of $\ker L$, two things are baked in: they’re linearly independent of each other, and they’re a subset of the whole vector space $V$… that’s all implied.

But the basis of $V$ has to have $n$ elements!!!

Because $V$ is $n$-dimensional.

So the fact that the kernel’s basis has only $n-r$ elements means

that basis, sitting inside $V$, doesn’t have enough vectors to be a basis of all of $V$ (unless $r = 0$).

So with this currently linearly independent set $\beta'$,

I’ll just keep tacking on linearly independent elements one by one~

until it grows into a full basis of $V$!!!!
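The padding step can be sketched like this. One assumption to flag loudly: the candidates I try to add are the standard basis vectors, one at a time, and I keep one only if it raises the rank. That's just one convenient way to do it, not the only one. The names `rank` and `extend_to_basis` and the example kernel vector are made up for illustration.

```python
from fractions import Fraction

def rank(rows):
    """Rank of a matrix (list of rows) via Gaussian elimination with exact fractions."""
    m = [[Fraction(x) for x in row] for row in rows]
    if not m:
        return 0
    r = 0
    for col in range(len(m[0])):
        pivot = next((i for i in range(r, len(m)) if m[i][col] != 0), None)
        if pivot is None:
            continue
        m[r], m[pivot] = m[pivot], m[r]
        for i in range(r + 1, len(m)):
            factor = m[i][col] / m[r][col]
            m[i] = [a - factor * b for a, b in zip(m[i], m[r])]
        r += 1
    return r

def extend_to_basis(vectors, n):
    """Grow a linearly independent list of vectors in n-space into a full basis."""
    basis = [list(v) for v in vectors]
    for i in range(n):
        e = [1 if j == i else 0 for j in range(n)]  # candidate: standard basis vector
        if rank(basis + [e]) > rank(basis):         # keep it only if it is independent
            basis.append(e)
    return basis

kernel_basis = [[1, -1, 0]]  # hypothetical kernel basis inside 3-dimensional space
basis = extend_to_basis(kernel_basis, 3)
print(basis)  # [[1, -1, 0], [1, 0, 0], [0, 0, 1]]
```

Notice that `[0, 1, 0]` gets skipped: it already lies in the span of the first two vectors, so adding it wouldn't keep the set linearly independent.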

While I’m padding it out:

the freshly added ones are in blue,

and the original kernel basis vectors are in red.

From here on out,

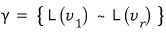

I’m going to argue that this is the basis of $\operatorname{im} L$.

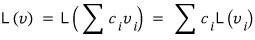

Any element $v \in V$

can be written as a linear combination of $v_1, \ldots, v_n$: $v = c_1 v_1 + \cdots + c_n v_n$.

When we shove this over to $W$ via $L$,

writing it out as a sigma sum:

$L(v) = \sum_{i=1}^{n} c_i L(v_i)$, there it is.

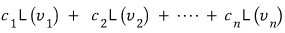

Oh ho?!?!? I’ve seen this thing somewhere before?!?!?!!!!

Ahhh~~~ but wait, the values $L(v_{r+1}), \ldots, L(v_n)$ are all zero. Zero! Because

those guys are in the kernel of $L$!!!??

OK got it.

Which means:

elements of the subspace $\operatorname{im} L$ can be written as a linear combination of $r$ vectors,

makes sense!

Let me bundle them into a set: $\gamma = \{L(v_1), \ldots, L(v_r)\}$.

Can the things bundled into this set $\gamma$ be a basis?

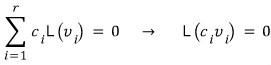

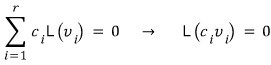

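Are they linearly independent?!?!!!

To check, suppose

$c_1 L(v_1) + \cdots + c_r L(v_r) = 0$

holds. By linearity this is the same as

$L(c_1 v_1 + \cdots + c_r v_r) = 0$,

so $c_1 v_1 + \cdots + c_r v_r$ sits in $\ker L$, which means it’s a linear combination of the kernel basis $v_{r+1}, \ldots, v_n$.

But $v_1, \ldots, v_n$ all together are a basis of $V$, so the only way that can happen is if the coefficients $c_i$ in front are all 0.

So,

$L(v_1), \ldots, L(v_r)$ are linearly independent of each other. (If that step lost you, peek back at the earlier post on “linear independence.”)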

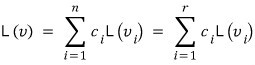

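Therefore, $L(v_1), \ldots, L(v_r)$ form a basis of $\operatorname{im} L$, and $\dim \operatorname{im} L = r$.

So,

$\dim \ker L + \dim \operatorname{im} L = (n - r) + r = n = \dim V$

this equation holds~~~~!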

And that, right there, is the Dimension Theorem.
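If you want to watch the theorem hold on actual numbers, here's a minimal sketch. It assumes we read off a kernel basis from the reduced row echelon form, which is a different route from the proof above (the proof extends a kernel basis; this just computes both dimensions and compares). The function names and the two test matrices are hypothetical.

```python
from fractions import Fraction

def rref(rows):
    """Reduced row echelon form; returns (matrix, list of pivot columns)."""
    m = [[Fraction(x) for x in row] for row in rows]
    pivots, r = [], 0
    for col in range(len(m[0])):
        pivot = next((i for i in range(r, len(m)) if m[i][col] != 0), None)
        if pivot is None:
            continue
        m[r], m[pivot] = m[pivot], m[r]
        pv = m[r][col]
        m[r] = [a / pv for a in m[r]]          # scale the pivot row so the pivot is 1
        for i in range(len(m)):
            if i != r and m[i][col] != 0:      # clear the column above and below
                f = m[i][col]
                m[i] = [a - f * b for a, b in zip(m[i], m[r])]
        pivots.append(col)
        r += 1
    return m, pivots

def kernel_basis(rows):
    """One kernel basis vector per free (non-pivot) column."""
    m, pivots = rref(rows)
    n = len(rows[0])
    basis = []
    for free in (c for c in range(n) if c not in pivots):
        v = [Fraction(0)] * n
        v[free] = Fraction(1)
        for row_index, p in enumerate(pivots):
            v[p] = -m[row_index][free]
        basis.append(v)
    return basis

def dimensions(rows):
    """Return (dim ker, dim im, n) for the map x -> rows * x on n-space."""
    _, pivots = rref(rows)
    return len(kernel_basis(rows)), len(pivots), len(rows[0])

for M in ([[1, 2, 3], [2, 4, 6], [1, 0, 1]],  # hypothetical map from 3-space to 3-space
          [[1, 2, 3], [2, 4, 6]]):            # hypothetical map from 3-space to 2-space
    nullity, rk, n = dimensions(M)
    print(nullity, rk, n, nullity + rk == n)
```

For the first matrix this gives a 1-dimensional kernel and a 2-dimensional image; for the second, a 2-dimensional kernel and a 1-dimensional image. Either way the two add up to $n = 3$, the dimension of the space the map starts from.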

Originally written in Korean on my Naver blog (2016-01). Translated to English for gdpark.blog.
