Change of Basis

Same linear map, totally different matrix depending on your basis — let's dig into why that happens and how A and A' are actually related.

OK so, let me set up one general situation.

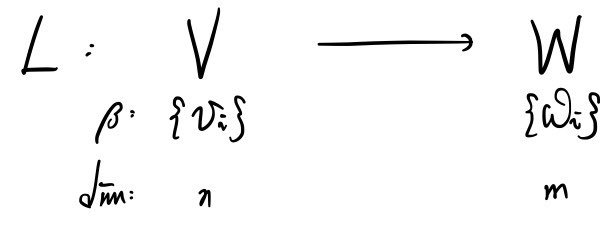

Here’s our linear map.

Now I really want to actually understand what this thing is doing.

From the very start I’ve been preaching the same gospel: if you want to understand a linear map, just track where the basis vectors go. Once you know that, you know everything~~~ about where any element ends up.
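Here is a tiny Python sketch of that gospel. It assumes a made-up map `L` on R² that we only know through where it sends the two standard basis vectors (the numbers are hypothetical). Linearity says L(x·e1 + y·e2) = x·L(e1) + y·L(e2), so those two images are enough to compute L of anything:

```python
# Hypothetical example: all we know about L is where it sends e1 and e2.
L_e1 = (2, 1)   # L(e1), assumed for illustration
L_e2 = (-1, 3)  # L(e2), assumed for illustration

def apply_L(v):
    """Compute L(v) for v = (x, y) using only L(e1), L(e2) and linearity."""
    x, y = v
    # L(x*e1 + y*e2) = x*L(e1) + y*L(e2)
    return (x * L_e1[0] + y * L_e2[0],
            x * L_e1[1] + y * L_e2[1])

print(apply_L((3, 2)))  # (4, 9)
```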

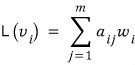

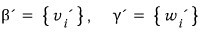

So here’s how I’ll write it:

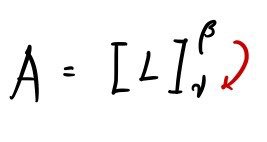

Let a(ij) be the entries of matrix A, and let’s say this matrix A represents the linear map L!! Cool? Cool.

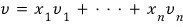

Now now now now — take some element v in V. Expand it in one basis of V:

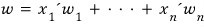

And some element w in W, written using the chosen basis of W:

That’s how we’ll write them.

And — suppose we found another basis β’ for V besides β. And let’s say we also found another basis γ’ for W besides γ.

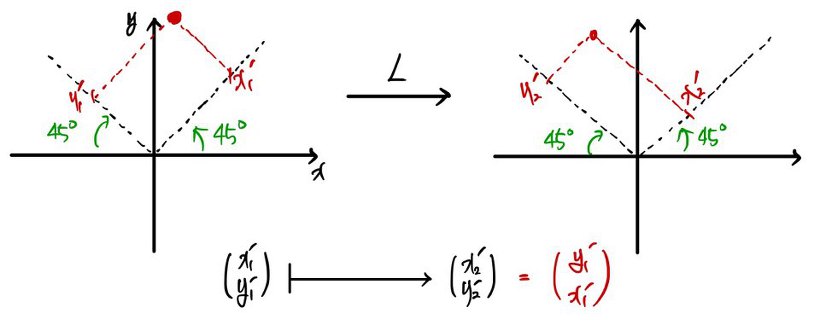

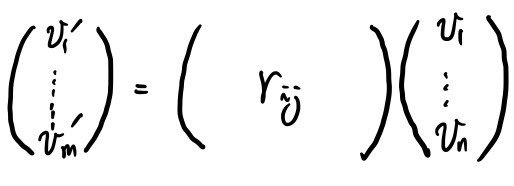

Then obviously we can write things this way too:

Obvious, right?!?!

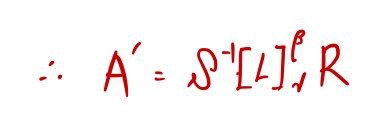

We’ll call this matrix A’. And A’ also represents the linear map L!!!!

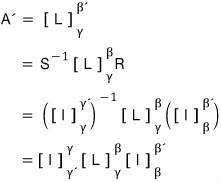

So now — the relationship between A and A’. (Let’s derive A’ from A!!!)

Hold up, before that —

Let me give you a little more intuition.

The same linear map gets a different matrix when you change the basis. OK, but how different? In what way?

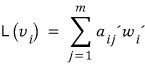

Here’s one example. Take a left-right reflection map L, and let’s see how the matrix shape changes depending on which basis we pick.

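After the reflection, the point $(1, 0)$ becomes $(-1, 0)$, and the point $(0, 1)$ stays $(0, 1)$: the horizontal component flips sign and the vertical one stays put, same idea on each axis.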

So the matrix A representing this L looks like:

$$A = \begin{pmatrix} -1 & 0 \\ 0 & 1 \end{pmatrix}$$

<When using the standard basis>

(See why the matrix has that shape now?! Take the column vector before the map, hit it with the matrix, and yeah — out comes the reflected point!!)
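Here is the same thing in code, as a sketch. The columns of A are just where (1, 0) and (0, 1) land, and multiplying A by a column vector reflects it. The helpers `matrix_from_columns` and `mat_vec` are names I made up for this example:

```python
def mat_vec(M, v):
    """Multiply a 2x2 matrix (stored as a list of rows) by a column vector v."""
    return tuple(sum(M[i][j] * v[j] for j in range(2)) for i in range(2))

def matrix_from_columns(*cols):
    """Stack column vectors side by side; the result is stored as a list of rows."""
    return [[col[i] for col in cols] for i in range(len(cols[0]))]

# Left-right reflection: (1, 0) -> (-1, 0) and (0, 1) -> (0, 1).
A = matrix_from_columns((-1, 0), (0, 1))
print(A)                   # [[-1, 0], [0, 1]]
print(mat_vec(A, (3, 2)))  # (-3, 2)
```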

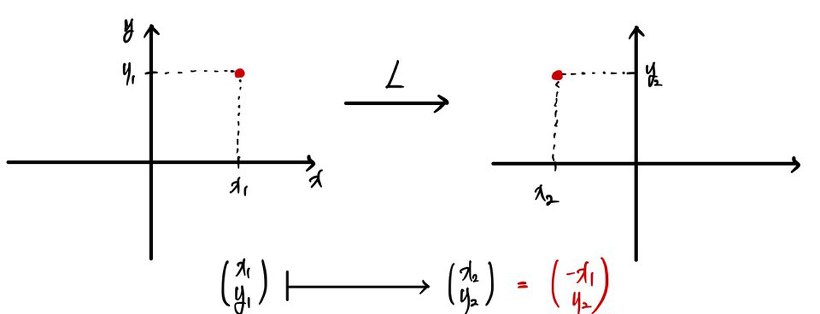

Now let me tweak the basis a bit and run it through the same reflection map.

Not the standard basis — a slanted, crooked one. Convince yourself the matrix A’ representing the linear map is

right?!?!

Same reflection map. Different basis. Different-looking matrix.
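Here is a way to check a slanted-basis matrix yourself. The post's actual slanted basis only lives in the figure, so this sketch assumes a hypothetical one: v’1 = (1, 0) and v’2 = (1, 1). Column j of A’ is the coordinate tuple of L(v’j) in that same basis, found here with Cramer's rule (`coords` and `reflect` are hypothetical helpers):

```python
from fractions import Fraction

def coords(basis, w):
    """Coordinates (c1, c2) of w in a 2D basis (b1, b2), via Cramer's rule."""
    (a, c), (b, d) = basis  # b1 = (a, c), b2 = (b, d)
    det = a * d - b * c     # nonzero because b1, b2 are linearly independent
    x, y = w
    return (Fraction(x * d - b * y, det), Fraction(a * y - x * c, det))

def reflect(v):
    """Left-right reflection: flip the horizontal component."""
    return (-v[0], v[1])

# Hypothetical slanted basis (the post's own basis is only shown in a figure).
slanted = [(1, 0), (1, 1)]

# Column j of A' = coordinates of L(v'_j), written in the same slanted basis.
cols = [coords(slanted, reflect(b)) for b in slanted]
A_prime = [[col[i] for col in cols] for i in range(2)]
print(A_prime)
```

With this made-up basis, A’ comes out as [[-1, -2], [0, 1]]. Same reflection, very different-looking matrix.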

OK, so — depending on how the basis changes, how does the matrix shape change? Hmm, well… let’s see if there’s a clean connection.

This is “coordinate transformation” territory.

What does it even mean to express a point P in a different basis? Well, two different expressions → two different coordinate tuples. Remember what a coordinate is? When you write some element in terms of basis vectors, the ordered tuple of scalars you multiply each basis vector by — that’s the coordinate.

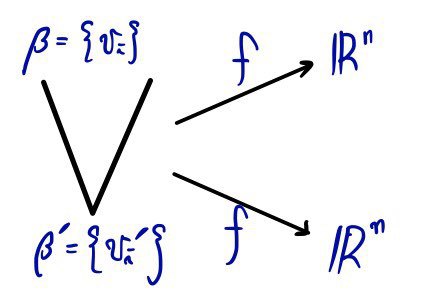

What this picture is saying: whether you write element v in V using the v(i)’s, or using the v’(i)’s —

— there’s a relationship between the two coordinate tuples!! Because the two bases are related to each other!!
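To see two coordinate tuples for one element, here is a sketch with the standard basis and a hypothetical second basis {(1, 0), (1, 1)}. The helper `coords` (made up for this example) solves for the coefficients with Cramer's rule and refuses linearly dependent vectors:

```python
from fractions import Fraction

def coords(basis, w):
    """Coordinates of w in the 2D basis (b1, b2), solved with Cramer's rule."""
    (a, c), (b, d) = basis
    det = a * d - b * c
    if det == 0:
        raise ValueError("not a basis: the vectors are linearly dependent")
    x, y = w
    return (Fraction(x * d - b * y, det), Fraction(a * y - x * c, det))

beta = [(1, 0), (0, 1)]        # standard basis
beta_prime = [(1, 0), (1, 1)]  # hypothetical second basis

v = (3, 2)
print(coords(beta, v))        # same element v ...
print(coords(beta_prime, v))  # ... two different coordinate tuples
```

The point (3, 2) has coordinates (3, 2) in the standard basis but (1, 2) in the second one, since 1·(1, 0) + 2·(1, 1) = (3, 2).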

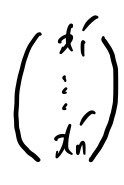

Each element of β’ is itself an element of V, after all. (Both bases are linearly independent! Don’t forget~)

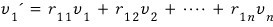

Let me just check the first row.

Get it?!

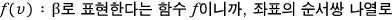

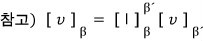

One element of β’ can be written as a linear combination of the elements of β. And the ordered tuple of coefficients in that linear combination is — yep — a “coordinate”. Stack that coordinate as a column vector and you get

— that’s all this is saying~~~

Anyway, big idea: every element of β’ is a linear combination of the elements of β.

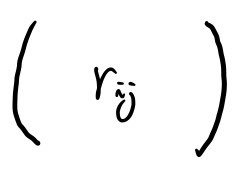

So what we need to take away here is

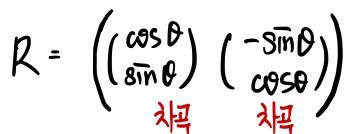

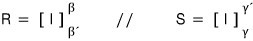

— this matrix right here is exactly the coordinate transformation matrix. And how do you build it?

You just stack those column vectors we found above, side by side~~

In plain English: take the basis vectors of the coordinate system you want to land in (the new basis), express each of them as coordinates in terms of the existing basis, and then stack them as column vectors, one after another, in order, neatly. Boom, there’s your matrix.
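A sketch of the recipe, with both bases chosen arbitrarily for illustration and written in ordinary (x, y) components so a computer can handle them. Read as a map on coordinate columns, the stacked matrix R turns β’-coordinates into β-coordinates, which is exactly what the `assert` checks:

```python
from fractions import Fraction

def coords(basis, w):
    """Coordinates of w in the 2D basis (b1, b2), via Cramer's rule."""
    (a, c), (b, d) = basis
    det = a * d - b * c
    x, y = w
    return (Fraction(x * d - b * y, det), Fraction(a * y - x * c, det))

def mat_vec(M, v):
    """Multiply a 2x2 matrix (list of rows) by a column vector."""
    return tuple(sum(M[i][j] * v[j] for j in range(2)) for i in range(2))

# Hypothetical bases, both given in ordinary (x, y) components.
beta = [(2, 0), (0, 1)]        # existing basis
beta_prime = [(1, 0), (1, 1)]  # new basis

# Recipe: write each new basis vector in the existing basis, stack as columns.
cols = [coords(beta, b) for b in beta_prime]
R = [[col[i] for col in cols] for i in range(2)]

v = (3, 2)
# R turns beta'-coordinates of v into beta-coordinates of the same v.
assert mat_vec(R, coords(beta_prime, v)) == coords(beta, v)
```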

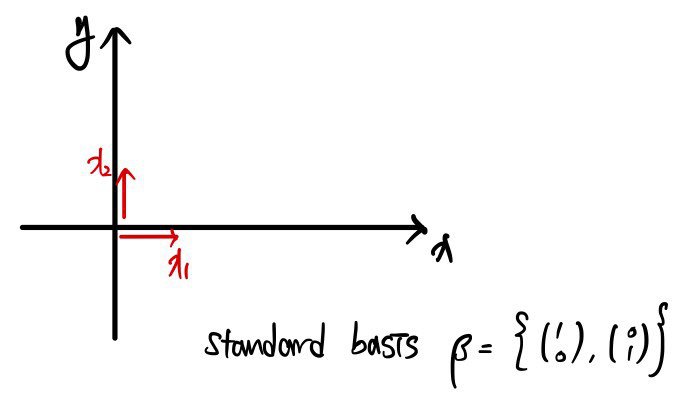

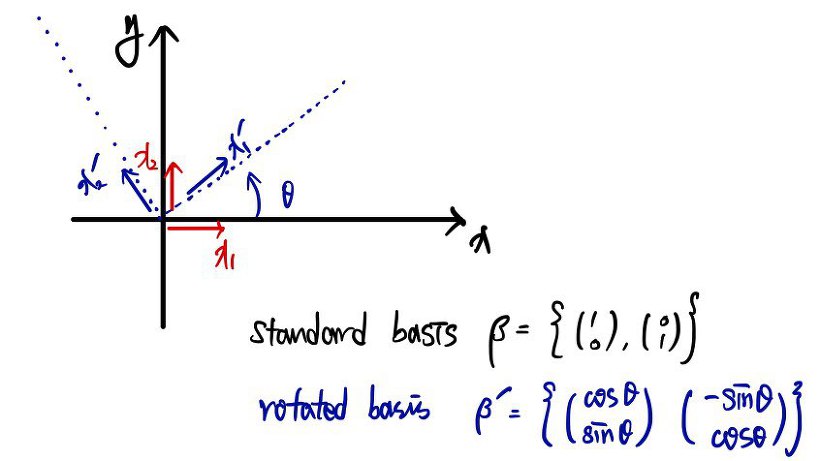

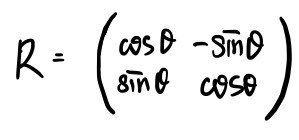

Let me sanity-check this with a rotating coordinate system.

If you want to transform into the coordinates of a rotating frame, does stacking the basis vectors of the rotating frame as column vectors really give you the coordinate transformation matrix you already know?! Or is it total nonsense?! Let’s check!!

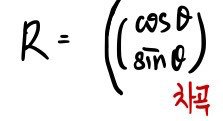

The new basis here is the stuff inside β’, so stacking those vectors one by one should give the coordinate transformation matrix, yeah~~?

Let me stack them.

Whoa?!?!?!!!!!!!!!!!!!!!!!!

It really is the coordinate transformation matrix we already know!!?!?

How clean is that?!
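A numerical version of this check, assuming the rotating frame's basis vectors are (cos θ, sin θ) and (−sin θ, cos θ) in standard components (the usual counterclockwise convention; the figure may label the angle differently). Stacking them as columns gives the familiar rotation matrix:

```python
import math

def rotated_basis(theta):
    """Basis vectors of a frame rotated counterclockwise by theta (assumed convention)."""
    return [(math.cos(theta), math.sin(theta)),
            (-math.sin(theta), math.cos(theta))]

def stack_columns(cols):
    """Put column vectors side by side; the result is stored as a list of rows."""
    return [[col[i] for col in cols] for i in range(len(cols[0]))]

theta = math.pi / 6
R = stack_columns(rotated_basis(theta))
# R is [[cos θ, -sin θ], [sin θ, cos θ]], the familiar rotation matrix.

# The point with frame coordinates (x', y') = (1, 0) sits on the first rotated
# axis, so R should turn those coordinates into standard ones: (cos θ, sin θ).
xp, yp = 1.0, 0.0
x = R[0][0] * xp + R[0][1] * yp
y = R[1][0] * xp + R[1][1] * yp
print(R)
print(x, y)
```

Here R carries frame coordinates to standard coordinates. Its inverse, which for a rotation is just the transpose, goes the other way.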

OK now I’m switching to completely~~~ different notation and starting a new discussion.

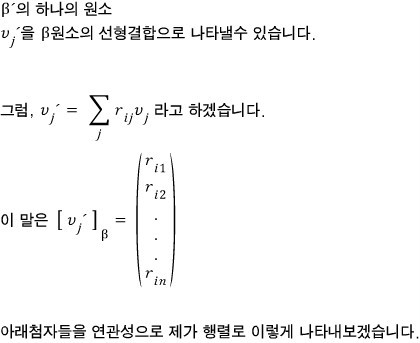

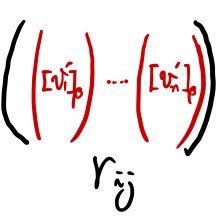

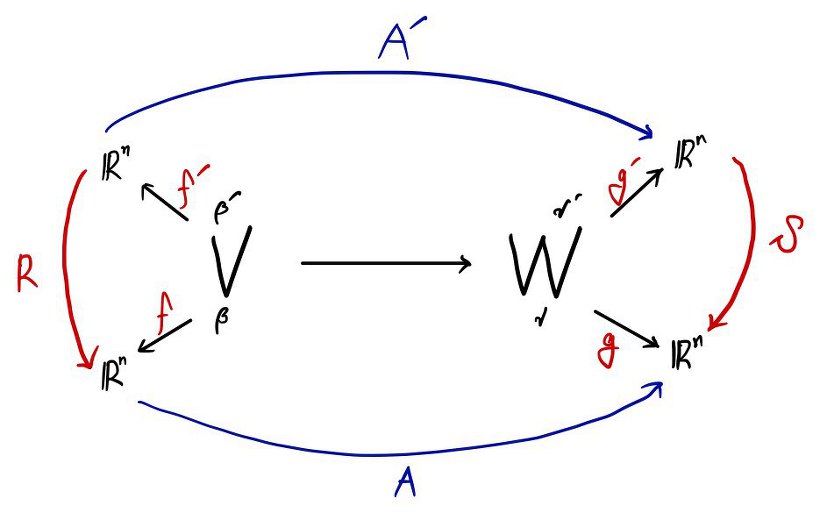

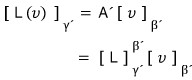

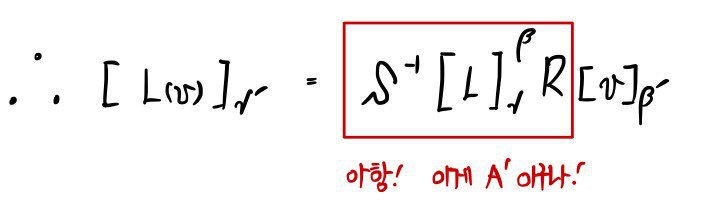

Throwing this picture down:

Inside V space, matrix R does the coordinate transformation between the β basis and the β’ basis. Same deal in W space, between γ and γ’; that’s what the picture is telling you. And the function L connects V and W: the matrix representing it is A when we use the bases β and γ, and A’ when we use β’ and γ’.

Let’s set L(v) = w.

If we want to express that w using the γ basis — well, following the picture above, we should write v in the β basis, and then push it through matrix A!!

As a formula:

Look at the picture above and you’ll see why this formula works.
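Written out, in the common notation where $[v]_\beta$ stands for the column of β-coordinates of v (these symbols are my assumption; the post's own figures may use different ones), the formula reads:

```latex
[w]_\gamma = A\,[v]_\beta ,
\qquad
[v]_\beta = \begin{pmatrix} x_1 \\ \vdots \\ x_n \end{pmatrix}
\quad\text{when}\quad v = x_1 v_1 + \cdots + x_n v_n .
```

In the primed bases, the same statement reads $[w]_{\gamma'} = A'\,[v]_{\beta'}$.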

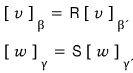

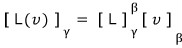

Now — about A. Let me show you how to write, formula-style, the statement “matrix A represents linear map L, and it’s nailed down with bases β and γ”~~~

Don’t forget: the map goes from the superscript basis to the subscript basis.

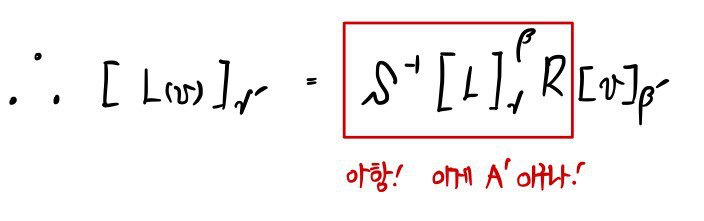

If we plug this notation into the formula above:

That’s how it gets written.

Now let’s do the same thing for A’. Same principle:

That’s it. So:

Now I’ll rewrite the left side as a coordinate transformation inside W space, and the right side using a coordinate-transformed basis inside V space.

(Hard to put in words, so let me just throw up a picture.)

Now move S over to the right side as its inverse — like this!!!
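Spelled out in the same assumed $[\,\cdot\,]$ notation, with R turning β’-coordinates into β-coordinates and S turning γ’-coordinates into γ-coordinates (both built by the stacking recipe), the steps are:

```latex
\begin{aligned}
S\,[w]_{\gamma'} &= [w]_\gamma            && \text{(coordinate change inside } W\text{)} \\
                 &= A\,[v]_\beta          && \text{(}A\text{ represents }L\text{ in } \beta, \gamma\text{)} \\
                 &= A R\,[v]_{\beta'}     && \text{(coordinate change inside } V\text{)} \\
\Longrightarrow\quad [w]_{\gamma'} &= S^{-1} A R\,[v]_{\beta'} .
\end{aligned}
```

Since $[w]_{\gamma'} = A'\,[v]_{\beta'}$ as well, and this holds for every v, the two matrices must agree: $A' = S^{-1} A R$.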

!!Wait!!!!!!

What if matrix S has no inverse?!?!?!

↓

Don’t worry, not even a little. S represents an isomorphism: its columns are the γ-coordinates of the γ’ basis vectors, which are linearly independent, so S is invertible!!! The inverse exists, guaranteed~~~
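For the 2×2 case this is easy to see numerically. A square matrix is invertible exactly when its determinant is nonzero, and stacking the vectors of a basis as columns can never give determinant zero. A small sketch (`det2` is a made-up helper):

```python
def det2(cols):
    """Determinant of the 2x2 matrix whose columns are the given vectors."""
    (a, c), (b, d) = cols
    return a * d - b * c

print(det2([(1, 0), (1, 1)]))  # 1: a basis, so the stacked matrix is invertible
print(det2([(1, 2), (2, 4)]))  # 0: dependent vectors, not a basis
```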

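Anyway, since S and R are coordinate transformation matrices, we can think of them as matrices of the identity map (a copy map): every vector stays exactly where it is, and only the basis used to write down its coordinates changes.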

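Clearly, R was taking us from the normal basis to the prime basis: column j of R says how to build v’(j) out of the v(i)’s. S plays the same role in W, building the γ’ vectors out of the γ vectors. Read as maps on coordinate columns, though, both run from prime to normal: R turns β’-coordinates into β-coordinates, and S turns γ’-coordinates back into γ-coordinates!! That’s why S shows up inverted in the formula.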

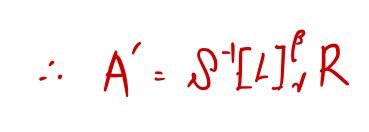

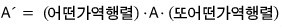

So the formula above is!!

This stuff we just did? It comes back later. Hard. So don’t!! Don’t ever!! treat it carelessly.
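A quick numerical sanity check of A’ = S⁻¹AR, reusing the reflection example with a hypothetical slanted basis (1, 0), (1, 1). The reflection maps V to itself, so here W = V, and choosing γ = β and γ’ = β’ makes S the same matrix as R. The result should match what you get directly: L(1, 0) = (−1, 0) = −1·(1, 0) and L(1, 1) = (−1, 1) = −2·(1, 0) + 1·(1, 1).

```python
from fractions import Fraction

def mat_mul(X, Y):
    """Product of two 2x2 matrices stored as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def inverse(M):
    """Inverse of a 2x2 matrix; fails loudly if the determinant is zero."""
    (a, b), (c, d) = M
    det = a * d - b * c
    if det == 0:
        raise ValueError("matrix is not invertible")
    return [[Fraction(d, det), Fraction(-b, det)],
            [Fraction(-c, det), Fraction(a, det)]]

A = [[-1, 0], [0, 1]]  # reflection in the standard basis (beta = gamma)
R = [[1, 1], [0, 1]]   # columns: hypothetical slanted basis (1, 0), (1, 1)
S = R                  # W = V and gamma' = beta', so S is the same matrix

A_prime = mat_mul(inverse(S), mat_mul(A, R))
print(A_prime)  # entries -1, -2 / 0, 1 (as Fractions)
```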

So to make it land cleanly when it shows up again — let me organize one more thing I haven’t said yet, in the form it’ll get used later.

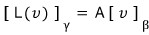

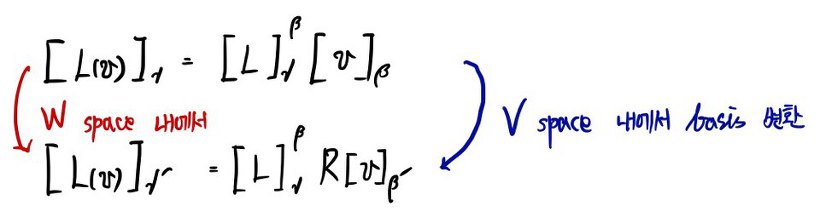

Remember this picture?

Focus right here:

Now —

Look at it like this:

Multiplying an invertible matrix on the left of A — what was that doing?? What was the role of S-inverse?

It was changing the basis of W — the target space.

And multiplying an invertible matrix on the right of A — what was that one doing? What was R’s job?!

Yep — changing V’s basis. Right?!?!

This is a theorem that comes back later, so lock it in!!

- Multiplying an invertible matrix on the right of a matrix → changing the basis of V (the domain).

- Multiplying an invertible matrix on the left of a matrix → changing the basis of W (the codomain).
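Here is a sketch of both rules with a hypothetical map A (standard bases on both sides) and a hypothetical new basis (1, 0), (1, 1) stacked into P. I use the same P on both sides to keep the numbers small: on the right it plays the role of R, and on the left its inverse plays the role of S⁻¹.

```python
from fractions import Fraction

def mat_mul(X, Y):
    """Product of two 2x2 matrices stored as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def inverse(M):
    """Inverse of an invertible 2x2 matrix."""
    (a, b), (c, d) = M
    det = a * d - b * c
    return [[Fraction(d, det), Fraction(-b, det)],
            [Fraction(-c, det), Fraction(a, det)]]

A = [[2, -1], [1, 3]]  # hypothetical L, standard bases on both sides
P = [[1, 1], [0, 1]]   # columns: hypothetical new basis (1, 0), (1, 1)

# Right multiplication = new basis in V: column j of A P is L(new v_j),
# still written in W's old basis.
AP = mat_mul(A, P)

# Left multiplication = new basis in W: column j of P^-1 A is L(e_j),
# now written in W's new basis.
PinvA = mat_mul(inverse(P), A)

print(AP)  # [[2, 1], [1, 4]]
print(PinvA)
```

Column 2 of A P is (1, 4), which is exactly L(1, 1). Column 2 of P⁻¹A is (−4, 3), and −4·(1, 0) + 3·(1, 1) = (−1, 3) is exactly L(0, 1).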

And the thing you must absolutely, absolutely never forget!!

How do you build a coordinate transformation matrix?!

Recipe for R: take the vectors of the new basis β’, write each one in coordinates with respect to the old basis β, and stack them, neatly, as column vectors.

Please — keep this one in your head!!

Originally written in Korean on my Naver blog (2016-01). Translated to English for gdpark.blog.
