Elementary Row Operation Matrices (EROM)

Back at it with the general solution of AX = B — recapping RREF, breaking down dependent vs. independent variables, and chasing down X_0 and ker A.

Alright, picking up where we left off — now I’m gonna talk about the ‘general solution’ of a non-homogeneous equation.

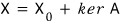

When $AX = B$, the solution we call

(Technically this is sloppy notation — the left side is an element and the right side is a set, so strictly speaking the equals sign is wrong.

But everyone writes it this way, your brain doesn’t choke on it, so let’s just roll with it.)

We already covered this earlier — so why am I lifting up this skirt again?

Should I copy-paste the old stuff….. fine, I’ll copy-paste just the parts we need.

<Copy-paste start>

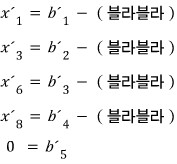

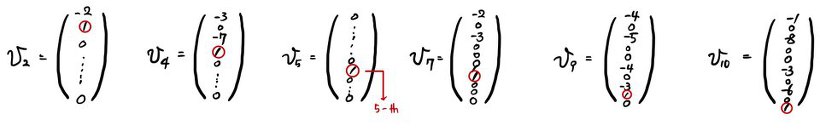

We were in the middle of solving $AX = B$.

Drop the variables tied to the ‘first 1’, shove everything else over to the right side, and write it out:

Note) If $b'(5) = 0$ isn’t satisfied, the solution set becomes the empty set!!! Heads up, heads up.

The thing above isn’t just the answer~~~~~~, so I’ll write it out as a solution set.

The thing about the variables $x'(1), x'(3), x'(6), x'(8)$ tied to the Leading 1 (the first 1) is — once you fix the values of all the other variables, these guys snap into place automatically.

Let’s chew on this for a sec.

It would be weird to write $x'(7) = $ something.

Why? Because $x'(7)$ shows up all over the place in other rows too… that’s the point I’m making here.

Those guys don’t control other rows.

Those variables depend on other variables!!!!!! That’s what I wanted to say!!!!

So those are called ‘dependent variables’, and the variables $x'(2,4,5,7,9,10)$ above are called ‘independent variables’, apparently~

Starting from $x'(10)$ and walking straight up one at a time to find the elements of the solution set is a piece of cake.

These are independent unknowns, so it doesn’t matter what you plug in.

Just shove in values and adjust as needed so the equation works out.

Now this beautifully convenient matrix form is called Row Reduced Echelon Form —

in Korean it’s called something like ‘reduced row ladder form’ or ‘reduced row echelon form’ —

and from here on I’ll just call it by the initials: RREF.

<Copy-paste end>

Hmm…. OK so, instead of finding $X_0$ from the RREF above,

let’s go back to the original matrix form

— this guy —

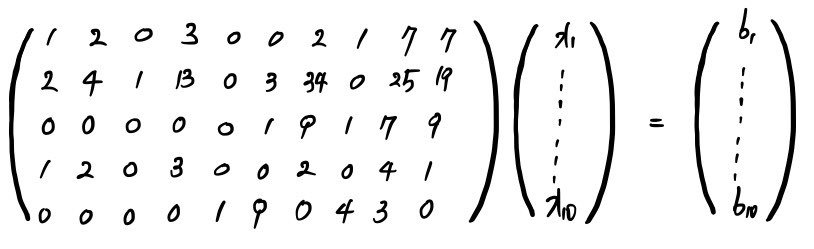

and by jamming zero into all~~~ of the independent variables, I’ll dig $X_0$ out of the solution set. Watch~~~~

Oh, and when you plug it in:

Which is to say — can’t we just call this one of the $X_0$’s????

Now what’s $\ker A$?

In the situation we’re in right now, all I can say is “$\ker A$ is a vector space.”…(sob)

Let me write it out.

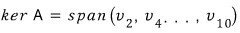

There. Wrote it like that.

The subscripts somehow line up with the independent variables….. ignore that for now and just Follow me!!!!!

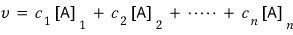

What that expression means is —

nothing more than: it’s the set (vector space) of all elements you can write as a linear combination of $v(2)$ through $v(10)$.

So what would those $v(2) \sim v(10)$ guys be?

I’ll think about which vectors we can span up to build $\ker A$.

($\ker A$ is the set of $X$’s satisfying $AX = 0$. Burn that into your brain.)

(Which is to say — finding the $X$’s that work when you plug in $0$ for all the $b'$ values!!!!!)

How do we cook up $v(2)$?

We can’t touch the dependent variables, so we mess around with the independent ones.

For $v(2)$:

$x(2) = 1$, all other independent variables $= 0$.

For $v(4)$:

$x(4) = 1$, all other independent variables $= 0$.

That’s how you build them all~~.

Let me just dump everything we made into one place:

The important thing is — making this isn’t the point, so let’s keep moving~~~

Like I said, plugging in $B = 0$,

these are the vectors we built one at a time, carefully.

But hold on — there’s a wrinkle we need to address.

Didn’t we change the original very-first $5\times 10$ matrix into RREF???

So in the form $AX = B$, by sliding around and multiplying rows by constants

and adding/subtracting rows to flip it into RREF,

it’s not $AX = B$ anymore — it’s $A'X = B'$.

The reason I’m bringing this up:

it means the solution set isn’t $X = X_0 + \ker A$, it’s $X = X_0 + \ker A'$.

Which is to say — we’ve been sailing along this whole time on the belief that $\ker A$ and $\ker A'$ are the same thing!!!!!!

(I’ll prove it in a sec. Hang tight just a tiny bit for the fact that $\ker A = \ker A'$.)

OK~~~~~~ let’s go step by step.

First, $\dim \ker A = 6$. Since it’s a space spanned by 6 vectors from $v_2$ through $v_{10}$, the dimension of that thing is, sure enough, 6.

And since $\dim A = 10$, by the dimension theorem, $\dim \mathrm{Im}\, A = 4$.

Up to here makes sense, right?!?!?!?!!!

Ahem~~~~~~ now let me toss a thing out there.

The dimension of $\mathrm{Im}\, A'$ is 4.

Out of nowhere, right??? lol lol Let’s look.

Since we’re dealing with $A'$, gotta look at the RREF.

Here’s the RREF, hehehe — now look here.

The bottom row is the zero vector, so no matter what element you plug in for $X'$, the last entry $b(5)'$ of the $B'$ vector is rock-solid $0$.

Which means $\dim \mathrm{Im}\, A' = 4$.

Let me state the conclusion first:

$$\dim \mathrm{Im}\, A = \dim \mathrm{Im}\, A' = 4$$OK, now at this point let’s learn a little more about the transformation rules.

The transformation rules we use while doing Gaussian elimination are just: multiply by a constant, then add or subtract. (Subtraction is actually just multiplying by $-1$ and then adding!!?!)

Honestly this is just the elementary-school way of cranking through systems of equations….

But applying that principle in the direction of making the first $1$ (Leading 1) appear is exactly the method for making RREF.

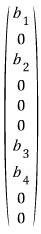

① Multiply one row by a constant.

② Swap two rows, or just leave them where they are.

③ Add a constant multiple of one row to another row.

This is literally elementary-school stuff — but let me tell you what it actually is.

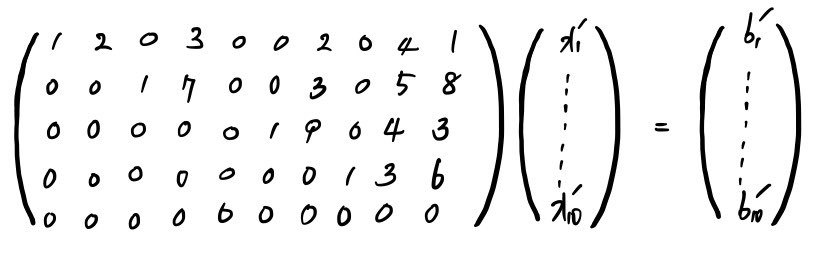

Look closely. I’ll do this with an $n \times 2$ matrix.

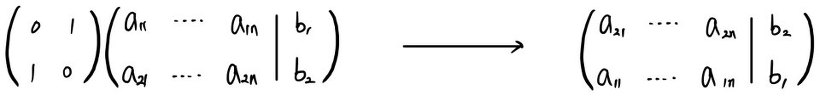

Swapping the two rows looks like:

This is fine, right?? Even if you do it like this????????

But the meaning of swapping the rows like that —

— you can also say it’s multiplying by the matrix $\begin{pmatrix}0 & 1 \\ 1 & 0\end{pmatrix}$.

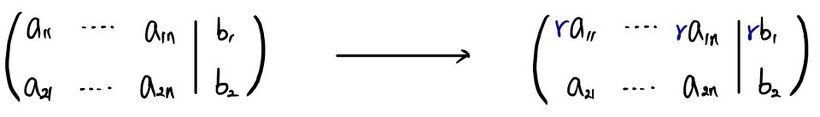

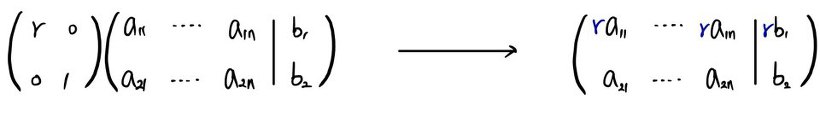

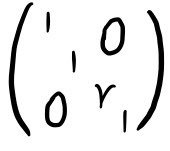

Multiplying one chosen row of the matrix by a constant — that means:

Doing this doesn’t break the system of equations, right?????

But multiplying by a constant like that is actually:

— which is the same as multiplying by the matrix $\begin{pmatrix}r & 0 \\ 0 & 1\end{pmatrix}$.

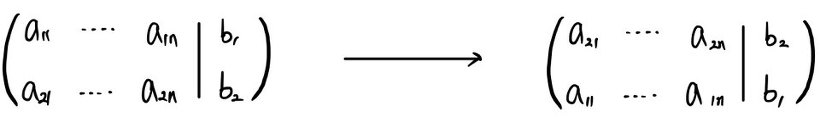

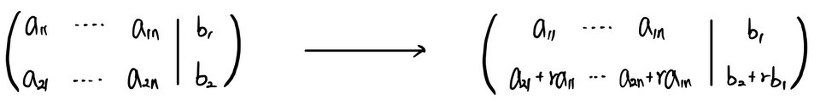

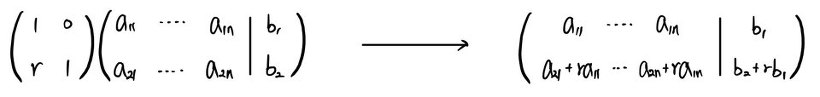

And finally, adding a constant multiple of one row to another row:

Doing this doesn’t break the system either, does it?!?!?!?!?

But this move is actually:

— same thing as multiplying by $\begin{pmatrix}1 & 0 \\ r & 1\end{pmatrix}$.

These transformation rules — even on a huuuge~~~ matrix — at the end of the day,

they boil down to picking two rows out of however many and doing one of those moves.

That’s why I just drew it as an $n \times 2$ matrix with 2 rows.

And the point I’m making here isn’t “eyeball it and do this~ do that~” —

what I want to drive home is: “The act of multiplying by a particular matrix on the left is the act of applying a transformation rule.”

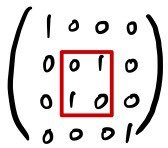

cf. You might be thinking “this won’t scale to a big matrix” — so just to nail down the intuition, here’s a $4\times 4$ example:

If you want to swap row 2 and row 3 in a $4\times 4$ matrix,

— it’s the same as multiplying by this matrix.

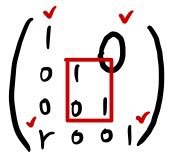

If you want to multiply row 3 by $r$,

— same as multiplying by this matrix.

And if you want to add $r$ times row 1 to row 4,

— it’s the same as multiplying by this matrix.

These 3 types of row transformations are called Elementary Row Operations in English,

and performing them

is the same as multiplying a special matrix on the left, right????

That special matrix is called the Elementary Row Operation Matrix. (I’ll call it EROM.)

So basically — multiply some unorganized matrix $A$ by various EROMs $k$ times in a row, and you get RREF.

(For sure $k$ is finite — not infinite, just to head that off.)

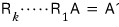

Which is to say:

OK now here is where I’ll truly cough up the fact that $\ker A$ and $\ker A'$ are the same.

The process by which $AX = B$ turned into $A'X = B'$ — that was multiplying both sides of $AX = B$ by EROMs one after another, identically on each side.

So just:

— take this whole thing —

— and treat it as this.

Then again — $\ker A$ is

the set of $X$ satisfying $AX = 0$,

and $\ker A'$ is the set satisfying $A'X = 0$,

i.e., the set of $X$’s satisfying $RAX = 0$.

But since $R$ is invertible,

the $X$’s that satisfy that are exactly the same as before.

So the conclusion: $\ker A = \ker A'$ ~~~~~yay~~~~

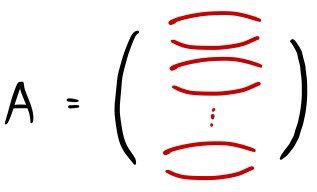

OK and let’s also pick up one more thing called the Row space.

Take some matrix $A$ and rip it apart~~~ row by row, treat each torn-off row as a row vector,

and the space you span up out of those row vectors is what they call the Row space, apparently.

The notation:

— I’ll write it like this.

But then — if you turn that $A$ into its RREF $A'$, obviously there’s also a row space you get from ripping apart the rows of $A'$ and spanning them, right???

This one, this one.

Are the two vector spaces the same? Or different??????

Since we just learned that $A'$ is the result of multiplying EROMs onto $A$,

we can pretty easily say: “the two vector spaces are the same!”

Why? Any element $v$ inside $V(A)$ can be written as

— like that,

and the elements inside $V(A')$ — well, $A'$ was born from $A$ getting multiplied by EROMs, no matter how you cut it,

which just means the constants in $A$’s entries got jiggled a little, right???

(I feel like what I just said came out a bit wonky, but I trust everyone gets the gist. If not, I’ll patch it up later…..)

(Oh, and also — the subscript under the brackets $[\,]$ means ’the $k$-th row vector’ — we did this way back at the start~~~ but in case anyone didn’t read from the beginning, just sliding in a quick refresher~)

So the conclusion: the row space of $A$ (

) and the row space of the RREF $A'$ (

) are the same as each other! That’s the punchline.

OK, I’ll cut it here for now.

Originally written in Korean on my Naver blog (2016-01). Translated to English for gdpark.blog.

Comments

Discussion happens via GitHub Discussions. You'll need a GitHub account to comment.