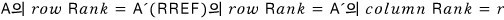

The Rank Theorem

We poke at whether RREF is unique, then laser in on invertible matrices to show their RREF has to be the identity — and that's the Rank Theorem taking shape!

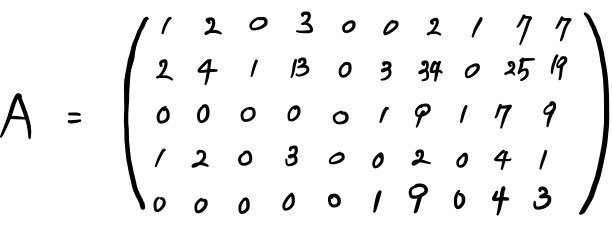

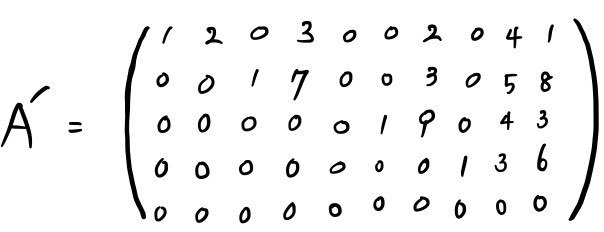

The RREF $A'$ we got by multiplying a bunch of EROMs together —

we got as far as the fact that the row spaces of these two,

$V_A$ and $V_{A'}$, are the same. This is a crucial fact we’ll lean on later. Tuck it away.

OK so before we move on, there’s one more thing we should poke at.

Does an RREF always exist????

I mean — yeah, no question. We can always crank one out, no conditions attached.

But wait — couldn’t there be more than one way to make an RREF, besides the route we took??

And every time we did it, would the RREFs that pop out be the exactly same?

In other words: is the RREF unique, or not?

We don’t know yet.

And here’s another thing we don’t know:

We confirmed that the Row space doesn’t change when you convert to RREF, but we have no idea yet whether the Column space changes or not.

We’ll figure it all out step by step, but not right now —

right now we’re going to laser in on something called an invertible matrix $A$.

We’ll come back to those open questions later!!!!! Promise!!

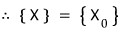

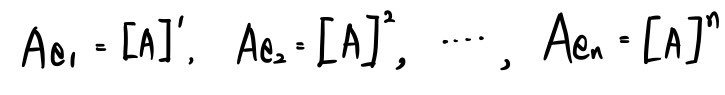

First up: when the $n \times n$ matrix $A$ is invertible, if we tear $A$ apart into its ‘columns’…

the column vectors that come out,

,

, . . . ,

are linearly independent. Because $A$ is invertible.

Also, if $A$ is invertible, its transpose $A^T$ is also invertible.

Which means the column vectors of $A^T$ are also linearly independent (because, again, invertible).

And saying the columns of $A^T$ are linearly independent is the same as saying the rows of $A$ are linearly independent. (Transpose = swap rows and columns, right?)

In other words —

the dimension of the column space of $A^T$ being $n$ means the dimension of the row space of $A$ is $n$!!!!!!!

OK so now: when we have an $n \times n$ invertible matrix $A$, and we call the RREF we get by row operations $A'$ — would there be an all-zero row in $A'$?!?!?!?!?!

Nope. We just said invertible, so we can’t have a zero vector hanging around with all entries 0. If there were a zero row, you’d be trying to span an $n$-dimensional space with only $n-1$ row vectors — impossible!!!

And where do the leading 1s of $A'$ have to live?!?!?!

The first leading 1 has to sit at $a_{11}$.

If it didn’t, that would mean a zero row gets stuck somewhere below, so a leading 1 has to be there. Following that logic all the way down, the RREF of this $A$ has no choice but to be the identity matrix.

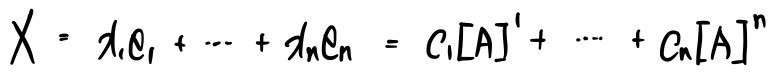

If we look at this from the “multiplying EROMs” point of view,

Let me throw in a concrete example.

I’ll find the inverse of this $A$.

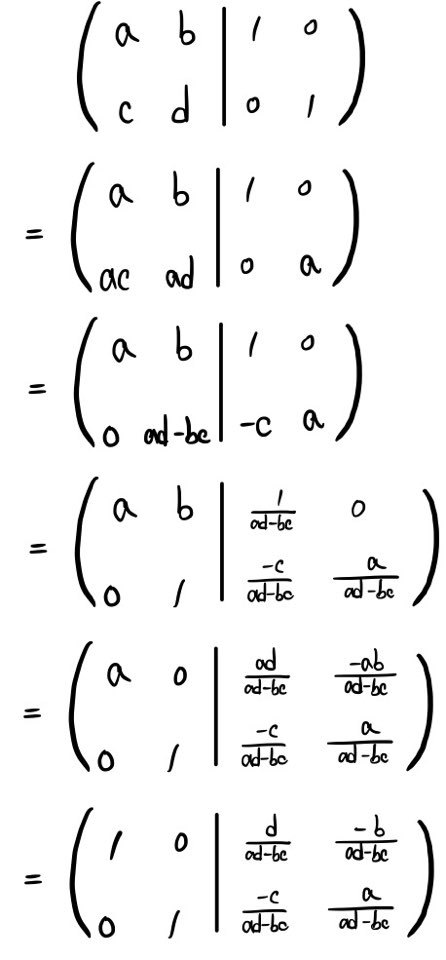

Writing it out like that, I’ll find it by chaining EROMs on both the left and the right side.

(You can also just think of it as continuously writing out the transformation rules — same thing.)

Because $A$ is invertible, taking it to RREF lands us on $I$ (the identity), and what’s sitting on the right side is $R$ — and the identity of $R$ is, precisely, $A^{-1}$.

Want to double-check on something we already know cold?!?!

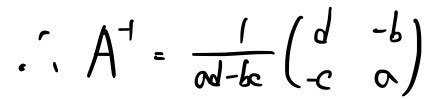

Same principle, here we go!!!

If $ad - bc = 0$, that means it doesn’t exist, right?!?!?!?

Which gives us the result that there’s no inverse!!!

That’s why for the $2 \times 2$ case we call $ad - bc$ the determinant, right?!?!?!?

This is, you know, the stuff everybody learned ages ago.

There’ll be more on this later, so don’t be too let down. The real focus right now is somewhere else~~~

Now let’s look at the solution of $AX = B$ when $A$ is invertible.

For the non-homogeneous equation $AX = B$, the main concern was: does a solution even exist???? — that’s it.

For the homogeneous equation $AX = 0$, the main concern was: does a non-trivial solution exist??!?!! — that was it.

But now, with the extra assumption that $A$ is invertible, for the non-homogeneous $AX = B$ —

$X = A^{-1}B$, so a solution unconditionally exists.

And for the homogeneous $AX = 0$,

$X = A^{-1} \cdot 0$ becomes

$X = 0$,

so a non-trivial solution does not exist.

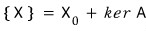

Earlier we said the solution set for the non-homogeneous equation is

the particular solution $X^{(0)}$ definitely exists, $\ker A$ has nothing in it but $0$, so

there’s only one solution!!!!

So: in $AX = B$, when $A$ is invertible — one solution!

In $AX = 0$, when $A$ is invertible — one solution!

Alright, NOW let’s get into the real main event — the Rank Theorem.

Before we dive in: the Row space doesn’t change when $A$ goes to its RREF $A'$. We don’t yet know whether the RREF is unique. We don’t yet know whether the Column space is invariant either!!!!

We don’t even know whether the dimension of the Column space is invariant. Don’t know it yet!

Please keep all of that in mind!!!

OK, let’s actually go go go go Rank Theorem!!

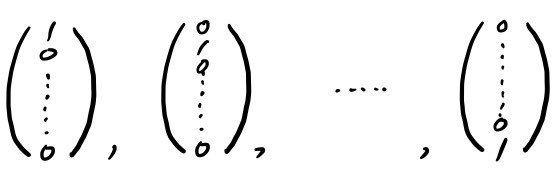

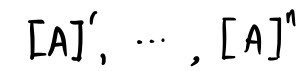

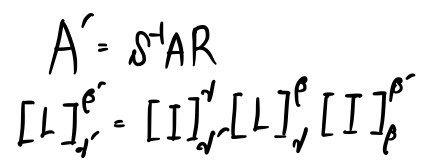

What if, in the $X$ slot of $AX$, we plugged in

(unit vectors)

something like these?!?!?!

Probably the column vectors are gonna pop out, right.

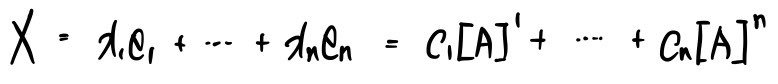

The unit vectors we just multiplied —

we’ll write them like this:

Then this will probably click too.

What I just said above is exactly what the picture above shows, the whole thing —

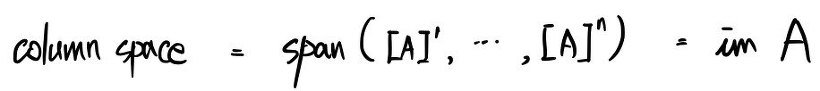

The reason I said it was: whatever $X$ is,

it can be written as a linear combination of these.

And the reason I said that in turn —

was to give you a feel for the idea that the image of the linear map that $A$ represents is exactly the space spanned by the columns of $A$.

In other words —

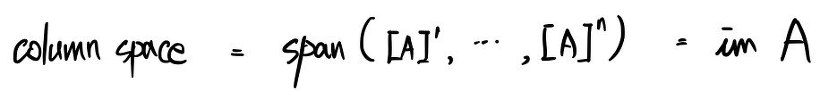

OK so now that we know Row space and Column space, let’s see what Rank is and what the Rank Theorem says.

First — what is Rank?

These Row spaces and Column spaces have a dimension, right????? That dimension is what we call Rank.

dim of Row space = Row Rank.

dim of Column space = Column Rank. So they say.

1. If $A$ is an $n \times n$ invertible matrix —

since it’s invertible, the columns are linearly independent among themselves, and so are the rows, right???

So the dimension of the span is $n$ for both.

Therefore Row Rank = Column Rank.

2. When some matrix $A'$ is in RREF —

The dimension of the Row space of $A'$ is the number of non-zero rows.

But also — look at the leading 1s.

Everything above and below that 1 is 0!!!! Because it’s RREF.

Which means the row that has the leading 1 can’t be built out of the other rows!!

So Row Rank = number of rows with a ’leading 1’!!!

By the same logic for Column Rank, the minimum Column Rank is the number of columns that are tied to a leading 1.

But could there be more?!?!?!!!!!

Look at the row attached to a leading 1: nothing before the 1, and after it there may or may not be numbers…

Ahhh!!!!! If we just take the columns tied to the leading 1s, we can build the others as a linear combination!!!

(I’m a little uneasy doing this in words alone with no diagram — are y’all reading my mind…? T_T I’m nervous.)

So we can write it like this:

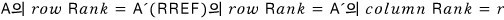

Row Rank = Column Rank = number of leading 1s.

3. If it’s a general rectangular matrix —

first off, we can always pin it to RREF, no conditions.

Once we do, Row Rank and Column Rank are equal by case ② above.

But — as we said — we don’t know whether the Column Rank changes or not when we go to RREF.

We do know Row Rank doesn’t change, right???? Call Row Rank = $r$.

The Column Rank of $A' = r$ means dim of $\text{Im}\,A' = r$.

(Following along?? If you’ve been with me from the start you probably are, but…? I dunno if I should just mumble through this and keep going…? T_T)

Ohhh~~~ but we do know that dim of $\text{Im}\,A$ and dim of $\text{Im}\,A'$ are the same, even after going to RREF, right?!?!?!?!

From dim of $\text{Im}\,A$ = dim of $\text{Im}\,A'$, applying the dimension theorem gives us

dim of $\ker A$ = dim of $\ker A'$ — boom, we can deduce that!!!!!!

Now apply the dimension theorem to $A$:

dim of $\text{Im}\,A$ = $n$ − dim of $\ker A$, right!!!?

For an $n \times m$ matrix, what’s the dimension — is it $n$, or $m$?!?!?!1

It’s $n$, $n$!!!! And this $n$ is the horizontal length!!!!!

In other words, the number of columns.

We said above that dim of $\ker A$ is the number of the leading 1s!!!

Which is the same as saying it’s the number of dependent variables.

So I’ll rewrite dim of $\text{Im}\,A$ = $n$ − dim of $\ker A$ as:

Column Rank = $n$ − (number of leading 1s) = $n$ − Row Rank.

$$\text{Column Rank} = n - \text{Row Rank}$$This result — looking suspiciously like the dimension theorem — is what we call the Rank Theorem.

And along the way we accidentally figured something out:

the Column Rank doesn’t change either when we convert to RREF.

Or in other words, the dimension of the Column space doesn’t change.

Alright then — finally — let’s check on the uniqueness of the RREF.

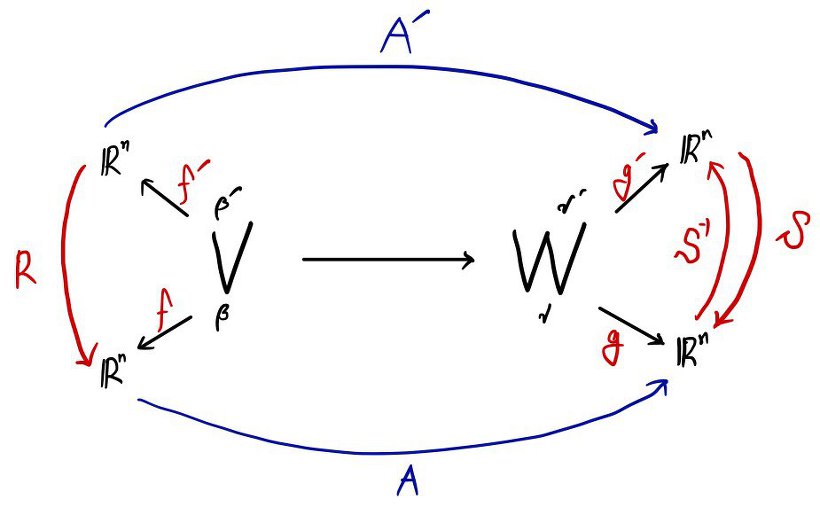

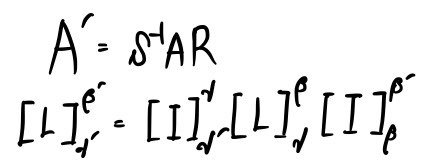

Didn’t we say that taking $A$ to its RREF $A'$ is the same as multiplying some invertible matrix on the left side?

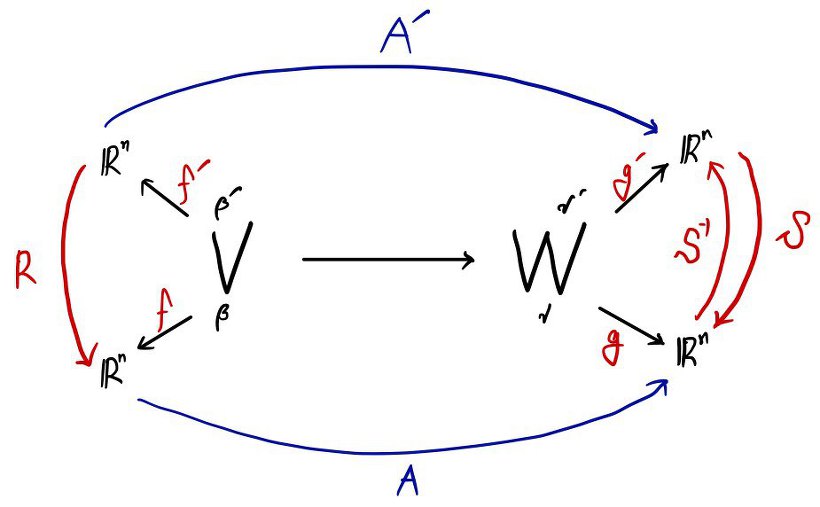

And waaay back in the ‘change of basis’ part,

we said that multiplying some invertible matrix on the right of a matrix can be read as changing the basis of $V$,

and multiplying an invertible matrix on the left of a matrix can be read as changing the basis of $W$.

We absolutely, definitely concluded it like that at the very end of #8 and wrapped up that post.

Multiplying some invertible matrix on the right of a matrix = changing the basis of $V$.

Multiplying an invertible matrix on the left of a matrix = changing the basis of $W$.

OK so —

to sum it up:

Going to RREF = multiplying invertible matrix EROMs on the left of $A$ = changing the basis of $W$ (the target space).

Meaning — we find an appropriate basis of $W$, and the linear map expressed under that basis is precisely what $A'$ was.

Therefore the shape of $A'$ is unique!!!!!!!!!!!!!!!!!!!! Everything tied up now!!!!!!!!!!!!

Originally written in Korean on my Naver blog (2016-01). Translated to English for gdpark.blog.

Comments

Discussion happens via GitHub Discussions. You'll need a GitHub account to comment.