Multilinear Forms, Alternating Forms, and the Epsilon Symbol

We finally tackle *how* to tell if a matrix is invertible by wading through multilinear forms, alternating forms, and the epsilon symbol — all the groundwork for determinants!

OK so up to this point we’ve been waving our hands and going “well, if the matrix is invertible…” and rolling the discussion forward from there.

Now we’re actually going to figure out how to tell whether a matrix is invertible or not.

You already saw it coming!!! The determinant!!!

To get there we first need to talk about something called a Multi-linear Form.

Heads up right out of the gate: this is not a linear function. lol

I know, I know — “linear” is right there in the name, but nope, not a linear function. T_T

Don’t stress about it, you’ll get the feel for it as we go!!!

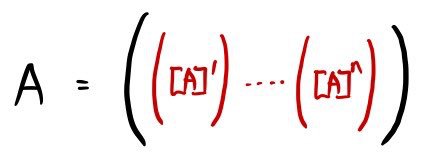

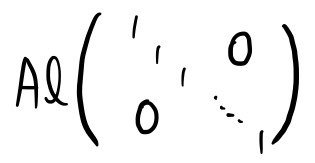

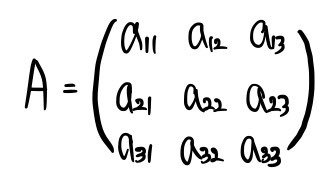

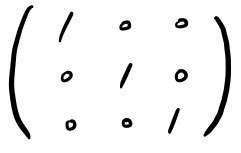

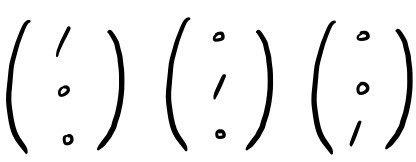

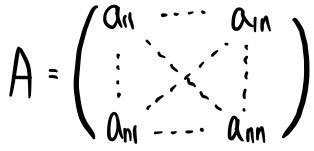

OK. First let’s write an n × n matrix A in terms of its n column vectors.

We’re going to think of the matrix A as an ordered tuple of n vectors!!! Same vibe as writing out the coordinates of a vector in a specific order.

And we’re going to define a function Ml.

What does it actually do?

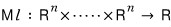

It eats those n n-dimensional vectors as an ordered tuple and spits out ‘some number’.

Now now now now now — that’s not the part we need to take away from “Multi-linear Form”.

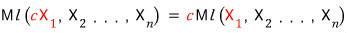

The part we actually carry forward is the characteristic property of Ml.

Ml is “a linear function with respect to any one column.”

That is —

when we feed this ordered tuple into Ml and get a number out!!!!

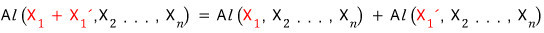

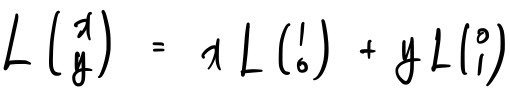

what does “linear with respect to any one column” mean? Let me show it with the first column.

I showed it for the first column,

but the same property works for the second, third, …, nth column — all of them,

and a function with this property is called a Multi-linear Form.

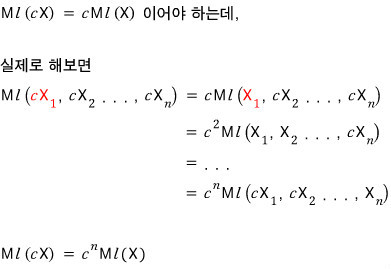

So — see why Ml isn’t actually a linear function?!?!?!? Here’s why it’s not a linear function:

Anyway, not a linear function, but it goes by the name Multi-linear Form. Sure, why not.

Apparently there’s quite a variety of Multi-linear Forms out there. Same way there are loads of linear maps L sending V to W!!!!

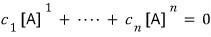

Out of all the Multi-linear Forms, the one we actually care about here is the Alternating Form.

Yeah, that name is doing a lot. lol

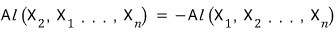

Anyway — since the Alternating Form is itself a Multi-linear Form, it inherits all the properties from above, and it tacks on one more property on top:

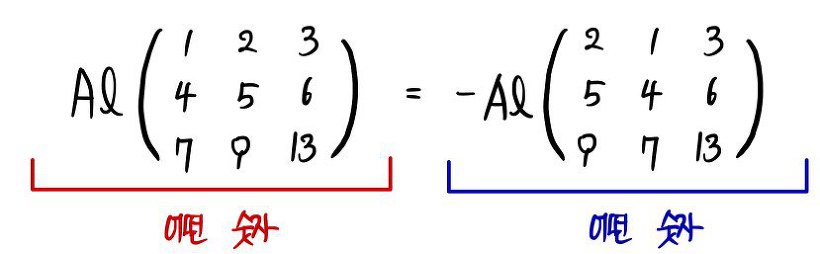

“if you swap any two of the vectors, the Al value becomes (-) of what it was before the swap.”

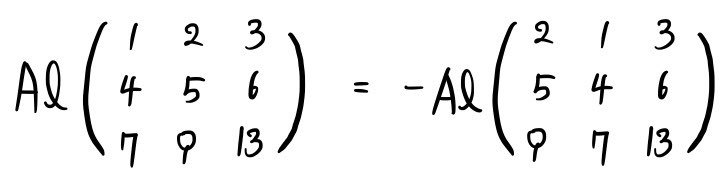

That probably sounds abstract, so here’s an example with a matrix.

Now — there are also cases where swapping two columns leaves the matrix totally unchanged, in which case Al for that matrix shouldn’t change either.

It’d be exactly a case like this.

Right????????????

For both sides of that equation to come out as the same number — what on earth could ’that number’ up there possibly be?

Yep yep yep yep. It has to be 0.

Both sides are some value, and the only way they can be equal even when one of them gets a (-) slapped on it is if both are zero.

So: when two columns of a matrix are the same, the Alternating Form value is 0!!!!!

Oh, and just to be safe — since Al is itself a Multi-linear Form, all those properties from earlier still hold, right? Let me write them out before moving on.

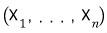

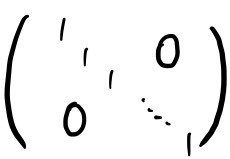

OK so. Remember when we had a linear function L, and to fully pin down what L does to anything, we just plugged the basis vectors into the map?

Apparently the same kind of logic works for Al.

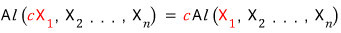

I used a 2 × 2 as the example, but the gist is: if you want to know what Al does to any matrix, you just need to figure out Al on the identity matrix, and you’ve cracked the whole thing!!!!

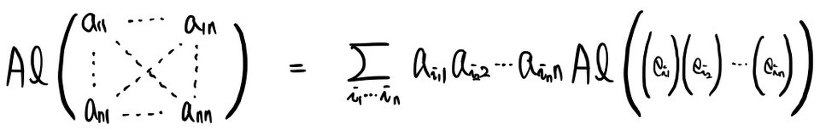

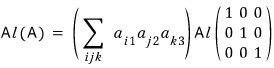

Like —

this!!!!!!

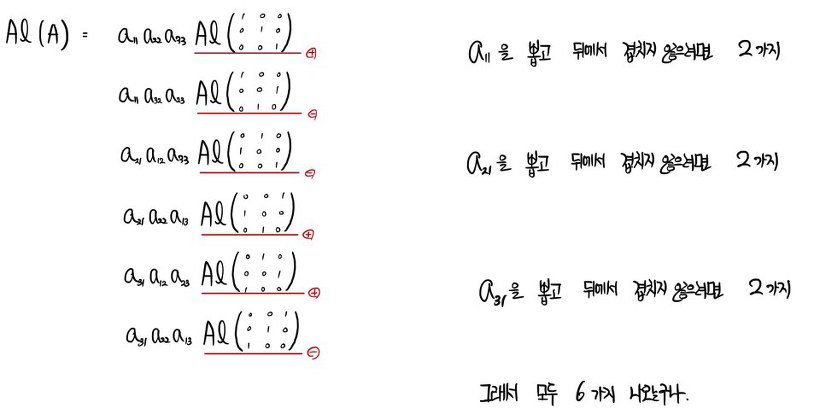

Try it yourself for a 3 × 3 matrix!!!!!!

And for a general n × n you supposedly land at the same conclusion — you just need Al on the identity matrix (mathematical induction let’s go let’s go let’s go).

So we write it like this.

Now — but since we’re going for full generality here, the numbers in

— don’t picture them as neatly going 1, 2, 3, 4, …, n in order. Picture them as the numbers 1 through n all jumbled up!!!!! (n! possibilities)

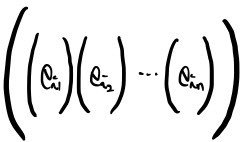

Then take the n × n matrix at the very end,

and try to massage it into the identity matrix.

To get to that nice clean identity-matrix shape,

we don’t yet know

but we’re going to need some number of swaps to get there, right????

Whether it’s 0 swaps or n swaps —

if you make that clean shape with an odd number of swaps, a (-) gets attached,

and if you make it with an even number of swaps, a (+) gets attached,

and I’ll punt on the exact count for now.

Anyway, the one value you get when you expand all that sigma stuff and add everything up —

isn’t it just a constant multiple of

?

?

So here’s the focus for the next little while:

if you just know

,

,

you know every value of Al!!!

That’s the part we’re zooming in on.

And so we call

the Determinant!!!!!!!!!!!!

(Actually — and this is a next-post thing — the Determinant is defined as the Alternating Form for which Al(I) = 1. Not the focus of this post.)

(Physics kids like me are going to be in absolute shock and horror — this is the rigorous definition of the determinant?? haha)

(We’ll dig into it more in a bit, don’t panic.)

Conclusion up front: if A is non-invertible, then Al(A) = 0.

Let me explain why.

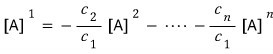

Suppose the column vectors of A are not all linearly independent. Then —

— this can be satisfied by some choice of c(1) through c(n) where they aren’t all zero.

So at least one of c(1), …, c(n) is nonzero. If c(1) is nonzero, then —

— we can also write the first column vector in this form…… and using that as our example,

we write the matrix in this form, and then use the fact that the columns aren’t linearly independent to check the Al value.

So: if the column vectors are linearly dependent, Al is zero,

and therefore if Al = 0, that means the matrix is non-invertible.

OK!!!

So we can say: if Al ≠ 0, then A’s columns are linearly independent!!!!!

And if A’s columns are linearly dependent, then Al = 0.

Now what about the converse???? That is, can we say that if Al = 0 then A is linearly dependent??????

Yeah, that’s right.

I dunno lol lol. For now let’s keep moving.

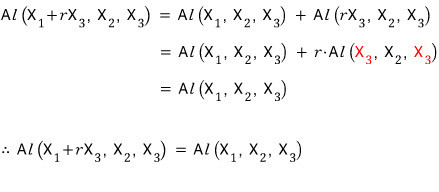

And let me mention one more property of Al.

“The Al value of a matrix where you’ve added a constant multiple of one column to another column equals the Al value of the matrix before adding.”

Isn’t that completely obvious????? (What if it’s not obvious to you…) hmmmm,,,

Got it!?!?!??!?!!?!

This plays a role later on….. we’ll meet it again when we hit the determinant.

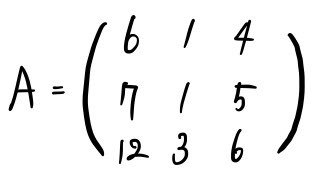

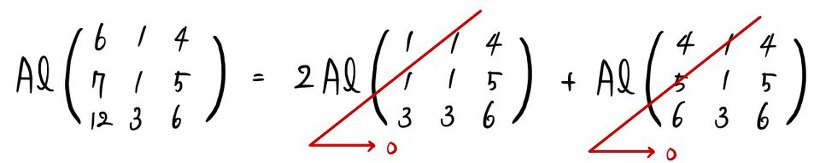

OK that’s enough of that~~~ Let me actually compute Al for a 3 × 3 matrix concretely.

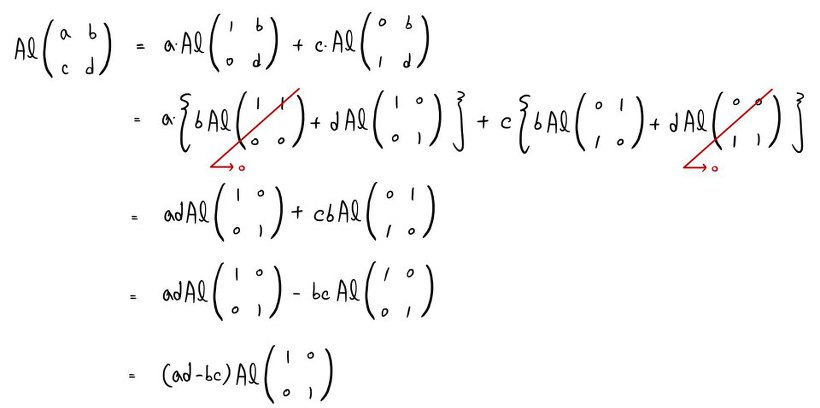

For this A, let me write out all the Al pieces we end up with.

I left off the plus + signs there.

If you sum all those values up, you get Al(A)!!!

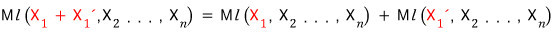

Now I want to construct

and bundle the whole thing up in one go~~

I’ll think of the column vectors as labeled with the numbers 1, 2, 3 respectively.

The number of swaps it takes to get an arbitrarily arranged 1, 2, 3 back into the order 1, 2, 3 — that’s exactly what decides whether the sign is (-) or (+), right??????

Something might be jogging your memory here.

We can express it with the epsilon symbol!?!?!

For folks who don’t know the epsilon symbol….. maybe you can pick it up from context here….. I’m just gonna roll with it lol lol lol lol. The epsilon symbol isn’t actually that hard a concept!!!!

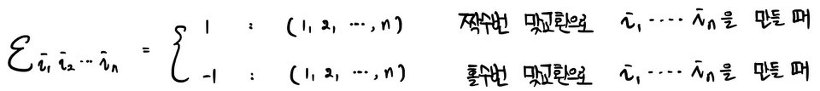

How many swaps does it take to get an arbitrarily arranged i j k into the order ijk?

If you can reach ijk in an even number of swaps,

If you can reach ijk in an odd number of swaps,

For example,

123 reaches 123 in 0 swaps.

132 reaches 123 in 1 swap, so -1.

This one also reaches 123 in 1 swap.

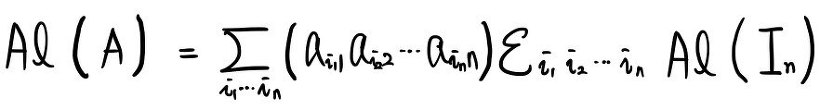

Using this epsilon symbol, we can write all those expanded Al(A) terms as a single term.

We were talking about an arbitrary 3 × 3 matrix, but if we say the same thing for an arbitrary n × n,

Are you all squared away?????????

The only thing nagging at us is whether the epsilon symbol is actually well-defined?!??!?!?!

So I think there are 3 things we need to check.

① No matter which

we start with, can we always get it into sorted order via some sequence of swaps?!?!?!

↓

Yep, absolutely true.

When we start swapping

to get it into the order 1 through n,

First swap: bring 1 all the way to the front.

Second swap: bring 2 into the second slot.

Third swap: bring 3 into the third slot.

.

.

.

.

'

Going like this, there’s no way we can’t end up at 1 through n.

② Once the permutation is fixed, is the number of swaps fixed too?!?!?!?

↓

Of course not!!! The count is not unique.

You could do an efficient swap or an inefficient swap, right?!?!?

But — there’s definitely one most-efficient way, no?

The method I wrote in ① seems like it’d be the most efficient one.

③ For the epsilon symbol to actually work, the parity (odd-or-even-ness) of the number of swaps has to be guaranteed~~~?

↓

Apparently you get the answer by thinking of swap-parity as the number of inversions.

The number of inversions = “the number of pairs where a bigger number sits in front of a smaller one.”

For example, to get the number of inversions of 7416253, let’s count the pairs where a bigger number sits in front of a smaller one:

(1,7), (1, 4), (2, 7), (2, 4), (2, 6), (3, 7), (3, 4), (3, 5), (3, 6), (4, 7), (5, 7), (5, 6), (6, 7)

Number of inversions = 13.

Once a particular permutation is given, the number of inversions is unique, right?

If the inversion count is odd, then all of the many possible swap-counts are odd; if it’s even, all of them are even — that’s how you can think of it.

That is, the epsilon symbol behaves itself just fine, no malfunctioning.

Originally written in Korean on my Naver blog (2016-01). Translated to English for gdpark.blog.

Comments

Discussion happens via GitHub Discussions. You'll need a GitHub account to comment.