Similar Matrices

Before we can actually crunch a determinant, we load up on similar matrices — plus why det A = 0 is the snap-decision test for invertibility.

OK so. Now we’re really getting into determinants.

Wait — no, to be precise, this post is about laying down the background knowledge before we go calculate a determinant for real.

So first, let me hit you with the definition.

How am I going to hit you with it? Well — like I half-spoiled earlier:

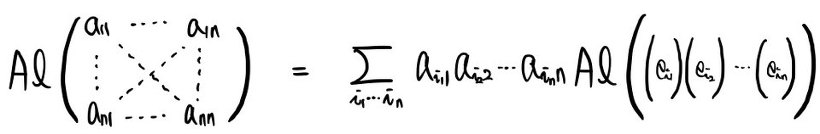

The alternating form that satisfies these conditions is what we define as the determinant.

Note:

So,

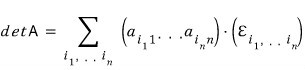

det A is

That’s the thing.
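The defining conditions live in the images above, so here's a minimal Python sketch that assumes the 2×2 case. There, the determinant of the matrix with columns (a, c) and (b, d) is ad − bc, which is the formula this post uses later. The helper `det2` and the sample vectors are hypothetical, just for illustration. The sketch checks numerically that this formula behaves like an alternating form: it gives 1 on the identity, flips sign when the two columns are swapped, vanishes when the columns are equal, and is linear in each column.

```python
def det2(u, v):
    """Determinant of the 2x2 matrix whose columns are u = (a, c) and v = (b, d): ad - bc."""
    a, c = u
    b, d = v
    return a * d - b * c


e1, e2 = (1, 0), (0, 1)
u, v, w = (3, 5), (2, -1), (4, 7)

# Normalization: the identity matrix (columns e1, e2) has determinant 1.
print(det2(e1, e2))               # 1
# Alternating: swapping the two columns flips the sign ...
print(det2(u, v), det2(v, u))     # -13 13
# ... and a matrix with two equal columns has determinant 0.
print(det2(u, u))                 # 0
# Linear in each column: D(u + 2w, v) = D(u, v) + 2 D(w, v).
u_plus_2w = (u[0] + 2 * w[0], u[1] + 2 * w[1])
print(det2(u_plus_2w, v) == det2(u, v) + 2 * det2(w, v))   # True
```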

OK, let me organize the properties of this determinant guy that you can more or less read straight off the definition.

(These hold for n × n in general, but for the sake of explanation let me show them on a 3 × 3.)

I’ll skip writing the proof out. (It’d get way too long.)

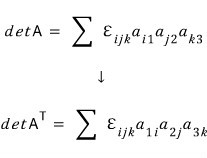

And the second property:

(Skipping that proof too.)

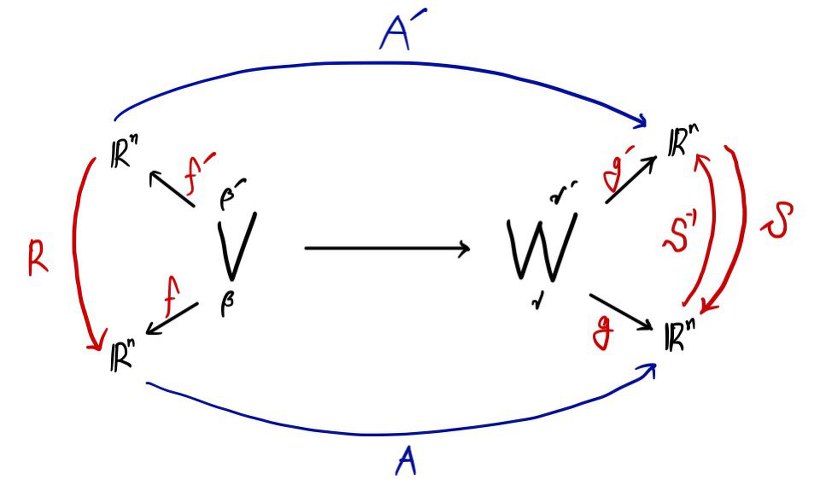

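So if you organize the determinant’s properties this way, and A is an invertible matrix, then $AA^{-1} = I$ together with $\det(AB) = \det A \det B$ gives

$\det A \cdot \det(A^{-1}) = \det I = 1,$

which means $\det A$ can never be 0 (and $\det(A^{-1}) = 1/\det A$).

That’s what it says!!!!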

So so so so:

A is non-invertible ↔ det A = 0

A is invertible ↔ det A ≠ 0

Both of these become legit, working statements.

Whether the determinant is zero or not zero — snap!!!!!!!! — you can decide right then and there whether the matrix is invertible or not.
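Here's a minimal sketch of that snap test for the 2×2 case. It uses ad − bc and the standard 2×2 inverse formula with exact `fractions.Fraction` arithmetic. The helper names and sample matrices are made up for illustration. It also checks that det A · det(A⁻¹) = 1 for an invertible matrix.

```python
from fractions import Fraction


def det(m):
    """Determinant of a 2x2 matrix [[a, b], [c, d]]: ad - bc."""
    (a, b), (c, d) = m
    return a * d - b * c


def is_invertible(m):
    # The snap test: a square matrix is invertible exactly when det != 0.
    return det(m) != 0


def inverse(m):
    """Inverse of a 2x2 matrix via (1/det) * [[d, -b], [-c, a]], in exact fractions."""
    (a, b), (c, d) = m
    D = Fraction(det(m))
    if D == 0:
        raise ValueError("det = 0, so the matrix is not invertible")
    return [[d / D, -b / D], [-c / D, a / D]]


A = [[4, 1], [2, 3]]   # det = 12 - 2 = 10
S = [[1, 2], [2, 4]]   # second row is twice the first, det = 0

print(is_invertible(A), is_invertible(S))   # True False
print(det(inverse(A)))                      # 1/10
print(det(A) * det(inverse(A)))             # 1
```

Calling `inverse(S)` raises `ValueError`, which is the code version of "det = 0, so no inverse."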

Didn’t I say this post was about laying down background knowledge???

Background knowledge for what, you ask?

I said: background knowledge for charging toward “let’s find — snap!!!!!! — the exact value of the determinant!!!!!!”

What I’m saying is, we need to load up the concept of similar matrices.

So — let’s go find out what similar matrices are.

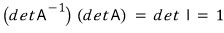

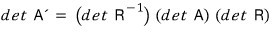

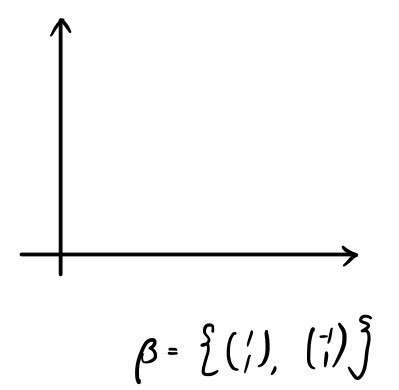

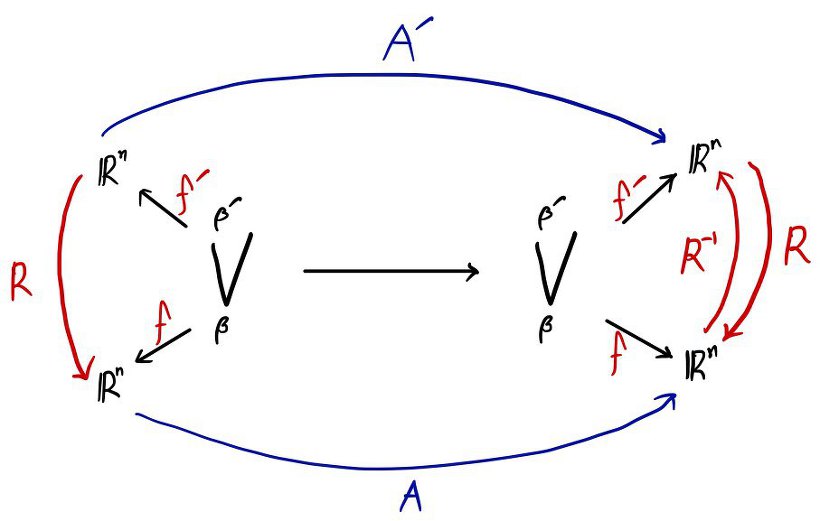

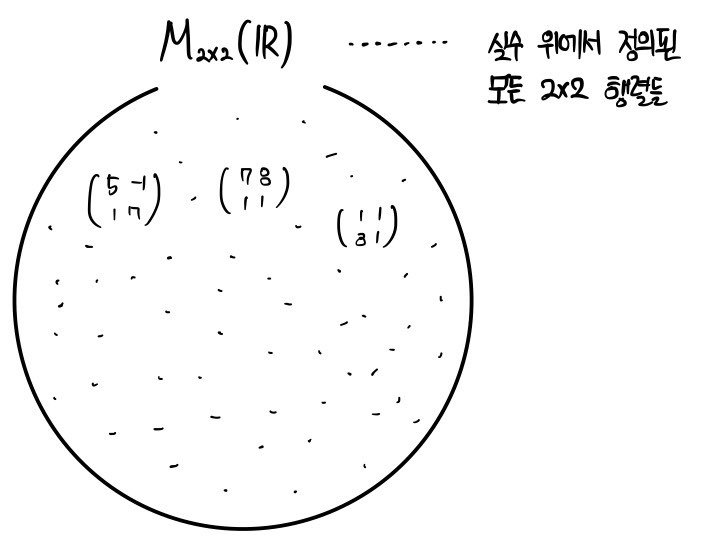

First, let me summon this figure one more time.

And this is the summary we organized earlier!!!

One more thing we absolutely must not forget!!! Let me copy-paste from an earlier post.

In post #8, here’s how I wrapped up at the end:

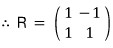

The way to build the coordinate transformation matrix R is to gather the basis vectors of the target space as column vectors, one by one.
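To make the column-stacking rule concrete, here's a small sketch with a hypothetical basis b1 = (1, 0), b2 = (1, 1) of the plane. `matrix_from_columns` and `matvec` are illustrative helpers, not anything from the original post. Which direction R counts as going "to the target space" depends on the setup from post #8. This sketch only shows what the stacking itself does: R sends the k-th standard unit vector to the k-th basis vector.

```python
def matrix_from_columns(*cols):
    """Place the given vectors side by side as columns; returns the matrix as a list of rows."""
    n = len(cols[0])
    return [[col[i] for col in cols] for i in range(n)]


def matvec(m, x):
    """Matrix-vector product."""
    return [sum(m[i][j] * x[j] for j in range(len(x))) for i in range(len(m))]


b1, b2 = [1, 0], [1, 1]            # hypothetical basis vectors of the plane
R = matrix_from_columns(b1, b2)
print(R)                            # [[1, 1], [0, 1]]

# R sends the k-th standard unit vector to the k-th basis vector ...
print(matvec(R, [1, 0]), matvec(R, [0, 1]))   # [1, 0] [1, 1]
# ... so it turns the coordinates (2, 3) w.r.t. (b1, b2) into 2*b1 + 3*b2.
print(matvec(R, [2, 3]))                       # [5, 3]
```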

But I said we’d be loading up similar matrices here, right????

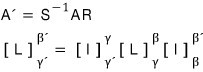

Let’s slap on a few more constraints and look at a special case.

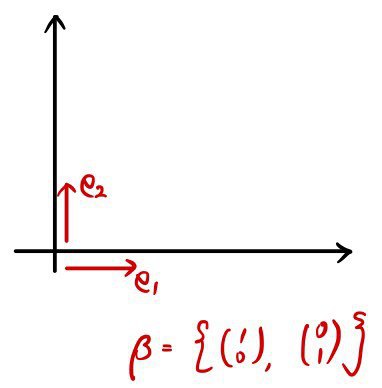

The special case I want to look at — with these added constraints — is:

Catch this: the vector space on the right is V,

and the basis is not gamma — it’s beta!!!!!!!!

Say there’s a linear map L that sends the vector space V back to itself.

Let’s understand it the same way as before.

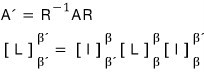

There were 2 ways to express this A'.

* One thing to especially keep in mind:

please, please keep β→β, β’→β’ in your head!!!!

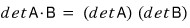

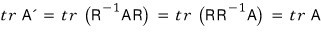

Now let’s take the determinant of both sides of the first equation.

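You can’t freely reorder the matrices in a product. However, taking the determinant turns each matrix into “some number” (because $\det(XY) = \det X \det Y$), and numbers can be multiplied in any order. The factors $\det R$ and $\det(R^{-1})$ multiply to $\det I = 1$ and cancel, which leaves $\det A' = \det A$.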

Huh??????????? What is this?????????lol lol

WHAT IS THIS!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!

Now now now now now now — please, don’t get too mad.

We’re in the middle of loading up similar matrices right now, so please don’t get mad.

Looking at that formula, it doesn’t quite click yet, does it?????

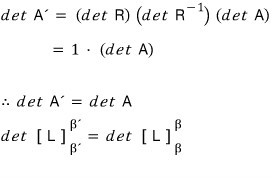

OK let me give a concrete example.

Never, ever forget this:

The way to build the coordinate transformation matrix R is to gather the basis vectors of the target space as column vectors, one by one.

Here we go!!!

The linear map that sends from the space defined by this basis to the space defined by this basis —

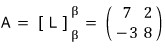

let’s say, for example, it’s

this.

The determinant of this matrix is ad − bc = 56 + 6 = 72.

Then,

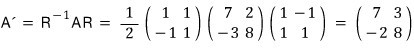

there’s a linear map A’ that sends from the space defined by this basis to the space defined by this basis,

and that linear map is in this relationship —

— let’s say it’s in this relationship.

Meaning, A’ can be written in terms of A.

Now now now now now — how did we build the matrix R??????

We said: stack the basis vectors as column vectors, one by one.

And

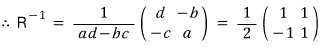

I’ll also find the inverse of R, which we need for the calculation.

So,

Now — what’s the determinant of this A’!?!?!?!?!!!!

Yep… it’s 56 + 6 = 72.

Right???

The determinant of A, and the determinant of A’ (which we built using the coordinate transformation matrix) — they’re the same.
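The actual matrices from this example live in the images, so here's a sketch with stand-ins. A is a hypothetical [[7, 2], [−3, 8]], picked so that ad = 56 and bc = −6 like the numbers above, which gives ad − bc = 62. R is a hypothetical matrix built from the basis (1, 0), (1, 1). The sketch uses the convention A' = R⁻¹AR and also checks RAR⁻¹, since the determinant doesn't care which side the inverse sits on.

```python
from fractions import Fraction


def matmul(X, Y):
    """Matrix product X @ Y for matrices stored as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


def det2(m):
    (a, b), (c, d) = m
    return a * d - b * c


def inv2(m):
    """Exact inverse of an invertible 2x2 matrix."""
    (a, b), (c, d) = m
    D = Fraction(det2(m))
    return [[d / D, -b / D], [-c / D, a / D]]


A = [[7, 2], [-3, 8]]   # hypothetical: ad = 56, bc = -6
R = [[1, 1], [0, 1]]    # hypothetical basis (1, 0), (1, 1) stacked as columns

A_prime = matmul(matmul(inv2(R), A), R)        # A' = R^{-1} A R
print(det2(A))                                  # 62
print(A_prime == [[10, 4], [-3, 5]])            # True
print(det2(A_prime))                            # 62

# The other convention, R A R^{-1}, lands in the same place.
print(det2(matmul(matmul(R, A), inv2(R))))      # 62
```

A' looks nothing like A entry by entry, but the determinant survives the change of basis.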

OK then.

Let’s get into the definition of similar matrices.

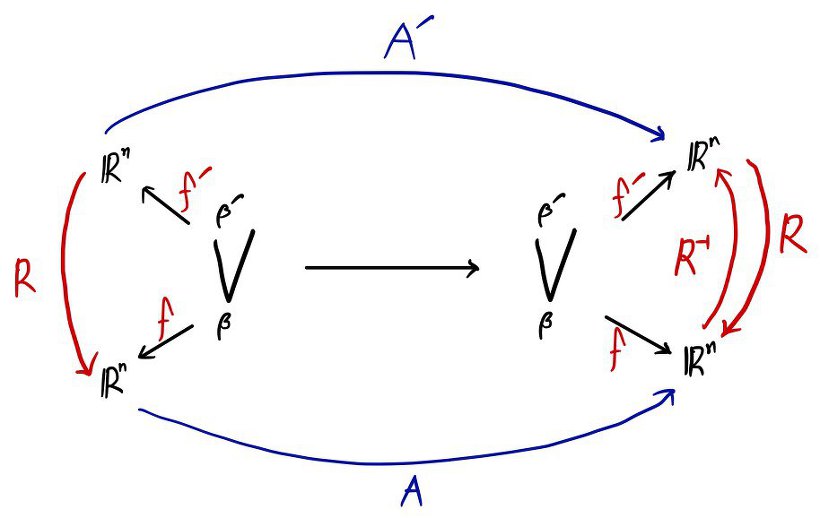

If a square matrix A’ satisfies

$A' = R^{-1} A R$ for some invertible matrix $R$,

then we write

$A \sim A'$,

and this means “A and A’ are similar.”

Property 1: The determinants of similar matrices are equal.

Property 2 (we’ll come back to this later): Their traces are also equal.

Let me organize a few more properties.

Properties of the similarity relation.

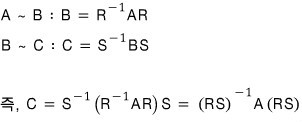

A ~ B : A and B are in a similarity relation.

Then,

① A ~ B → B ~ A

If A is similar to B, then B is also similar to A. (In other words, the relation is symmetric: it’s a side-by-side relationship, and neither matrix ranks above the other.)

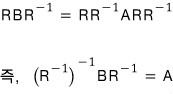

Proof.

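A ~ B means $B = R^{-1} A R$ for some invertible matrix $R$,

and it’s totally fine to multiply both sides of an equation by the same matrix, as long as you multiply on the same side.

Multiplying by $R$ on the left and by $R^{-1}$ on the right gives $R B R^{-1} = A$, that is, $A = (R^{-1})^{-1} B \, R^{-1}$. So B ~ A, with $R^{-1}$ playing the role of R.

Make sense?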
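Here's a numerical sanity check of the symmetry argument. It doesn't replace the proof, and it assumes the convention B = R⁻¹AR. The matrices are arbitrary examples, and `conjugate` is a hypothetical helper. S = R⁻¹ is the matrix that takes B back to A.

```python
from fractions import Fraction


def matmul(X, Y):
    """Matrix product X @ Y for matrices stored as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


def inv2(m):
    """Exact inverse of an invertible 2x2 matrix."""
    (a, b), (c, d) = m
    D = Fraction(a * d - b * c)
    return [[d / D, -b / D], [-c / D, a / D]]


def conjugate(M, P):
    """P^{-1} M P: the matrix similar to M via P."""
    return matmul(matmul(inv2(P), M), P)


A = [[1, 2], [3, 4]]
R = [[2, 1], [1, 1]]      # det = 1, so R is invertible
B = conjugate(A, R)       # A ~ B

S = inv2(R)               # S = R^{-1} takes B back to A
print(conjugate(B, S) == A)   # True, so B ~ A
```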

② If A ~ B and B ~ C, then A ~ C.

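Let me prove this too. If $B = R^{-1} A R$ and $C = S^{-1} B S$, then

$C = S^{-1} R^{-1} A R S = (RS)^{-1} A (RS),$

and $RS$ is invertible, so A ~ C.

Proof done!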

Easy, right!!!
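Here's the same kind of sanity check for transitivity. It uses arbitrary example matrices and the convention B = R⁻¹AR, C = S⁻¹BS, with the hypothetical helper `conjugate` as before. The product RS does the whole job in one step.

```python
from fractions import Fraction


def matmul(X, Y):
    """Matrix product X @ Y for matrices stored as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


def inv2(m):
    """Exact inverse of an invertible 2x2 matrix."""
    (a, b), (c, d) = m
    D = Fraction(a * d - b * c)
    return [[d / D, -b / D], [-c / D, a / D]]


def conjugate(M, P):
    """P^{-1} M P: the matrix similar to M via P."""
    return matmul(matmul(inv2(P), M), P)


A = [[1, 2], [3, 4]]
R = [[2, 1], [1, 1]]
S = [[1, 3], [0, 1]]

B = conjugate(A, R)            # A ~ B
C = conjugate(B, S)            # B ~ C
RS = matmul(R, S)
print(conjugate(A, RS) == C)   # True, so A ~ C via RS
```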

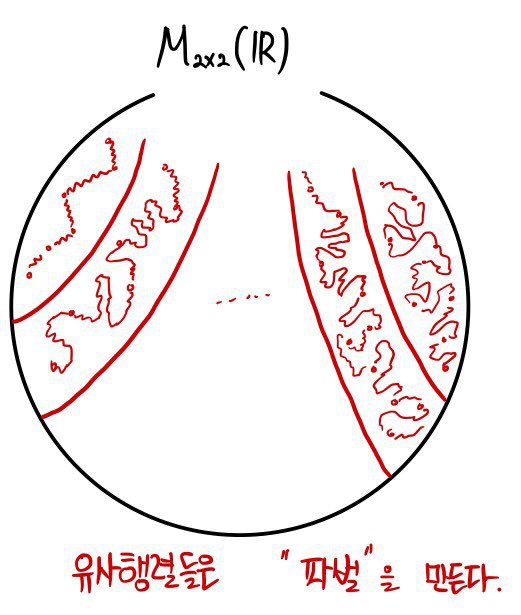

Let me sketch what we just did as a bigger picture.

↓

OK now — let me summarize all this in the language of determinants.

First:

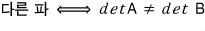

if matrices belong to different factions (similarity classes), their determinants may well be different.

And matrices whose determinants don’t match will, without exception, belong to different factions.

OK then, by the same logic — if matrices belong to the same faction, the two will naturally have the same determinant.

OK OK???

BUT!!!!!!!!!!!!!!!!!!!! Can we say that if the determinants are equal, they belong to the same faction!?!??!!?!!!

Turns out — nope.

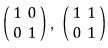

For example:

These two matrices both have determinant 1, but they are not similar.

Meaning: there’s no invertible matrix R such that multiplying one of them by $R^{-1}$ on the left and $R$ on the right gives the other.
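The two matrices from the image aren't in the text, so here's a classic stand-in pair. This is an assumption, not necessarily the pair in the image: the identity I and J = [[1, 1], [0, 1]], both with determinant 1. The real argument is one line: R⁻¹IR = R⁻¹R = I for every invertible R, so the only matrix similar to I is I itself, and J ≠ I. The sketch just spot-checks that claim over every invertible integer R with entries in −2..2.

```python
from fractions import Fraction
from itertools import product


def matmul(X, Y):
    """Matrix product X @ Y for matrices stored as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


def det2(m):
    (a, b), (c, d) = m
    return a * d - b * c


def inv2(m):
    """Exact inverse of an invertible 2x2 matrix."""
    (a, b), (c, d) = m
    D = Fraction(det2(m))
    return [[d / D, -b / D], [-c / D, a / D]]


def conjugate(M, P):
    """P^{-1} M P: the matrix similar to M via P."""
    return matmul(matmul(inv2(P), M), P)


I = [[1, 0], [0, 1]]
J = [[1, 1], [0, 1]]      # hypothetical stand-in pair
print(det2(I), det2(J))   # 1 1

# Every invertible R with entries in -2..2.
Rs = [[[a, b], [c, d]] for a, b, c, d in product(range(-2, 3), repeat=4)
      if a * d - b * c != 0]
print(all(conjugate(I, R) == I for R in Rs))   # True: conjugating I never leaves I
print(any(conjugate(I, R) == J for R in Rs))   # False: so J is never reached
```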

And let’s also look at the trace.

Similar matrices have the same determinant, and I said earlier the trace is also the same, right??????

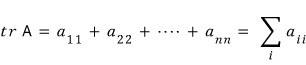

What’s the trace, you ask?

The trace is written Tr,

and it means “the sum of all the diagonal elements.”

That is,

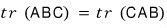

What are the properties of the trace?

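You can’t shuffle the order around arbitrarily, but you can rotate it cyclically. For a product of two matrices, that means the order doesn’t matter at all:

$\mathrm{Tr}(AB) = \mathrm{Tr}(BA), \qquad \mathrm{Tr}(ABC) = \mathrm{Tr}(BCA) = \mathrm{Tr}(CAB).$

So, for similar matrices,

$\mathrm{Tr}(R^{-1} A R) = \mathrm{Tr}(A R R^{-1}) = \mathrm{Tr}(A).$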
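Here's a quick numeric check of the trace facts, using arbitrary example matrices and hypothetical helper names. It shows that Tr(AB) = Tr(BA) and that all cyclic rotations of ABC share a trace. It also shows that a non-cyclic reordering (ACB) can change the trace, and that Tr(R⁻¹AR) = Tr(A).

```python
from fractions import Fraction


def matmul(X, Y):
    """Matrix product X @ Y for matrices stored as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


def trace(m):
    """Sum of the diagonal entries."""
    return sum(m[i][i] for i in range(len(m)))


def inv2(m):
    """Exact inverse of an invertible 2x2 matrix."""
    (a, b), (c, d) = m
    D = Fraction(a * d - b * c)
    return [[d / D, -b / D], [-c / D, a / D]]


A = [[1, 2], [3, 4]]
B = [[0, 1], [1, 0]]
C = [[1, 0], [0, 2]]
R = [[2, 1], [1, 1]]

print(trace(A))                                   # 5
print(trace(matmul(A, B)), trace(matmul(B, A)))   # 5 5
# Cyclic rotations of ABC all give the same trace ...
print(trace(matmul(matmul(A, B), C)),
      trace(matmul(matmul(B, C), A)),
      trace(matmul(matmul(C, A), B)))             # 8 8 8
# ... but a non-cyclic reordering (ACB) can change it.
print(trace(matmul(matmul(A, C), B)))             # 7
# Similar matrices share a trace: Tr(R^{-1} A R) = Tr(A).
print(trace(matmul(matmul(inv2(R), A), R)))       # 5
```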

OK, having gotten this far… the post has gotten long again… ugh… ;_;

“Finding — snap!!! — the determinant quantitatively” — that one’s for the next post.

Originally written in Korean on my Naver blog (2016-01). Translated to English for gdpark.blog.
