Block Matrices

A casual dive into diagonalization — what it means to turn a matrix diagonal, why that's such a big deal in physics, and how blocking gets the whole thing started.

‘Diagonalization’: yet another idea that shows up everywhere in physics.

It’s an absolutely essential idea in classical mechanics, and especially in quantum mechanics…!!!!

Before we dive in, let me do a tiny recap of the previous post, then we’ll move on.

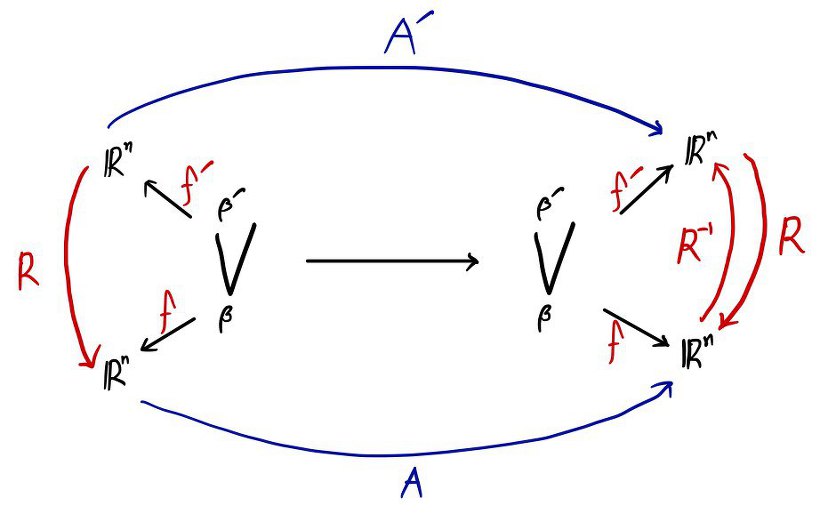

On one side an invertible matrix $R$, and on the other side its inverse: put $A$ between them, multiply it all out, and you get $A'$.

We said $A'$ is similar to $A$, right?

And we covered stuff like this about the similarity relation.

(~ : the tilde means “is similar to”!!!)

$A \sim B$ $\Leftrightarrow$ $B \sim A$

$A \sim B$, $B \sim C$ $\Rightarrow$ $A \sim C$

So the property of any clique connected by these tildes is:

If $A$ and $B$ are in the same clique ( = if they’re similar) → $\det A = \det B$ and $\operatorname{tr} A = \operatorname{tr} B$.

(tr : trace, the sum of the diagonal entries.)
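Here's a quick sanity check of that claim, as a minimal Python sketch. The matrices `A` and `R` are made up for illustration (they are not the ones from the previous post), and the convention $A' = R^{-1} A R$ is an assumption.

```python
from fractions import Fraction


def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]


def det2(M):
    """Determinant of a 2x2 matrix."""
    return M[0][0] * M[1][1] - M[0][1] * M[1][0]


def inv2(M):
    """Inverse of a 2x2 matrix with nonzero determinant (exact arithmetic)."""
    d = Fraction(det2(M))
    return [[M[1][1] / d, -M[0][1] / d],
            [-M[1][0] / d, M[0][0] / d]]


def trace(M):
    """Sum of the diagonal entries."""
    return sum(M[i][i] for i in range(len(M)))


# Hypothetical example matrices, chosen only for this sketch.
A = [[1, 2], [3, 4]]
R = [[2, 1], [1, 1]]  # det R = 1, so R is invertible

A_prime = matmul(matmul(inv2(R), A), R)  # assumed convention: A' = R^{-1} A R

print("det:", det2(A), det2(A_prime))
print("tr: ", trace(A), trace(A_prime))
```

`A_prime` looks nothing like `A` entry by entry, but both determinants come out to $-2$ and both traces to $5$.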

And the thing to be careful about — the converse does not hold.

Meaning: just because some matrix $T$ and some matrix $Y$ have the same determinant (or even the same determinant and the same trace), you cannot turn around and say they’re similar!!! That is wrong!!!

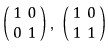

For example,

these two have the same determinant, but they are not similar~~~
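In case the figure doesn't load, here is a standard pair that does the job (it may not be the same pair as in the figure):

$$I = \begin{pmatrix} 1 & 0 \\ 0 & 1 \end{pmatrix}, \qquad J = \begin{pmatrix} 1 & 1 \\ 0 & 1 \end{pmatrix}$$

Both have determinant $1$ (and even the same trace, $2$), but $R^{-1} I R = I$ for every invertible $R$, so the only matrix similar to $I$ is $I$ itself, and $J$ is not it.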

OK, let’s keep moving.

As you can tell from the title, today’s topic is ‘diagonalization’.

Which means the matrix we’re going to be staring at is the diagonal matrix.

As the name suggests (sorry if it doesn’t suggest anything to you lol)

a matrix where every entry off the diagonal is zero, that’s a diagonal matrix. (The diagonal entries themselves can be anything, zeros included.)

(Remember way back when, when you multiplied $A$ by $\operatorname{adj} A$ and the result came out diagonal? Yeah, that.)

Way, way~~~~ back,

we took a matrix like this and

multiplied it by this,

and this,

and got this out the other side, didn’t we????????????????

Here’s what I’m trying to say.

Among all the matrices similar to your matrix — which you can hop between freely with a change of basis —

there might be a diagonal one.

Diagonal matrices have a ton of advantages over your run-of-the-mill matrix, and a bunch of distinctive properties on top of that,

so the move is: take an ordinary matrix and do an appropriate change of basis to turn it into a diagonal matrix.

(That whole process of converting it into a diagonal matrix is what ‘diagonalization’ means.)
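To make that concrete, here is a small sketch with a hypothetical $2 \times 2$ matrix (not one from the post). The columns of `R` are picked by hand, and the convention $A' = R^{-1} A R$ is an assumption. How to find such columns in general is a later topic.

```python
from fractions import Fraction


def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]


def inv2(M):
    """Inverse of a 2x2 matrix with nonzero determinant (exact arithmetic)."""
    d = Fraction(M[0][0] * M[1][1] - M[0][1] * M[1][0])
    return [[M[1][1] / d, -M[0][1] / d],
            [-M[1][0] / d, M[0][0] / d]]


# Hypothetical matrix to diagonalize.
A = [[2, 1],
     [1, 2]]

# Hand-picked change of basis: the new basis vectors (1, 1) and (1, -1)
# are written as columns.
R = [[1, 1],
     [1, -1]]

A_prime = matmul(matmul(inv2(R), A), R)

# Every off-diagonal entry of A' should be zero.
is_diagonal = all(A_prime[i][j] == 0
                  for i in range(2) for j in range(2) if i != j)
print(A_prime, is_diagonal)
```

In the new basis the matrix is just $\operatorname{diag}(3, 1)$: all the mixing between coordinates is gone.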

OK, now let me drop the big question on you!!!

Our core question: is every matrix diagonalizable!??!?!?!?!!!

Let’s take the first step!

First!!!!!!!!

Blocking

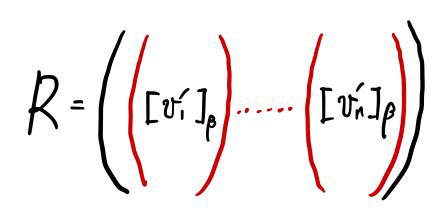

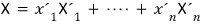

The matrix $R$ that pulls off a change of basis —

we said you get it by writing the $\beta'$ vectors as column vectors in the $\beta$ basis and stacking them up in order, right?!?!!?

You can call this a “coordinate change matrix” or a “basis change matrix”.

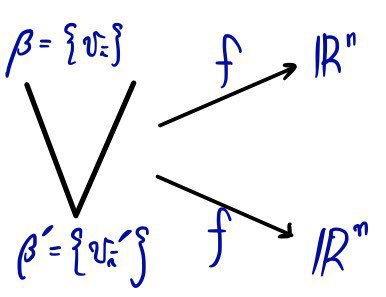

OK, to make the thinking easier,

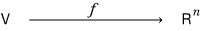

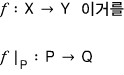

let’s say the $f$ in this relation is the identity function.

That is, $V = \mathbb{R}^n$.

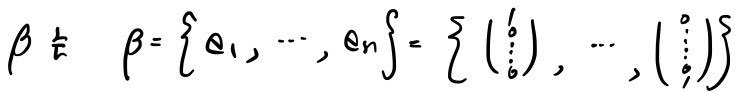

And for $\beta$,

let’s go with this,

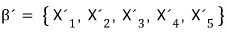

and for $\beta'$

let’s go with this.

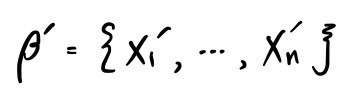

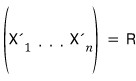

And let’s just bundle all of these up and talk about $R$ again.

(Stacked as column vectors!!!!!! heh heh heh)

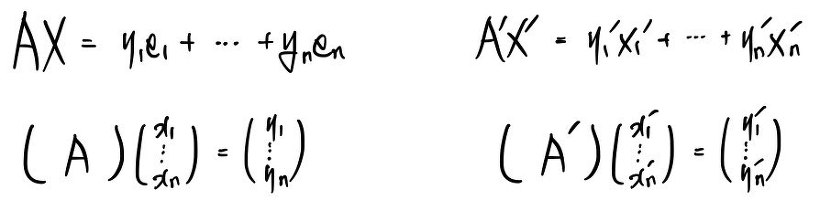

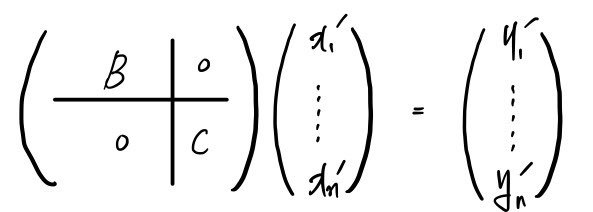

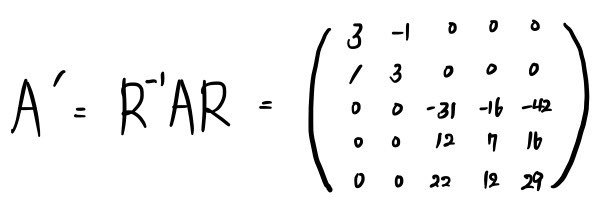

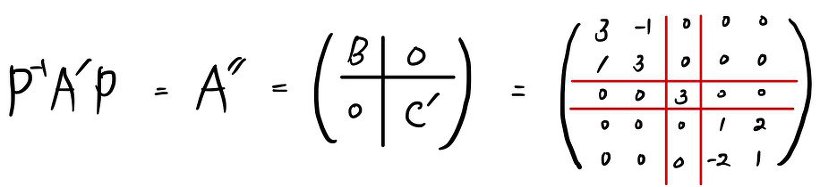

And let’s say when you sandwich $A$ between this $R$ and its inverse, you get $A'$.

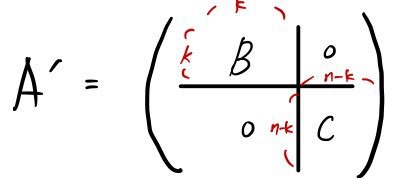

And then suppose the result comes out as

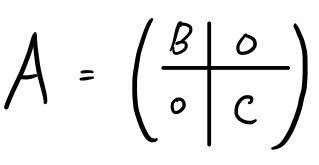

a block-diagonal form like this.

(“Bro, what kind of an assumption is that?!” — yeah I totally get the reaction. Just bear with me a sec, allow me this one.)

OK, that’s the rough strategy laid out.

Let’s start.

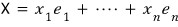

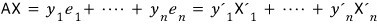

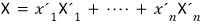

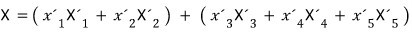

An arbitrary element $X$ in vector space $V$ is

let’s say this.

But since $X$ lives in $V$, it can also be expressed in the other basis $\beta'$ of $V$,

so another expression for $X$ is

we can write it like this too, right?!?!?!

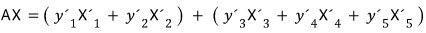

Now let’s take a vector like that and shoot it through a linear map $L$.

Let’s say $L$ acts like this,

with coefficients fixed like that.

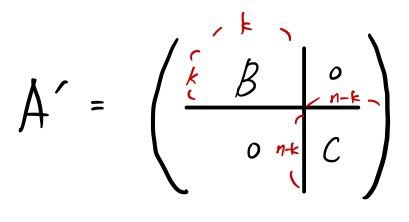

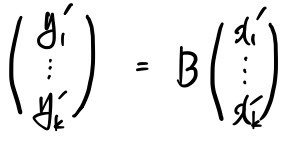

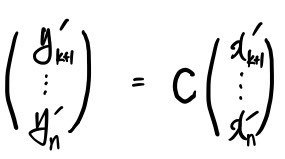

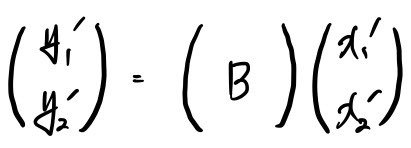

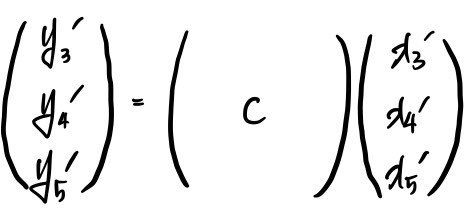

If we write this in matrix form,

what we just wrote with the formula above is exactly what this is saying.

And we had our assumption, right?!

we assumed the result is busted into blocks like this,

so given that assumption,

and given that $B$ is $k \times k$ and $C$ is $(n-k) \times (n-k)$,

it’s fine to split it and draw it like in the figure below.

OK now let’s look at what this means. What’s the significance?

No matter which $X$ in $V$ you pick,

it can be expressed in basis $\beta$ or in basis $\beta'$ — even an elementary schooler gets that part,

and the statement above is

captured by this equation, right?

Now over here

we’ll briefly look at these elements!!!!!! That is, we’re going to look at the elements of $\beta'$!

The $X$ in

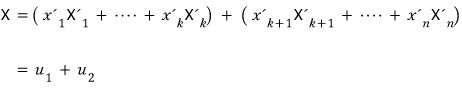

is written like this, and let’s split it into two pieces — bam!!!!

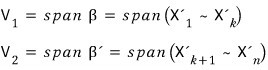

This is saying that any $X$ in $V$ can be written as the sum of two vectors: one a linear combination of the first $k$ basis vectors (numbers $1$ through $k$), the other a linear combination of the remaining ones (numbers $k+1$ through $n$).

And the way to write it as $u^{(1)} + u^{(2)}$ is unique!!!!
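A tiny sketch of that split in Python, assuming $V = \mathbb{R}^3$, $k = 2$, and a made-up basis $\beta'$ (every name and number here is illustrative, not taken from the figures).

```python
# Hypothetical basis beta' of R^3: the first k = 2 vectors span one piece,
# the remaining vector spans the other.
b1, b2, b3 = (1, 0, 0), (1, 1, 0), (1, 1, 1)
basis = [b1, b2, b3]
k = 2

# R has the beta' vectors as its columns; R_inv was worked out by hand.
R = [[1, 1, 1],
     [0, 1, 1],
     [0, 0, 1]]
R_inv = [[1, -1, 0],
         [0, 1, -1],
         [0, 0, 1]]


def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(M[i][j] * v[j] for j in range(len(v))) for i in range(len(M))]


def combine(coeffs, vectors):
    """Linear combination sum_i coeffs[i] * vectors[i]."""
    n = len(vectors[0])
    return [sum(c * v[j] for c, v in zip(coeffs, vectors)) for j in range(n)]


X = [3, 2, 1]
coords = matvec(R_inv, X)          # coordinates of X in the basis beta'
assert matvec(R, coords) == X      # sanity check on the hand-made inverse

u1 = combine(coords[:k], basis[:k])  # the part built from b1, b2
u2 = combine(coords[k:], basis[k:])  # the part built from b3
print(coords, u1, u2)
```

The coordinates of $X$ in a basis are unique, and that is exactly why the pair $u^{(1)}, u^{(2)}$ is unique too.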

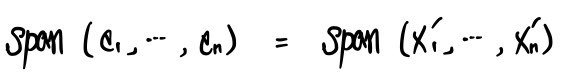

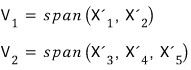

The space you get by spanning

(which gave us $u^{(1)}$),

and the space you get by spanning

(which gave us $u^{(2)}$) —

if we name them like this,

then $V$ is said to be

the direct sum of these!!!!

Direct sum isn’t anything scary —

if you split a 3D vector into $\hat{i}, \hat{j}, \hat{k}$, the spans of each give you

the $x$-axis, $y$-axis, $z$-axis, right????? Direct-sum those axes (spaces) and you get 3D space back… it’s basically saying that.

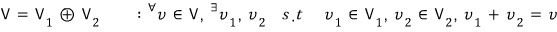

Mathematically, direct sum is
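For reference, the standard textbook definition for two subspaces, with $W_1$ and $W_2$ as assumed names (the figure may label them differently):

$$V = W_1 \oplus W_2 \iff V = W_1 + W_2 \ \text{ and } \ W_1 \cap W_2 = \{0\}$$

Equivalently, every $X$ in $V$ can be written as $u^{(1)} + u^{(2)}$ with $u^{(1)} \in W_1$ and $u^{(2)} \in W_2$ in exactly one way.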

※ Heads up, heads up ※

But — there are lots of ways to split a big space into smaller spaces as a direct sum!!!

Easy example: you could split 3D space into 3 subspaces (the $x$-axis, $y$-axis, $z$-axis),

or you could split it into 2 subspaces, like the $xy$-plane and the $z$-axis!!!

Once you’ve fixed the split, the way of writing a vector is unique — that’s the point!!!!

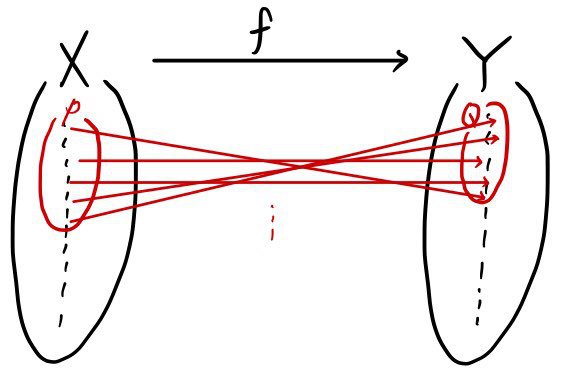

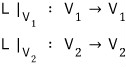

And one more piece of notation!!!!

When we only want to look at $f$ on a subset $P$ of $X$, with its outputs landing in a subset $Q$ of $Y$,

we write it like this, and the meaning is:

$f$ is still $f$, but we mean $f$ restricted to $P$. Say it that way and it clicks.

By convention it’s written

like this.

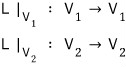

The reason we picked up this notation is —

if we rewrite the same situation as before using this notation,

with the meaning of restricting the linear map to each subspace,

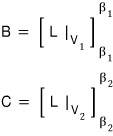

we can say: what the matrices $B$ and $C$ inside $A'$ really are is expressed exactly with the notation above.
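Written out as a formula (a sketch with assumed names: $W_1$ and $W_2$ for the two spans, $\beta'_1$ and $\beta'_2$ for the two halves of $\beta'$; the figures may use different letters):

$$A' = \begin{pmatrix} B & 0 \\ 0 & C \end{pmatrix}, \qquad B = [\,L|_{W_1}\,]_{\beta'_1}, \qquad C = [\,L|_{W_2}\,]_{\beta'_2}$$

The zero blocks are the whole point: they say $L$ sends $W_1$ into $W_1$ and $W_2$ into $W_2$, so each restriction is a linear map on its own little space.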

So from here on, the main concern is

“Ah, so then how do we split $V$?!?!?!?!” — let’s put our attention there…

(How do we split off the basis vectors from the set $\beta$?!?!?!?!)

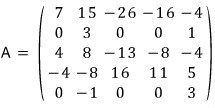

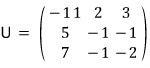

Let’s keep the discussion going with this concrete $A$.

We’re going to find an appropriate basis for this $A$ and convert it to $A'$.

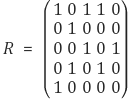

We gathered these basis vectors into a matrix and built the coordinate change matrix $R$.

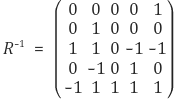

And to make $A'$ we also need the inverse of $R$, so let’s go ahead and make that too.

(No need to spiral with “wait, how on earth did this come out like that!?!??!?!!!!!!!!!!!!!!”

The method for making it is something we’ll learn later.)

So,

you can see it’s been broken into blocks of the $B$, $C$ form, right?!?!?!?

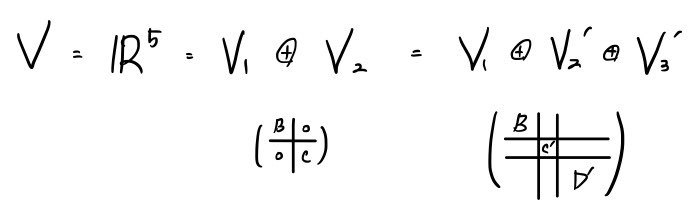

That means $V$, our 5-dimensional space, has been split into

and

If we shoot an $X$ like this through the linear map $L$,

And this

can be written like this, and what it’s saying is exactly —

we’ve done exactly the same thing as what we did above. Exactly!!~~!!!~
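The $5 \times 5$ example lives in the figures, so here is a smaller stand-in: a hypothetical $3 \times 3$ matrix `A` with a hand-picked `R` (both invented for this sketch, with $A' = R^{-1} A R$ assumed as the convention). It checks that $A'$ really breaks into a $2 \times 2$ block $B$ and a $1 \times 1$ block $C$.

```python
def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]


# Hypothetical matrix; nothing about it looks "blocked" at first glance.
A = [[2, 3, -1],
     [4, 3, 0],
     [4, -2, 5]]

# Hand-picked change of basis and its inverse (computed by hand, det R = 1).
R = [[1, 1, 1],
     [1, 2, 2],
     [1, 2, 3]]
R_inv = [[2, -1, 0],
         [-1, 2, -1],
         [0, -1, 1]]

identity = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
assert matmul(R, R_inv) == identity  # sanity check on the inverse

A_prime = matmul(matmul(R_inv, A), R)

k = 2  # size of the first block
B = [row[:k] for row in A_prime[:k]]
C = [row[k:] for row in A_prime[k:]]
upper_right = [row[k:] for row in A_prime[:k]]
lower_left = [row[:k] for row in A_prime[k:]]

print(A_prime)
print(B, C, upper_right, lower_left)
```

Here `B` is `[[1, 2], [3, 4]]`, `C` is `[[5]]`, and both off-diagonal blocks are all zeros, which is the 3D version of the 5D picture above.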

Another question —

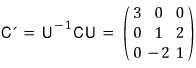

Can we block matrix $C$ even further?!?!?!?!?

Yes we can.

It’s just a matter of changing these bases appropriately again.

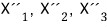

Let’s call them

stack them like this,

and call the coordinate change matrix $U$,

(You’ll see how to build it later — just take it on faith for now.)

So,

looking at $C$ in isolation, we did manage to change it into $C'$… but (sob)(sob)(sob)

can we just recklessly slot $C'$ into the spot where $C$ was?!?!?!?!

Hmm… before we do that, we need some justification for being allowed to.

In isolation it seems obviously fine,

but if we’re not looking at it in isolation and we want to plug it in here, how do we actually do that?!?!?!? How should $U$ be defined?!?!?!? (sob)(sob)(sob)(sob)

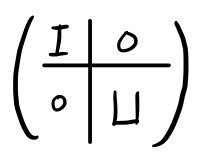

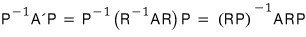

To use $U$ on the whole thing, it has to be written out as a $5 \times 5$ matrix.

If you build it like this (identity in the block where $B$ sits, $U$ in the block where $C$ sits), it acts exactly like it did when we were looking at $C$ alone, without messing with $B$.

Let’s call that matrix $P$.

So yes, it’s fine to swap $C'$ in for $C$,

and the justification — looking at the whole thing — is that

it works out like this.
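In symbols, assuming $P = \begin{pmatrix} I & 0 \\ 0 & U \end{pmatrix}$ and $C' = U^{-1} C U$ (the figures may write it with different letters), the block multiplication goes:

$$P^{-1} A' P = \begin{pmatrix} I & 0 \\ 0 & U^{-1} \end{pmatrix} \begin{pmatrix} B & 0 \\ 0 & C \end{pmatrix} \begin{pmatrix} I & 0 \\ 0 & U \end{pmatrix} = \begin{pmatrix} B & 0 \\ 0 & U^{-1} C U \end{pmatrix} = \begin{pmatrix} B & 0 \\ 0 & C' \end{pmatrix}$$

The identity blocks leave $B$ exactly as it was, and $U$ only ever touches $C$.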

Let’s tidy up the left side a bit more.

Make sense now??? We did the blocking in two passes,

but really, if we had just used the coordinate change matrix $RP$ from the start, we could’ve gotten the same result in one shot.
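The reason is just $P^{-1} (R^{-1} A R) P = (RP)^{-1} A (RP)$. Here is a sketch that checks it on a hypothetical $3 \times 3$ example (all matrices invented for illustration, with $B$ of size $1 \times 1$ and $C$ of size $2 \times 2$, and the convention $A' = R^{-1} A R$ assumed).

```python
from fractions import Fraction


def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]


half = Fraction(1, 2)

# Hypothetical starting matrix.
A = [[5, -2, 0],
     [0, 3, 0],
     [0, 1, 1]]

# First pass: R blocks A into B (1x1) and C (2x2).
R = [[1, 1, 1],
     [0, 1, 1],
     [0, 0, 1]]
R_inv = [[1, -1, 0],
         [0, 1, -1],
         [0, 0, 1]]

# Second pass: P = diag(1, U) with U = [[1, 1], [1, -1]] acting on C only.
P = [[1, 0, 0],
     [0, 1, 1],
     [0, 1, -1]]
P_inv = [[1, 0, 0],
         [0, half, half],
         [0, half, -half]]

# Two passes.
A_blocked = matmul(matmul(R_inv, A), R)
two_pass = matmul(matmul(P_inv, A_blocked), P)

# One shot with the combined matrix RP; its inverse is P^{-1} R^{-1}.
RP = matmul(R, P)
RP_inv = matmul(P_inv, R_inv)
one_shot = matmul(matmul(RP_inv, A), RP)

print(A_blocked)
print(two_pass == one_shot, one_shot)
```

After the first pass you get the blocks $B = (5)$ and $C = \begin{pmatrix} 2 & 1 \\ 1 & 2 \end{pmatrix}$. After the second pass, or the single combined one, everything is $\operatorname{diag}(5, 3, 1)$.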

And now, how do you express this with a direct sum —

like this, right?

So: “If you keep~~~ blocking, in the end it diagonalizes~~”

But when you push toward diagonalization through blocking, there are cases where it goes all the way to diagonal, and cases where it doesn’t.

Among the cases where it doesn’t, there are matrices that simply can’t be blocked from the start,

and there are matrices where it becomes impossible partway through the diagonalization process….

And on top of that, the answer can change depending on which field the entries live in.

(Meaning: there are matrices that can’t be diagonalized over the reals but can be diagonalized once you allow imaginary numbers, i.e. over the complex numbers.)

(Same vibe as how $x^2 + 1$ can’t be factored over the reals but factors just fine over the complex numbers.)
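A minimal sketch of that, using the $90°$ rotation matrix as a hypothetical example (it is not from the post). Its characteristic polynomial is exactly $x^2 + 1$, so there are no real eigenvalues, but with complex entries allowed a hand-picked `R` diagonalizes it. The convention $A' = R^{-1} A R$ is again an assumption.

```python
def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]


def inv2(M):
    """Inverse of a 2x2 matrix with nonzero determinant."""
    d = M[0][0] * M[1][1] - M[0][1] * M[1][0]
    return [[M[1][1] / d, -M[0][1] / d],
            [-M[1][0] / d, M[0][0] / d]]


# Rotation by 90 degrees.
A = [[0, -1],
     [1, 0]]

# Characteristic polynomial of a 2x2 matrix: x^2 - (tr A) x + det A.
tr = A[0][0] + A[1][1]
det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
discriminant = tr ** 2 - 4 * det  # negative means no real eigenvalues

# Over the complex numbers: hand-picked columns (1, -i) and (1, i).
R = [[1, 1],
     [-1j, 1j]]
D = matmul(matmul(inv2(R), A), R)

print(discriminant)
print(D)
```

The discriminant is negative, so no real change of basis can make this matrix diagonal, while over the complex numbers `D` comes out as $\operatorname{diag}(i, -i)$ up to floating-point rounding.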

Originally written in Korean on my Naver blog (2016-01). Translated to English for gdpark.blog.
