Is Further Block Decomposition Always Possible?

Poking at a special case where the minimal and characteristic polynomials match as a power of an irreducible polynomial, and what that tells us about block decomposition.

Today we’re gonna look at some special cases and poke at their properties.

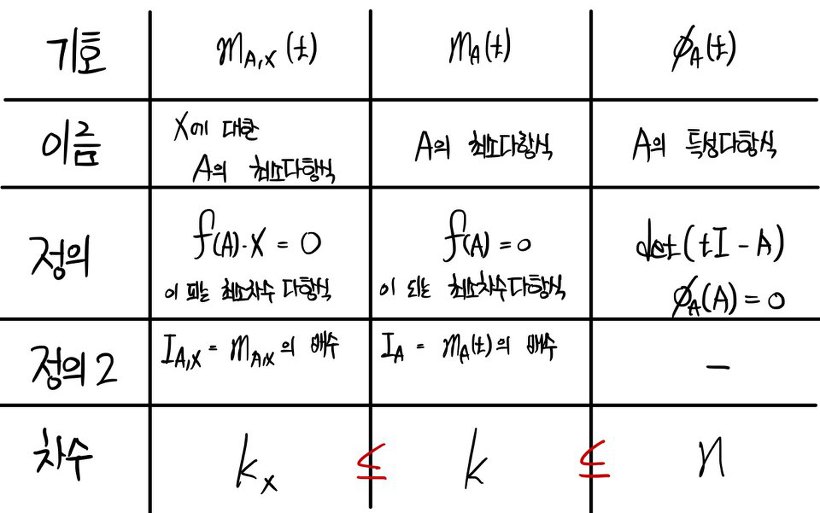

But — if this is the first post of mine you’re seeing, this is gonna feel like it dropped out of nowhere. So let me just paste in the conclusion from the very end of the last post.

Where did we leave off last time?

Right here. This is where we had wrapped up.

OK so — what special case are we gonna look at?

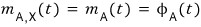

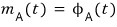

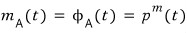

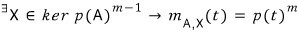

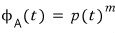

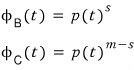

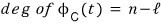

What if this is the case?!?!?! What happens then??

If that holds,

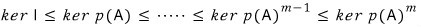

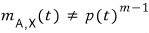

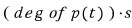

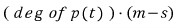

then the inequality that used to be this

collapses into this, like so…

Which means the leading degree of each polynomial is the same, so

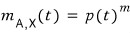

it ends up looking like this.

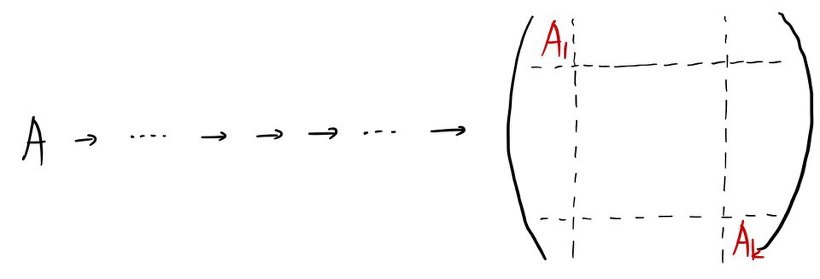

And what this is telling us is — among all the matrices similar to A, there’s an A’ that can be written as a companion matrix.

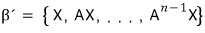

Being expressible as a companion matrix means

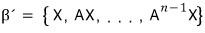

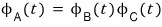

the basis that builds A’ has the shape

X, AX, A^2 X, ..., A^(n-1) X, like this, and

for those n vectors to really be a basis,

there has to exist an X that makes them linearly independent, right?

Yep. That’s the one.

There’s an X that makes it happen!!!!
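Here is a small sketch of that idea in Python, just to make it concrete. Everything in it is my own illustrative choice and not from the original post: the sample matrix `A` (a 3 × 3 Jordan block, whose minimal and characteristic polynomials are both (t - 2)^3), the starting vector `X`, and the helper names `mat_vec` and `solve`. It builds X, AX, A^2 X, checks that they form a basis, and reads off the last column of the companion matrix.

```python
from fractions import Fraction

def mat_vec(A, x):
    """Multiply matrix A (list of rows) by vector x."""
    return [sum(a * b for a, b in zip(row, x)) for row in A]

def solve(cols, b):
    """Find c with sum_j c[j] * cols[j] == b via Gauss-Jordan elimination.
    Returns None when the given column vectors are linearly dependent."""
    n = len(b)
    # Augmented matrix [P | b], where P has the vectors in `cols` as columns.
    M = [[Fraction(cols[j][i]) for j in range(n)] + [Fraction(b[i])] for i in range(n)]
    for col in range(n):
        pivot = next((r for r in range(col, n) if M[r][col] != 0), None)
        if pivot is None:
            return None
        M[col], M[pivot] = M[pivot], M[col]
        M[col] = [v / M[col][col] for v in M[col]]
        for r in range(n):
            if r != col and M[r][col] != 0:
                factor = M[r][col]
                M[r] = [vr - factor * vc for vr, vc in zip(M[r], M[col])]
    return [M[i][n] for i in range(n)]

# Illustrative matrix (an assumption): min. poly. = char. poly. = (t - 2)^3.
A = [[2, 1, 0],
     [0, 2, 1],
     [0, 0, 2]]
X = [0, 0, 1]          # candidate cyclic vector (an assumption)
n = len(A)

# The candidate basis X, AX, ..., A^(n-1) X.
krylov = [X]
for _ in range(n - 1):
    krylov.append(mat_vec(A, krylov[-1]))

# Coordinates of A * (A^(n-1) X) in that basis give the companion's last column.
last_col = solve(krylov, mat_vec(A, krylov[-1]))

# Columns 0..n-2 of the companion matrix are shifted unit vectors.
companion = [[Fraction(int(i == j + 1)) if j < n - 1 else last_col[i]
              for j in range(n)] for i in range(n)]
print(companion)
```

If you swap in `X = [1, 0, 0]`, the vectors X, AX, A^2 X are all multiples of each other, so `solve` returns `None`: not every X works, but at least one does.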

In the case where this holds?!?!?!?!

What this is really saying is

it’s the same as asking what happens when this holds, and

what on earth does that even mean……

It’s not hard!!!!!

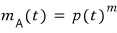

Here, p(t) is an irreducible polynomial.

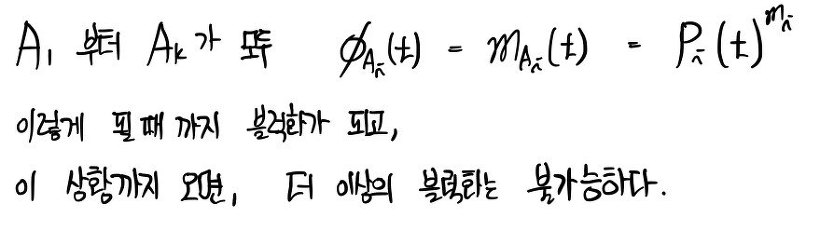

Let’s say the minimal polynomial and the characteristic polynomial are the same — but they’re the same in the form of a power of an irreducible polynomial.

Because once we crack open this special case, we can talk about general properties from it. That’s why we set up the situation above.

ker B is a set of vectors: specifically, the set of X’s satisfying BX = 0.

So then,

if we’re talking about ker p(A)^k,

it means the set of X’s satisfying p(A)^k X = 0.

And if we slap p(A) onto the left side of that equality, the same way,

p(A)^(k+1) X = 0 automatically holds. So every X in ker p(A)^k also sits in ker p(A)^(k+1), and the inclusion relationship ker I ⊂ ker p(A) ⊂ ... ⊂ ker p(A)^m falls out automatically.

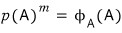

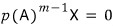

Look at the far left end. X belonging to ker I means X satisfies IX = 0 — so the only thing that fits is the zero vector.

∴ ker I = { 0 } and the dimension is 0…

On the far right,

X belonging to ker p(A)^m

means X’s satisfying p(A)^m X = 0,

but is there even an X that satisfies this?!?!

→ There are a TON of them lol!!!!!

It holds for absolutely every single vector!!! Because!!!!!

the minimal polynomial of A is p(t)^m, so p(A)^m is just the zero matrix!!!! So it holds for all vectors.

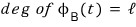

∴ ker p(A)^m is a vector space containing every vector in sight!!!! Dimension is n !!!!!

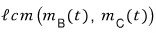

If you draw this as a Venn diagram, apparently it looks like this.
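To see the chain grow from dimension 0 up to n, here is a minimal sketch in plain Python. The concrete choices are my assumptions, not the post’s: p(t) = t^2 + 1 (irreducible over the rationals), m = 2, and `A` is the companion matrix of (t^2 + 1)^2, so n = 4. The helpers `mat_mul` and `rank` are hypothetical names written just for this example.

```python
from fractions import Fraction

def mat_mul(A, B):
    """Product of two square matrices given as lists of rows."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def rank(M):
    """Rank by exact Gaussian elimination over the rationals."""
    rows = [[Fraction(v) for v in row] for row in M]
    r = 0
    for col in range(len(rows[0])):
        pivot = next((i for i in range(r, len(rows)) if rows[i][col] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(r + 1, len(rows)):
            if rows[i][col] != 0:
                f = rows[i][col] / rows[r][col]
                rows[i] = [a - f * b for a, b in zip(rows[i], rows[r])]
        r += 1
        if r == len(rows):
            break
    return r

# Companion matrix of (t^2 + 1)^2 = t^4 + 2t^2 + 1 (illustrative assumption).
A = [[0, 0, 0, -1],
     [1, 0, 0,  0],
     [0, 1, 0, -2],
     [0, 0, 1,  0]]
n, m = 4, 2

identity = [[int(i == j) for j in range(n)] for i in range(n)]
A2 = mat_mul(A, A)
p_A = [[A2[i][j] + identity[i][j] for j in range(n)] for i in range(n)]  # p(A) = A^2 + I

# dim ker p(A)^k = n - rank p(A)^k for k = 0, 1, ..., m.
kernel_dims = []
power = identity                      # p(A)^0 = I
for k in range(m + 1):
    kernel_dims.append(n - rank(power))
    power = mat_mul(power, p_A)

print(kernel_dims)
```

For this particular matrix the dimensions strictly increase at every step, starting at 0 for ker I and ending at n for ker p(A)^m.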

Now I’m gonna toss out a proposition.

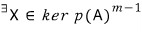

X does not belong to ker p(A)^(m-1), so

we’re talking about a vector X that does not land inside ker p(A)^(m-1) but still lives inside ker p(A)^m,

and for that X,

p(A)^m X = 0 holds, and

p(A)^(m-1) X ≠ 0 is the case!!!

Now, h-o-w-e-v-e-r !!!!!!!!!

outside of ker p(A)^(m-1),

does an X like that, one that lives inside ker p(A)^m but not inside ker p(A)^(m-1), actually exist?!?!

Yes….. it always exists lol!!

Because,

ker p(A)^(m-1) and

ker p(A)^m can never, ever be equal under our assumption.

If the two sets were equal,

every vector X would satisfy p(A)^(m-1) X = 0, meaning p(A)^(m-1) = 0, so the minimal polynomial of A would become

a divisor of p(t)^(m-1).

And then the assumption we set up at the very start, that the minimal polynomial is p(t)^m, would be broken. Can’t allow that.

That is,

- an X that is in ker p(A)^m but not in ker p(A)^(m-1) always!!! exists!!!!!

That is,

this is one property !!! Yeah!

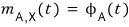
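A quick way to actually find such an X, sketched in plain Python under my own assumptions (not from the post): p(t) = t^2 + 1, m = 2, and `A` is the companion matrix of (t^2 + 1)^2. Since ker p(A)^(m-1) is a proper subspace, the standard basis vectors cannot all sit inside it, so it is enough to try them one by one. The helper names are hypothetical.

```python
def mat_mul(A, B):
    """Product of two square matrices given as lists of rows."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def mat_vec(A, x):
    """Multiply matrix A by vector x."""
    return [sum(a * b for a, b in zip(row, x)) for row in A]

# Companion matrix of (t^2 + 1)^2 (illustrative assumption).
A = [[0, 0, 0, -1],
     [1, 0, 0,  0],
     [0, 1, 0, -2],
     [0, 0, 1,  0]]
n, m = 4, 2

identity = [[int(i == j) for j in range(n)] for i in range(n)]
A2 = mat_mul(A, A)
p_A = [[A2[i][j] + identity[i][j] for j in range(n)] for i in range(n)]  # p(A) = A^2 + I

# Build p(A)^(m-1).
power = identity
for _ in range(m - 1):
    power = mat_mul(power, p_A)

# Standard basis vectors e_i with p(A)^(m-1) e_i != 0, i.e. outside ker p(A)^(m-1).
outside = [i for i in range(n)
           if any(v != 0 for v in mat_vec(power, identity[i]))]

# A vector that does land inside ker p(A)^(m-1), for contrast.
inside_example = [1, 0, 1, 0]
inside_image = mat_vec(power, inside_example)

print(outside, inside_image)
```

Every vector is in ker p(A)^m here because p(A)^m is the zero matrix, so any index listed in `outside` gives an X of the kind the proposition talks about.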

For a matrix A like this, there’s yet another important property.

And that one is~~~~~

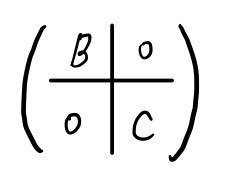

a similar matrix A’ decomposed like this can absolutely never be made.

Let me prove it.

The assumption is “decomposition is possible” — I’ll assume that and roll with it.

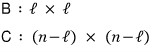

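I’ll assume the sizes look like this: the two diagonal blocks A_1 and A_2 are n_1 × n_1 and n_2 × n_2, each at least 1 × 1,

n_1 + n_2 = n is of course obvious,

and from here, the characteristic polynomial of a block-diagonal matrix is the product of the blocks’ characteristic polynomials, so (char. poly. of A_1) × (char. poly. of A_2) = p(t)^m,

right?!?! Then, since p(t) is irreducible,

the characteristic polynomial of A_1 and

the characteristic polynomial of A_2 will each be written as

p(t)^(m_1) and p(t)^(m_2), with m_1 + m_2 = m and m_1, m_2 ≥ 1.

OK so,

but that would mean m_1 < m,

wouldn’t it?!?!

By the same logic,

m_2 < m,

riiight~~~~

Also,

the minimal polynomial of A_1 is a divisor of

p(t)^(m_1), and

the minimal polynomial of A_2 is a divisor of

p(t)^(m_2).

The ingredients are all on the counter now.

Let’s think about

the minimal polynomial of A’.

This would be

the least common multiple of the minimal polynomials of A_1 and A_2, so it is p(t)^q with q ≤ max(m_1, m_2), and if we work it out using the ingredients we just prepped,

the conclusion that pops out is: q has to be strictly less than m.

Whoa~~~~~~

but we said the minimal polynomial of A, and so of the similar matrix A’, is p(t)^m!!!

That is, the result we got is that they can absolutely never be equal, so it’s a contradiction!

What caused the contradiction?!?!

“Assuming decomposition is possible”: that was the culprit.

(In the situation where the minimal polynomial and the characteristic polynomial are both p(t)^m.)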

Q.E.D.

quod erat demonstrandum
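To watch the exponent drop when a matrix does split into blocks, here is a small sketch in plain Python. All the specifics are my own assumptions for illustration: p(t) = t^2 + 1, the matrix `A` is the companion matrix of p(t)^2, and `B` is a block-diagonal matrix built from two copies of the companion matrix of p(t). Both have characteristic polynomial p(t)^2, but only `A` has minimal polynomial p(t)^2. The helper `p_exponent` is a hypothetical name.

```python
def mat_mul(A, B):
    """Product of two square matrices given as lists of rows."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def p_exponent(M, max_k):
    """Smallest k in 1..max_k with p(M)^k = 0, where p(t) = t^2 + 1.
    Returns None if no such k is found."""
    n = len(M)
    M2 = mat_mul(M, M)
    p_M = [[M2[i][j] + int(i == j) for j in range(n)] for i in range(n)]
    power = p_M
    for k in range(1, max_k + 1):
        if all(v == 0 for row in power for v in row):
            return k
        power = mat_mul(power, p_M)
    return None

# Companion matrix of (t^2 + 1)^2: cannot be split (illustrative assumption).
A = [[0, 0, 0, -1],
     [1, 0, 0,  0],
     [0, 1, 0, -2],
     [0, 0, 1,  0]]

# Block-diagonal matrix with two copies of the companion matrix of t^2 + 1.
B = [[0, -1, 0,  0],
     [1,  0, 0,  0],
     [0,  0, 0, -1],
     [0,  0, 1,  0]]

m = 2
q_A = p_exponent(A, m)   # exponent in the minimal polynomial of A
q_B = p_exponent(B, m)   # exponent in the minimal polynomial of B

print(q_A, q_B)
```

The split matrix `B` ends up with q strictly less than m, which is exactly the mismatch the proof uses.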

That was the moment one of those long-simmered, long-simmered questions finally got resolved!!!!!!!!!!!!!!

Our very first question was “Is diagonalization possible for all matrices?!?!?!?!”

↓

“Ohhhhh so if we just keep block-decomposing step by step, it’ll end up diagonalized?!?!”

↓

“Then is there a case where block decomposition is not possible?!?!!?!?!?!?!?!?!”

↓

And now we get to put a little more flesh on the bones right here.

Originally written in Korean on my Naver blog (2016-02). Translated to English for gdpark.blog.
