Moment Generating Function

Turns out mean and variance are just the 1st and 2nd moments — and the MGF is the tool mathematicians built to pull any moment out, cleanly, anytime!

So you get a bundle of data. What do you do with it? You compute stuff — mean, variance, that kind of thing.

It’s just… you’re trying to pull out the characteristics of the data, right? So…

Calculating the mean and the variance and whatever doesn’t feel weird at all.

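But here’s the thing: the “mean” is actually called the first moment (1st moment).

And the “variance” is called the second moment (2nd moment). Strictly speaking it’s the second central moment, meaning the second moment measured around the mean.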

So what’s that 1 and that 2 hooked to —

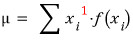

The definition of the mean is

this, right? And it’s tied to that ‘1’ I colored red up there.

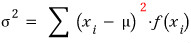

And the definition of variance is

this, and turns out it’s tied to the ‘2’ I colored red.
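Written out in symbols, the two definitions look like the sketch below. It assumes a continuous random variable $X$ with density $f(x)$ and mean $\mu$ (notation picked here for illustration; for discrete data, swap the integrals for sums). The exponents are the ‘1’ and the ‘2’ in question:

$$
\begin{aligned}
\text{mean:}\quad \mu &= E[X^{1}] = \int_{-\infty}^{\infty} x^{1} f(x)\,dx \\
\text{variance:}\quad \sigma^{2} &= E[(X-\mu)^{2}] = \int_{-\infty}^{\infty} (x-\mu)^{2} f(x)\,dx
\end{aligned}
$$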

OK so far I’ve only talked up to the 2nd moment — and that’s where high school stops —

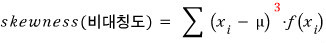

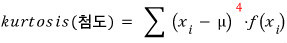

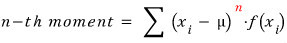

but if there’s a 1 and there’s a 2, then what about 3? What about 4? What about $n$????????????

Yep! They exist.
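To make that concrete, here is a minimal Python sketch that computes the $n$-th moment of a bundle of data for any $n$. The function names `raw_moment` and `central_moment` and the sample `data` are made up for illustration, and the data is treated as the whole population (so it divides by $N$).

```python
def raw_moment(data, n):
    """n-th raw moment: the average of x**n over the data."""
    return sum(x ** n for x in data) / len(data)


def central_moment(data, n):
    """n-th central moment: the average of (x - mean)**n over the data."""
    mean = raw_moment(data, 1)
    return sum((x - mean) ** n for x in data) / len(data)


# Hypothetical sample, chosen only so the numbers come out round.
data = [2, 4, 4, 4, 5, 5, 7, 9]

mean = raw_moment(data, 1)          # 1st moment
variance = central_moment(data, 2)  # 2nd moment, taken around the mean

print("mean:", mean)
print("variance:", variance)
for n in (3, 4):
    print(f"moment {n} (raw):", raw_moment(data, n))
```

For this sample the mean is 5 and the variance is 4. The 3rd and 4th moments come out of exactly the same formula, just with a bigger exponent.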

.

.

.

And this part right here — this is the ‘moment’ part of moment generating function, isn’t it??

Like the name itself says, mathematicians cooked up the moment generating function so they could pull moments out cleanly, in any situation, anytime.

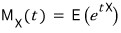

The MGF of a random variable $X$ is written as

$M_X(t)$,

and the definition is

$$M_X(t) = E\left[e^{tX}\right]$$

You’re asking why it’s defined like this??? Super simple.

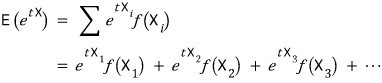

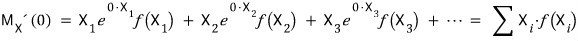

by the definition of expected value, is

That is,

and there it is.

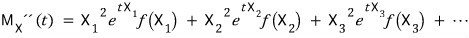

Differentiate both sides with respect to $t$:

Differentiate both sides one more time with respect to $t$:

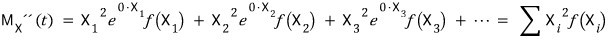

Now — shall we plug $t=0$ into each of those??????????

The thing that has these characteristics is the moment generating function.
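Here is a small numerical check of that differentiate-then-plug-in-$t=0$ recipe, as a sketch under stated assumptions. The random variable is a fair six-sided die (an example picked here, not taken from the derivation), the MGF is built straight from $E[e^{tX}]$, and the derivatives at $t=0$ are approximated with central finite differences of step `h` rather than computed symbolically. The helper names are made up for illustration.

```python
import math

# Fair six-sided die: each face 1..6 has probability 1/6 (illustrative choice).
pmf = {face: 1 / 6 for face in range(1, 7)}


def mgf(t):
    """M_X(t) = E[e^{tX}], summed directly over the pmf."""
    return sum(p * math.exp(t * x) for x, p in pmf.items())


def moment(n):
    """E[X^n], computed directly from the pmf for comparison."""
    return sum(p * x ** n for x, p in pmf.items())


h = 1e-3  # finite-difference step (an assumption; small but not too small)

# Central-difference approximations of M'(0) and M''(0).
first_derivative_at_0 = (mgf(h) - mgf(-h)) / (2 * h)
second_derivative_at_0 = (mgf(h) - 2 * mgf(0) + mgf(-h)) / h ** 2

print("M'(0)  ~", first_derivative_at_0, " vs E[X]   =", moment(1))
print("M''(0) ~", second_derivative_at_0, " vs E[X^2] =", moment(2))

# The variance then follows from the first two moments: E[X^2] - (E[X])^2.
variance = second_derivative_at_0 - first_derivative_at_0 ** 2
print("variance ~", variance)
```

The two columns should agree up to the finite-difference error. Note that $M_X''(0)$ gives $E[X^2]$, the second moment around zero, so the variance comes out as $E[X^2] - (E[X])^2$.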

OK so to summarize before moving on —

it’s all because of this property:

$$\left.\frac{d^{n}}{dt^{n}} M_X(t)\right|_{t=0} = E[X^{n}]$$

!!!!

But honestly, the real reason I’m pinning the MGF down here is

a different property of it that we’ll use later on.

What property —

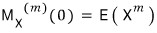

If you’ve got two random variables $X$ and $Y$, with probability distributions $f$ and $g$ respectively,

and $X$ and $Y$ live on the same space,

→ then their MGFs being equal, meaning $M_X(t) = M_Y(t)$ for every $t$ in some open interval around $0$, means $f = g$. So they say.

That is — if the MGF exists, there is one and only one probability distribution that corresponds to it!!!!!!!!!!!!

Also, side note —

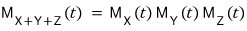

Say you’ve got mutually independent random variables $X, Y, Z$.

Then each one’s MGF is written as

$M_X(t)$,

$M_Y(t)$,

$M_Z(t)$,

right??????

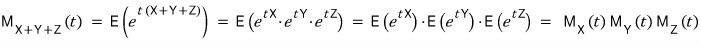

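But in this case, the MGF of the random variable $X+Y+Z$ is

$$M_{X+Y+Z}(t) = E\left[e^{t(X+Y+Z)}\right] = E\left[e^{tX}\right]E\left[e^{tY}\right]E\left[e^{tZ}\right] = M_X(t)\,M_Y(t)\,M_Z(t)$$

where independence is what lets the expected value of the product split into a product of expected values.

That’s the fact.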

I used 3 as an example, but it works for any $n$ too.

Yeah, the logic just goes through like that.
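As a quick sanity check of the product rule, here is a sketch that builds the distribution of $X+Y+Z$ by brute force and compares its MGF with the product of the three individual MGFs at a few values of $t$. The three distributions (a die, a coin, and a three-point variable) and the helper names `mgf` and `pmf_of_sum` are made up for illustration.

```python
import math
from itertools import product


def mgf(pmf, t):
    """M(t) = E[e^{tX}] for a discrete distribution given as {value: probability}."""
    return sum(p * math.exp(t * x) for x, p in pmf.items())


def pmf_of_sum(*pmfs):
    """Distribution of the sum of independent variables.

    Independence is what lets each joint probability be written as a product.
    """
    total = {}
    for combo in product(*(pmf.items() for pmf in pmfs)):
        value = sum(x for x, _ in combo)
        prob = math.prod(p for _, p in combo)
        total[value] = total.get(value, 0.0) + prob
    return total


# Three hypothetical independent variables.
pmf_x = {face: 1 / 6 for face in range(1, 7)}  # fair die
pmf_y = {0: 0.5, 1: 0.5}                       # fair coin
pmf_z = {-1: 0.2, 0: 0.5, 2: 0.3}              # arbitrary three-point variable

pmf_s = pmf_of_sum(pmf_x, pmf_y, pmf_z)

for t in (-0.5, 0.0, 0.3, 1.0):
    lhs = mgf(pmf_s, t)
    rhs = mgf(pmf_x, t) * mgf(pmf_y, t) * mgf(pmf_z, t)
    print(f"t={t:+.1f}  M_sum={lhs:.6f}  product={rhs:.6f}")
```

The two printed columns should match at every $t$ up to floating-point rounding. Passing more distributions to `pmf_of_sum` checks the same thing for any $n$.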

Since this MGF thing gets used a bit later on, I figured I’d jot it down simply now before moving on!

Next time — we’re kicking off with ‘distributions’!

Originally written in Korean on my Naver blog (2017-11). Translated to English for gdpark.blog.
