Derivation of the Exponential Distribution

We skip the normal distribution to derive the exponential distribution — aka the waiting time until the first event — straight from the Poisson!

So we just wrapped up the Poisson distribution, which means (by all rights, by every law of textbook ordering) the next thing we should be doing is the “normal distribution.” The Gaussian. The big one.

But… nope! We’re skipping the normal distribution!!!!

We’re doing the exponential distribution!!

You might’ve already noticed something while grinding through Poisson problems — or maybe “noticed” is too gentle a word. Maybe it kind of hit you in the chest, you know?

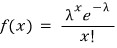

OK so anyway. Recall: the Poisson distribution was

“the distribution of the number of events happening during some reference time period.”

So the exponential distribution, which is the thing we’re doing today, is about something slightly different but very much related: it’s about “the waiting time until the first event happens.”

Alright. So suppose we have a Poisson distribution where the average number of events per unit time is λ.

We’re going to stretch the interval out!!!

We stretch it out to “interval $t$” — and over this interval, how many events occur on average?

Well, since we had an average of λ per unit time,

over an “interval $t$” we’ll have an average of $\lambda t$ events. (This is baked right into the assumptions of a Poisson process, btw.)

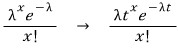

So the probability distribution becomes

$$P(X = x) = \frac{(\lambda t)^x e^{-\lambda t}}{x!}, \quad x = 0, 1, 2, \dots$$

like that. (Still a Poisson distribution, just with $\lambda t$ in place of $\lambda$.)

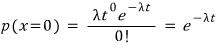
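Here’s a minimal sketch for checking that stretched-out Poisson formula numerically. The function name `poisson_pmf` and the values `lam = 2.0`, `t = 3.0` are my own illustrative choices, not something from the original notes. It just adds up the probabilities and confirms that they total 1 and that the average count comes out to $\lambda t$.

```python
import math

def poisson_pmf(x: int, lam: float, t: float) -> float:
    """P(X = x) for the event count in an interval of length t, with rate lam per unit time."""
    mu = lam * t  # average number of events over the whole interval
    return mu ** x * math.exp(-mu) / math.factorial(x)

lam, t = 2.0, 3.0  # illustrative values (assumption)

# Summing up to x = 99 is plenty here: the tail beyond that is negligible for mu = 6.
probs = [poisson_pmf(x, lam, t) for x in range(100)]
total = sum(probs)                               # should be very close to 1
mean = sum(x * p for x, p in enumerate(probs))   # should be very close to lam * t

print(total, mean, lam * t)
```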

But now watch what happens if I plug $x=0$ into that:

$$P(X = 0) = \frac{(\lambda t)^0 e^{-\lambda t}}{0!} = e^{-\lambda t}$$

What does this mean? It’s the probability that no event occurs during interval $t$….

Which — wait — is the same thing as saying “the probability that the waiting time until the first occurrence is bigger than $t$”!!!!

And conversely, the probability that an event occurs ‘at least once’ during interval $t$ is

$$P(X \ge 1) = 1 - P(X = 0) = 1 - e^{-\lambda t}$$

That’s what it’s gotta be.

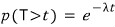
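To put numbers on it, here is a quick worked example. The values are made up purely for illustration: say events arrive at an average of $\lambda = 2$ per hour, and we watch for $t = 0.5$ hours, so $\lambda t = 1$.

$$P(\text{no event in } t) = e^{-\lambda t} = e^{-1} \approx 0.368$$

$$P(\text{at least one event in } t) = 1 - e^{-\lambda t} = 1 - e^{-1} \approx 0.632$$

So in this made-up setting, about 63% of the time the first event has already shown up within half an hour.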

OK so now let’s call the random variable for “waiting time (until the first occurrence)” $T$, and re-read what we just wrote with that in mind:

“The probability that no event occurs during interval $t$”

→ “The probability that $T$, the (waiting time until first occurrence), is greater than $t$”

→ In symbols: $P(T > t)$

→ And from above, we said $P(X = 0) = e^{-\lambda t}$, so therefore

$$P(T > t) = e^{-\lambda t}$$

Then, on the flip side,

“The probability that an event occurs at least once during interval $t$”

→ “The probability that $T$, the (waiting time until first occurrence), is at most $t$”

→ In symbols: $P(T \le t)$

→ And from above, we said $P(X \ge 1) = 1 - e^{-\lambda t}$, so therefore

$$F(t) = P(T \le t) = 1 - e^{-\lambda t}$$

and that’s the cumulative distribution, riiight??!??!!?

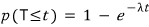
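If you want to see $P(T \le t) = 1 - e^{-\lambda t}$ come out of a simulation, here is a rough sketch. It leans on an assumption of mine: time is chopped into tiny bins of width `dt`, and each bin holds an event with probability `lam * dt`. The helper name `first_event_time` and all the numbers are illustrative. Because of the chopping, the match is only approximate.

```python
import math
import random

def first_event_time(lam: float, dt: float, rng: random.Random) -> float:
    """Walk through bins of width dt until one of them contains an event."""
    k = 1
    while rng.random() >= lam * dt:  # no event in this bin, move on to the next
        k += 1
    return k * dt  # the first event landed in bin number k

lam, t, dt = 2.0, 0.5, 0.01  # illustrative values (assumption)
rng = random.Random(0)       # fixed seed so reruns give the same numbers
trials = 20_000

waits = [first_event_time(lam, dt, rng) for _ in range(trials)]

# Fraction of runs whose waiting time is at most t (dt / 2 guards against float rounding).
empirical_cdf = sum(w <= t + dt / 2 for w in waits) / trials
formula_cdf = 1 - math.exp(-lam * t)

print(empirical_cdf, formula_cdf)
```

Shrinking `dt` should pull the two numbers closer together, at the cost of a slower loop.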

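Differentiate that cumulative distribution with respect to $t$ and let me write it like this:

$$f(t) = \frac{d}{dt}\left(1 - e^{-\lambda t}\right) = \lambda e^{-\lambda t}$$

That right there. That is what we call the exponential distribution >_<

And what’s it mean again???????????????

It’s the probability density of the waiting time at $t$. (The waiting time is continuous, so the chance of hitting exactly $t$ is zero; you get actual probabilities by integrating $f$ over an interval.)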

Oh and quick note — ($t$ and $\lambda$ are positive! Don’t forget that.)

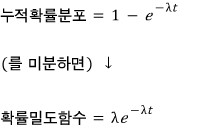

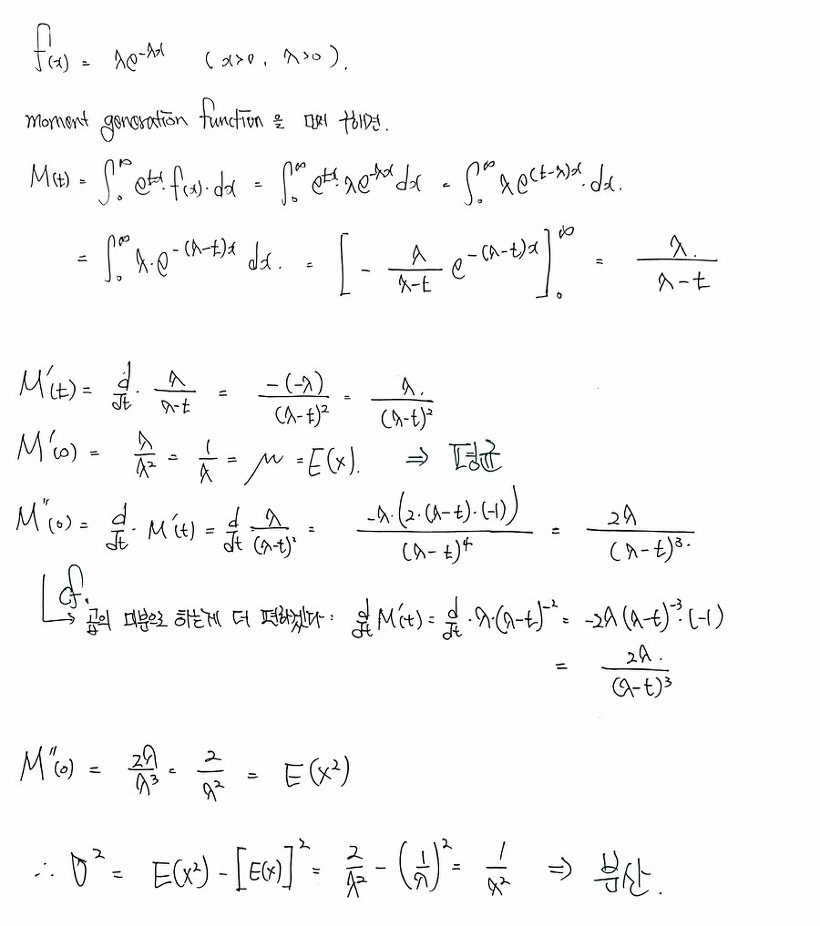

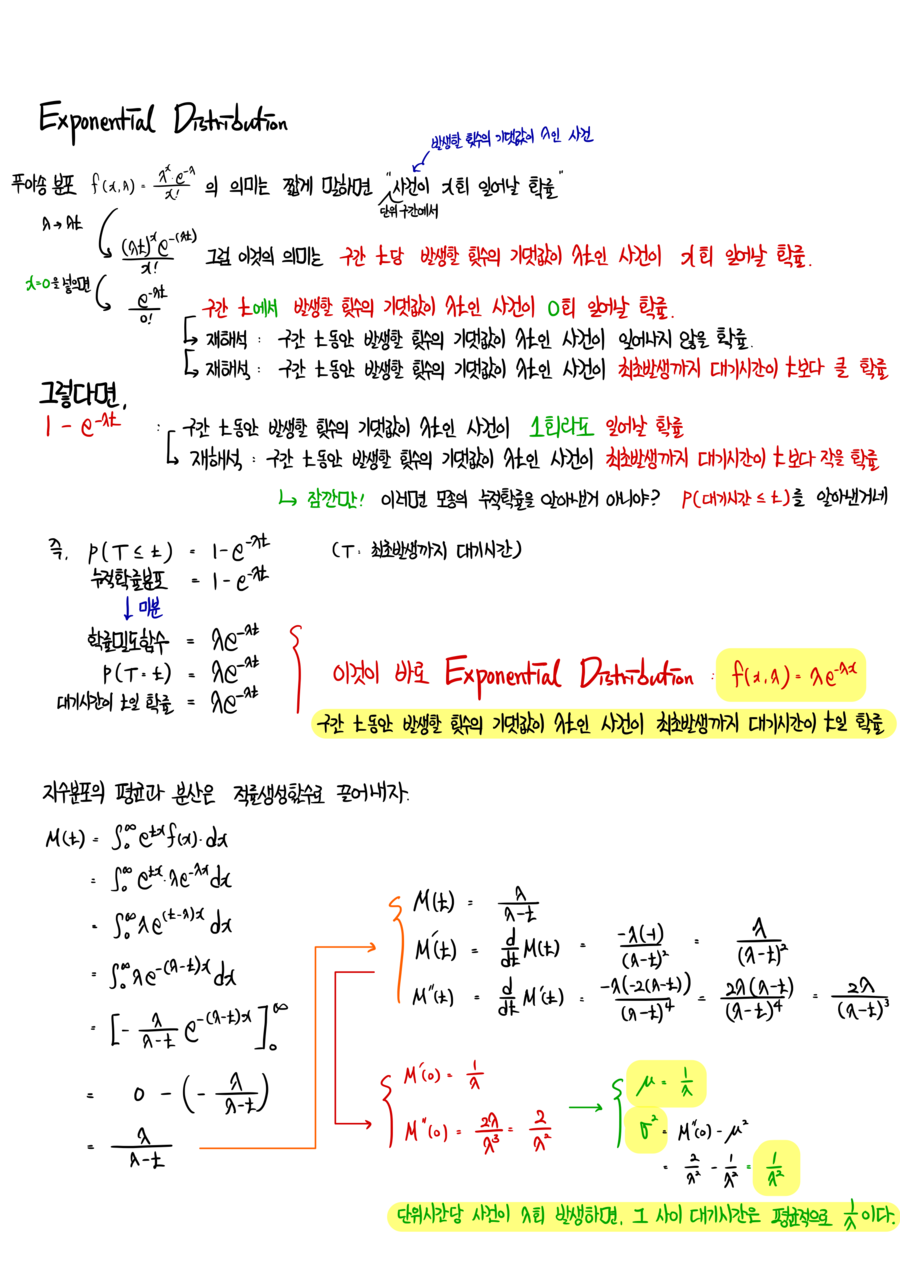
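A small numerical sketch of how the density $f(t) = \lambda e^{-\lambda t}$ and the cumulative distribution $F(t) = 1 - e^{-\lambda t}$ hang together. The rate `lam = 1.5` and the helper names `cdf` and `pdf` are illustrative assumptions. It checks two things: the slope of $F$ matches $f$, and the area under $f$ between two times matches the difference in $F$.

```python
import math

lam = 1.5  # illustrative rate (assumption)

def cdf(t: float) -> float:
    """F(t) = P(T <= t) = 1 - exp(-lam * t)."""
    return 1.0 - math.exp(-lam * t)

def pdf(t: float) -> float:
    """f(t) = lam * exp(-lam * t), the derivative of F."""
    return lam * math.exp(-lam * t)

# 1) The slope of the CDF (central difference) should match the density.
h = 1e-6
slopes = [((cdf(t + h) - cdf(t - h)) / (2 * h), pdf(t)) for t in (0.1, 1.0, 3.0)]
for slope, density in slopes:
    print(slope, density)

# 2) A probability is an area under the density (midpoint rule on [a, b]).
a, b, n = 0.5, 2.0, 10_000
width = (b - a) / n
area = width * sum(pdf(a + (i + 0.5) * width) for i in range(n))
print(area, cdf(b) - cdf(a))
```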

OK now let’s compute the mean and variance of the exponential distribution.

If I type the whole thing out in formulas it’s gonna take forever, so I’ll do it via photos.

But I’ll do the photos “nicely”! heh heh heh!

And since we already learned the moment generating function,

we’re gonna use that — way simpler!!!!

(Of course, you could also just grind it out by direct integration — that’s totally doable for this distribution!!!! See over here → http://gdpresent.blog.me/220582073367 it’s all there!!!!)
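For a quick sanity check of the direct-integration route, here is a numerical sketch. It is not the derivation at that link. It approximates $\int_0^\infty x\,f(x)\,dx$ and $\int_0^\infty x^2 f(x)\,dx$ with a midpoint rule and compares against the standard results $1/\lambda$ and $1/\lambda^2$. The rate `lam = 0.5`, the cutoff `upper = 100.0` standing in for infinity, and the helper `midpoint_integral` are all assumptions of mine.

```python
import math

lam = 0.5  # illustrative rate (assumption)

def pdf(x: float) -> float:
    """Exponential density f(x) = lam * exp(-lam * x) for x >= 0."""
    return lam * math.exp(-lam * x)

def midpoint_integral(g, a: float, b: float, n: int = 200_000) -> float:
    """Approximate the integral of g over [a, b] with the midpoint rule."""
    h = (b - a) / n
    return h * sum(g(a + (i + 0.5) * h) for i in range(n))

upper = 100.0  # stand-in for infinity; the tail beyond it is negligible for this lam

mean = midpoint_integral(lambda x: x * pdf(x), 0.0, upper)
second_moment = midpoint_integral(lambda x: x * x * pdf(x), 0.0, upper)
variance = second_moment - mean ** 2

print(mean, 1 / lam)           # numerical mean vs. 1/lambda
print(variance, 1 / lam ** 2)  # numerical variance vs. 1/lambda^2
```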

Down below, using the moment generating function,

I’m going to extract the mean and the variance!!!!!!!!

One quick heads-up: I’m going to temporarily rename the random variable from $t$ (time) to just $x$.

Reason being — the $t$ that shows up inside the moment generating function is its own thing, and I want it to stay as $t$ without colliding.

So please keep in mind: from this point on, the $t$ you see is not time.

And below, $f(x)$ is the exponential distribution whose random variable is time~~~
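In text form, the standard moment generating function computation goes roughly like this. It is a sketch of the usual textbook route and may differ in small details from the handwritten version. Here $x$ is the waiting time and $t$ is the argument of the moment generating function, and we need $t < \lambda$ for the integral to converge.

$$M_X(t) = E\left[e^{tX}\right] = \int_0^\infty e^{tx}\,\lambda e^{-\lambda x}\,dx = \lambda \int_0^\infty e^{-(\lambda - t)x}\,dx = \frac{\lambda}{\lambda - t}, \quad t < \lambda$$

$$M_X'(t) = \frac{\lambda}{(\lambda - t)^2} \quad\Rightarrow\quad E[X] = M_X'(0) = \frac{1}{\lambda}$$

$$M_X''(t) = \frac{2\lambda}{(\lambda - t)^3} \quad\Rightarrow\quad E[X^2] = M_X''(0) = \frac{2}{\lambda^2}$$

$$\mathrm{Var}(X) = E[X^2] - \left(E[X]\right)^2 = \frac{2}{\lambda^2} - \frac{1}{\lambda^2} = \frac{1}{\lambda^2}$$

So the mean waiting time is $1/\lambda$ and the variance is $1/\lambda^2$.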

↓ Cleaned up a bit nicer on iPad:

cf. Poisson process:

For events that happen randomly over time —

The numbers of events in non-overlapping intervals are mutually independent, and

The probability of one event happening in a short time interval is proportional to the length of that interval, and

The probability of two or more events happening in a short time interval is negligible, and

If the above conditions hold the same way in any chunk of the total time,

— that’s what we call a Poisson process.
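Those conditions can be turned into a rough simulation. This is a sketch under my own assumptions: time is cut into short bins of width `dt`, each bin independently gets one event with probability `lam * dt`, and two events in one bin never happen. The name `count_events` and all the numbers are illustrative. If the conditions really produce a Poisson count, both the mean and the variance of the count over an interval $t$ should land near $\lambda t$.

```python
import random

def count_events(lam: float, t: float, dt: float, rng: random.Random) -> int:
    """Count events in [0, t]: each bin of width dt independently holds at most one event."""
    n_bins = round(t / dt)
    # Probability of an event in a short bin is proportional to its length: lam * dt.
    return sum(rng.random() < lam * dt for _ in range(n_bins))

lam, t, dt = 2.0, 3.0, 0.01  # illustrative values (assumption)
rng = random.Random(1)       # fixed seed so reruns give the same numbers
trials = 5_000

counts = [count_events(lam, t, dt, rng) for _ in range(trials)]
mean = sum(counts) / trials
var = sum((c - mean) ** 2 for c in counts) / trials

print(mean, var, lam * t)  # mean and variance should both sit near lam * t
```

With a finite `dt` the count is really binomial, so the variance comes out slightly below $\lambda t$; making `dt` smaller narrows that gap.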

↓ Cleaned up a bit nicer and put on a single page on iPad:


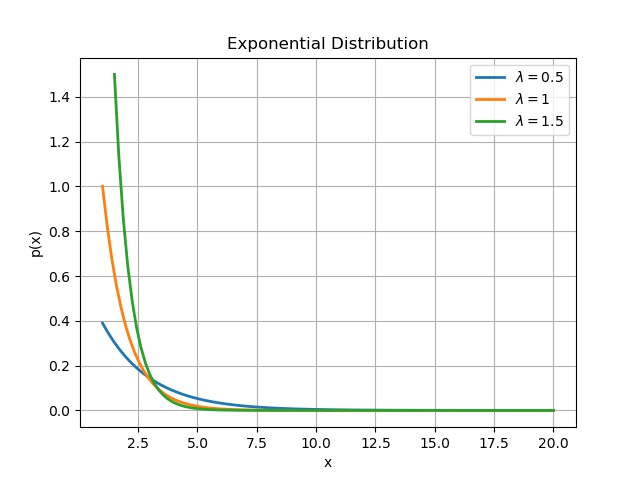

```python
import numpy as np
import matplotlib.pyplot as plt
import scipy.stats as sc

# Plot the exponential density f(x) = lambda * exp(-lambda * x) for several rates.
x = np.linspace(0, 20, 200)

for llambda in (0.5, 1, 1.5):
    # scipy's expon is parameterized by scale = 1/lambda.
    # loc shifts the whole curve, so it stays at 0 to keep the support at x >= 0.
    pd = sc.expon(loc=0, scale=1.0 / llambda)
    plt.plot(x, pd.pdf(x), linewidth=2.0, label=rf'$\lambda =${llambda}')

plt.grid(True)
plt.legend()
plt.ylabel('p(x)')
plt.xlabel('x')
plt.title('Exponential Distribution')
# plt.savefig('2.Exponential Distribution.jpeg')
plt.show()
```


Originally written in Korean on my Naver blog (2017-11). Translated to English for gdpark.blog.
