Derivation of the Gamma Distribution

Deriving the Gamma distribution from scratch by connecting it to the Poisson process: it turns out to be the waiting time for the α-th event instead of the first!

Alright, time to move on to the Gamma distribution!

Funny thing — the Γ-distribution is actually a cousin of the exponential distribution!!!!

Why? Well, the exponential distribution was the waiting time until the first event in a Poisson process with rate λ (on average λ events per unit time),

right?

Poisson process — quick refresher:

For events that happen randomly over time,

the counts of events in non-overlapping intervals are mutually independent, and

the probability that a single event occurs in a tiny time interval is proportional to the length of that interval, and

the probability that two or more events occur in a tiny time interval is negligible, and

the above conditions hold the same way over the entire time span,

then we call it a Poisson process.
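To make the refresher concrete, here is a rough sketch that imitates those conditions on a computer: time is chopped into tiny steps, and in each step one event happens with probability proportional to the step length. The function name `simulate_counts`, the step count, and the values λ = 0.5 and t = 10 are my own illustrative choices, not from the original post.

```python
import random

def simulate_counts(lam, t, n_steps=1000, n_runs=2000, seed=0):
    """Approximate a Poisson process on [0, t] with many tiny independent steps."""
    rng = random.Random(seed)
    dt = t / n_steps
    p = lam * dt  # chance of one event in a tiny step, proportional to its length
    counts = []
    for _ in range(n_runs):
        # each tiny step is independent, and holds at most one event
        counts.append(sum(1 for _ in range(n_steps) if rng.random() < p))
    return counts

counts = simulate_counts(lam=0.5, t=10.0)
mean = sum(counts) / len(counts)
var = sum((c - mean) ** 2 for c in counts) / len(counts)
print("mean count:", mean, "variance:", var)
```

If the setup behaves like a Poisson process, both the mean and the variance of the counts should land near λt = 5.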

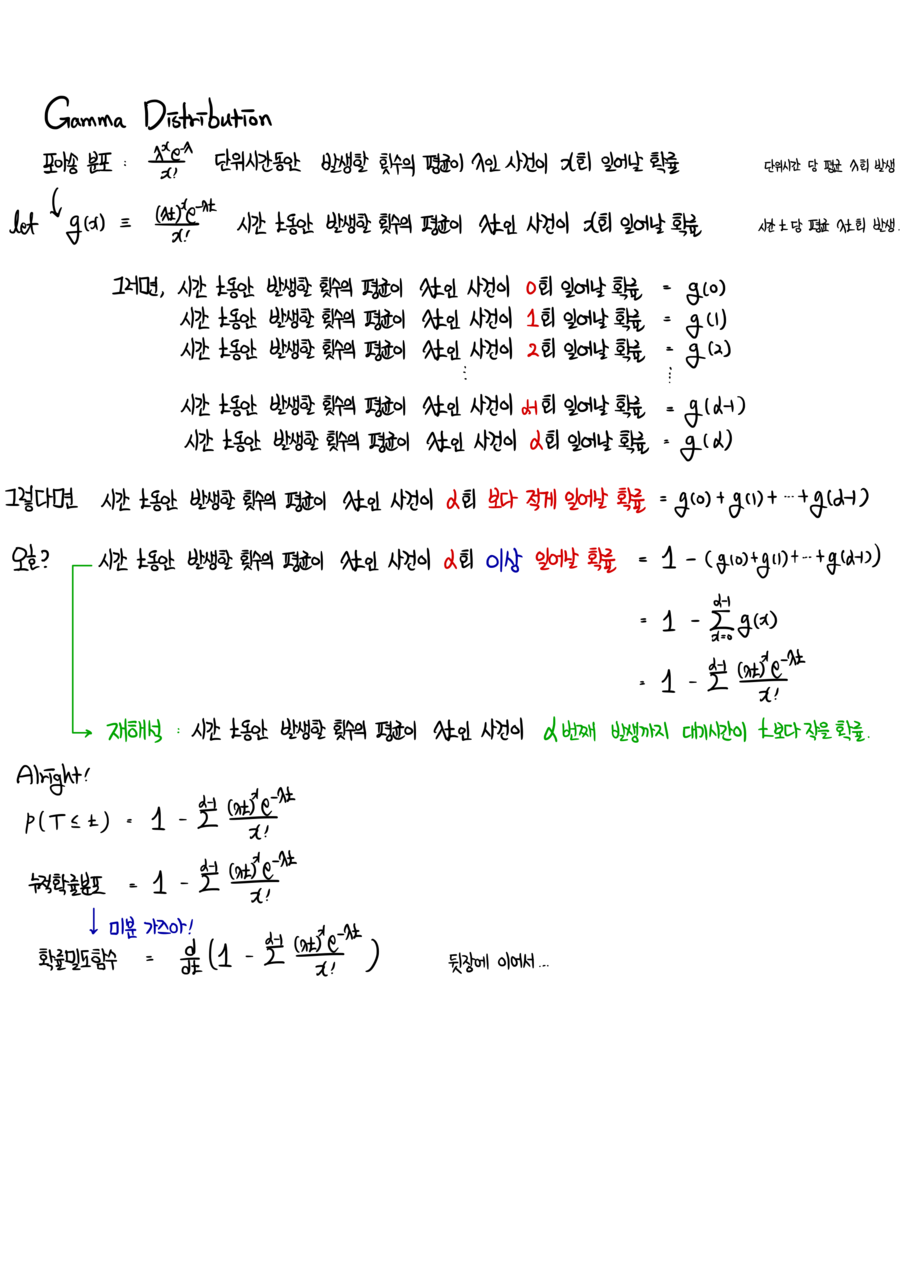

The Gamma distribution

is the distribution of the waiting time until a Poisson event has occurred α times!!!!

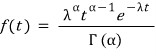

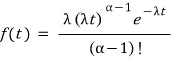

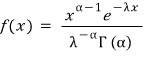

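Let me just drop the definition first: the random variable t follows a Gamma distribution with parameters (α, λ) when its pdf is

$$f(t) = \frac{\lambda^{\alpha}}{\Gamma(\alpha)}\, t^{\alpha-1} e^{-\lambda t}, \quad t > 0.$$

OK, now let’s derive it real quick!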

I’m going to follow the exact same playbook as when I derived the exponential distribution!!

Let’s think about the cumulative distribution.

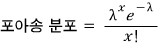

The Poisson distribution was

$$g(x) = \frac{\lambda^{x} e^{-\lambda}}{x!}, \quad x = 0, 1, 2, \dots$$

This is “the Poisson distribution that says, on average, λ events happen per unit time,” and

then “the Poisson distribution that says, on average, λt events happen over time t” is

$$g(x) = \frac{(\lambda t)^{x} e^{-\lambda t}}{x!},$$

and inside this distribution, the probability of 0 events, the probability of 1 event, ..., the probability of α-1 events, and the probability of α events

are g(0), g(1), ..., g(α-1), g(α).

Wait!

Let me pin something down here.

In the Gamma distribution, λ is meaningful

AND α is also meaningful!

Which Poisson process it came from matters (i.e. how many events happen on average per unit time, which is λ),

and how many events we’re waiting for (that’s α) ALSO matters!

I’ll unmask these two properly at the very end.

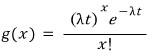

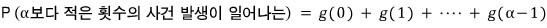

OK so — since I want to recast this as “the time until the α-th event happens,”

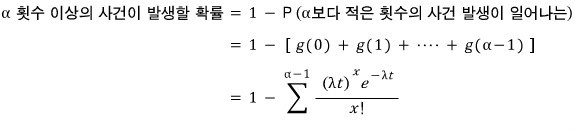

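(same trick as in the exponential derivation) I’ll sum up the probabilities g(0) through g(α-1), i.e. everything up to “α-1 events have happened.”

What this sum means is

$$\sum_{x=0}^{\alpha-1} \frac{(\lambda t)^{x} e^{-\lambda t}}{x!} = P(\text{fewer than } \alpha \text{ events have happened by time } t).$$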

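Now treat t, the time, as the variable!

“Fewer than α events have happened by time t” is the same thing as “at time t we are still waiting for the α-th event.” So if I write T for the waiting time until the α-th event, the sum is P(T > t).

Picture that probability at t=1, at t=2, at t=3, at t=4, and so on.

Make sense?

Flip it around and it becomes the cumulative probability for the time we wait until the α-th event, so let’s call that F(t):

$$F(t) = P(T \le t) = 1 - \sum_{x=0}^{\alpha-1} \frac{(\lambda t)^{x} e^{-\lambda t}}{x!}.$$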

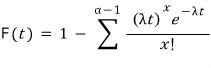

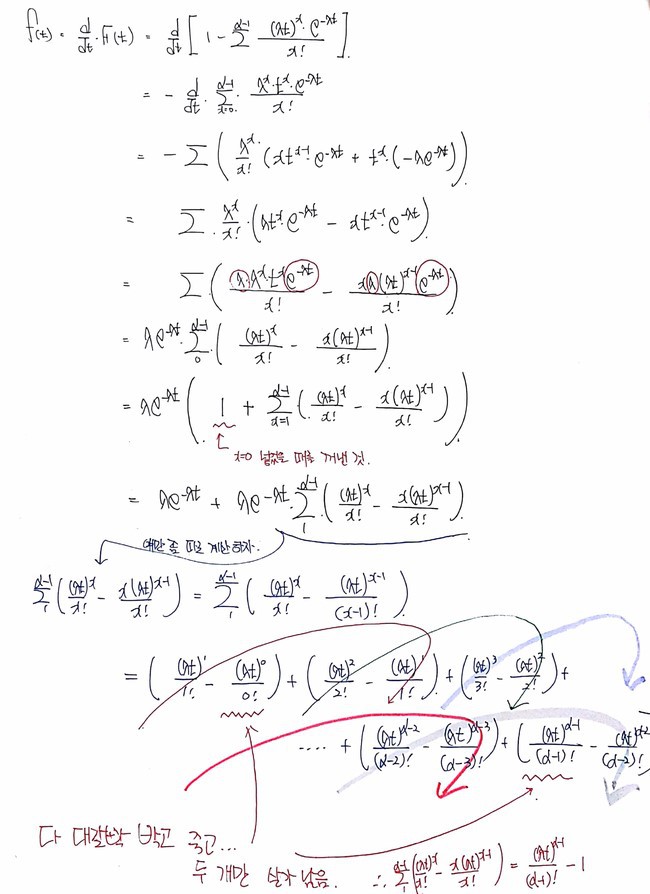

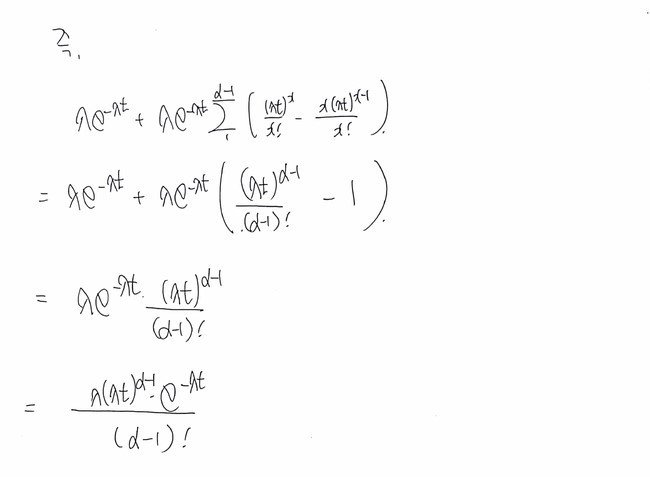

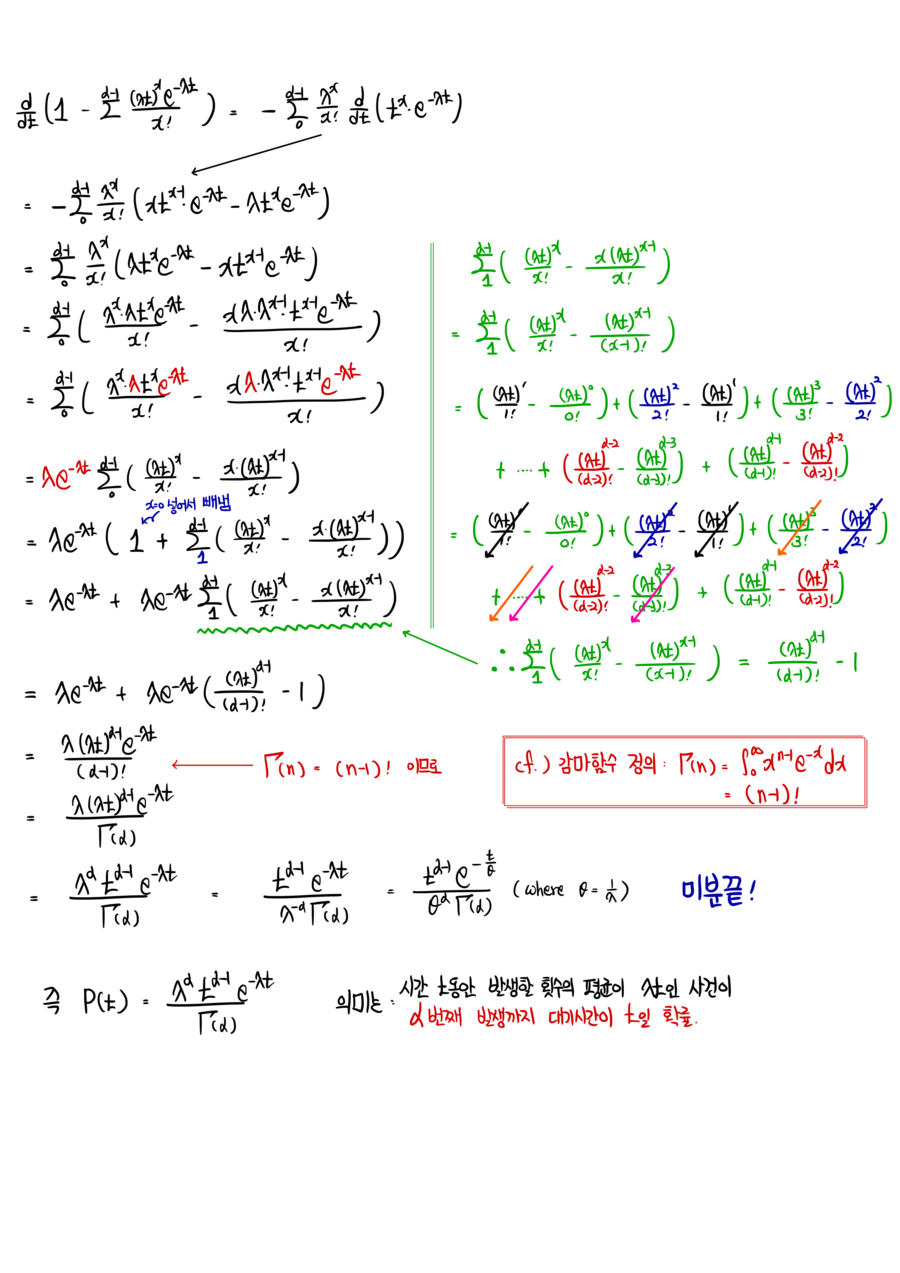
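Here is a small sketch of that cumulative probability in code, assuming α is a positive integer and that F(t) is one minus the probability of seeing fewer than α events by time t. The function name `gamma_cdf_from_poisson` and the numbers λ = 0.5, t = 3 are placeholders I picked for illustration.

```python
import math

def gamma_cdf_from_poisson(t, alpha, lam):
    """F(t) = 1 - P(fewer than alpha events in [0, t]), for integer alpha >= 1."""
    mu = lam * t  # average number of events over time t
    fewer = sum(mu ** x * math.exp(-mu) / math.factorial(x) for x in range(alpha))
    return 1.0 - fewer

lam, t = 0.5, 3.0
# With alpha = 1 this should match the exponential CDF, 1 - exp(-lam * t).
print(gamma_cdf_from_poisson(t, 1, lam), 1 - math.exp(-lam * t))
# F(t) at t = 1, 2, 3, 4 while waiting for the 3rd event
print([gamma_cdf_from_poisson(s, 3, lam) for s in (1.0, 2.0, 3.0, 4.0)])
```

The second line of output should climb as t grows, since the longer we wait, the more likely the α-th event has already happened.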

Differentiate this and bam, it’s the pdf!

Now for the differentiation, bye-bye formula editor~!

I’m switching to photos... heh heh, sorry.

The formula editor is just too... (T_T).

That’s where I got to:

$$f(t) = \frac{dF}{dt} = \frac{\lambda^{\alpha} t^{\alpha-1} e^{-\lambda t}}{(\alpha-1)!}, \quad t > 0.$$
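In case the photos are hard to read, the differentiation can be typed out roughly like this. It is my own write-up of the step, assuming α is a positive integer and that F(t) is one minus the Poisson sum up to α-1 events, so the intermediate lines may not match the photos exactly.

```latex
\begin{align*}
F(t) &= 1 - \sum_{x=0}^{\alpha-1} \frac{(\lambda t)^{x} e^{-\lambda t}}{x!} \\
f(t) = \frac{dF}{dt}
  &= -\sum_{x=0}^{\alpha-1}
     \left[ \frac{x\,\lambda\,(\lambda t)^{x-1} e^{-\lambda t}}{x!}
          - \frac{\lambda\,(\lambda t)^{x} e^{-\lambda t}}{x!} \right] \\
  &= \lambda e^{-\lambda t}
     \left[ \sum_{x=0}^{\alpha-1} \frac{(\lambda t)^{x}}{x!}
          - \sum_{x=1}^{\alpha-1} \frac{(\lambda t)^{x-1}}{(x-1)!} \right] \\
  &= \lambda e^{-\lambda t}\, \frac{(\lambda t)^{\alpha-1}}{(\alpha-1)!} \\
  &= \frac{\lambda^{\alpha}\, t^{\alpha-1} e^{-\lambda t}}{(\alpha-1)!}
\end{align*}
```

The two sums inside the brackets cancel term by term except for the last term of the first sum, which is why only the (α-1)-th term survives.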

If we leave that factorial in the denominator like that, the expression is only defined when (α-1) is 0 or a positive integer,

so let’s bring in the Gamma function and write it more generally!!!

(I derived it over here → http://gdpresent.blog.me/220581881465 !!!!)

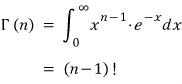

I’ll just throw out the definition of the Gamma function here!

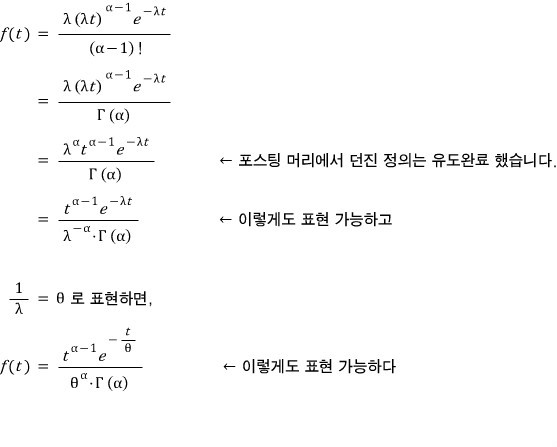

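There it is. For a positive integer α it gives Γ(α) = (α-1)!, so the formula above can be rewritten using the Gamma function, and it now makes sense for any α > 0:

$$f(t) = \frac{\lambda^{\alpha} t^{\alpha-1} e^{-\lambda t}}{\Gamma(\alpha)}, \quad t > 0.$$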
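A quick sketch of what that swap buys us, using only Python's standard library. The helper name `gamma_pdf` and the sample values are my own; the formula inside is the Gamma-function form of the pdf with parameters (α, λ).

```python
import math

def gamma_pdf(t, alpha, lam):
    """f(t) = lam^alpha * t^(alpha-1) * exp(-lam*t) / Gamma(alpha), for t > 0."""
    return lam ** alpha * t ** (alpha - 1) * math.exp(-lam * t) / math.gamma(alpha)

# For a positive integer alpha, Gamma(alpha) agrees with (alpha - 1)!
print(math.gamma(5), math.factorial(4))

# The factorial form only works for integer alpha; the Gamma form also accepts 2.5
print(gamma_pdf(2.0, 3, 0.5))
print(gamma_pdf(2.0, 2.5, 0.5))
```

`math.gamma` is the standard-library Gamma function, so nothing beyond `math` is needed for this check.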

Let me say it one more time! What does f(t) mean?

It’s the probability density for “the time until the α-th event is t”! (Strictly speaking, f(t)·dt is the probability that the α-th event lands in the tiny interval between t and t+dt.)

cf.) Of course, if we plug α=1 into f(t), it becomes the density for “the time until the first event is t,”

or, flipping that around, “the first event happens at time t,”

i.e. it collapses right back into the exponential distribution... (sob)!

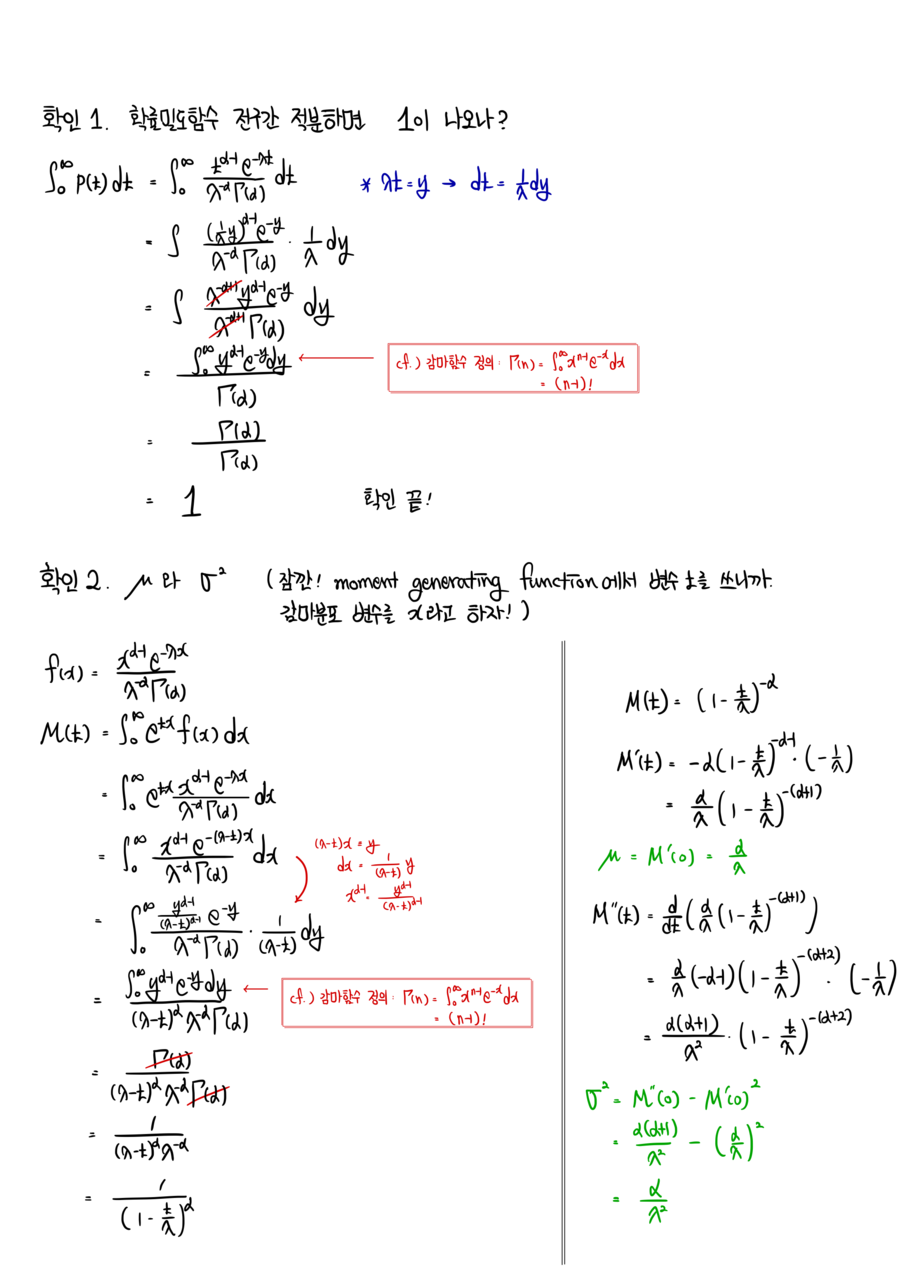
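Written out, the α = 1 substitution is a one-liner. This assumes the pdf in its Gamma-function form with parameters (α, λ), and uses Γ(1) = 0! = 1.

```latex
\[
f(t)\Big|_{\alpha=1}
  = \frac{\lambda^{1}\, t^{\,1-1}\, e^{-\lambda t}}{\Gamma(1)}
  = \lambda e^{-\lambda t}, \qquad t > 0,
\]
```

which is the exponential pdf with parameter λ.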

OK, since this is the home stretch, let me finally unmask α and λ.

These are the two ‘parameters’ of the Gamma distribution.

Parameters~?????

What on earth ARE those~?!?

Sorry sorry.

How do I put parameters into words???

If I say “the characteristics of the population?” — that doesn’t really land, right?

Take the Gaussian distribution we all know and love. It also has 2 parameters,

namely the mean μ and the variance σ² (the values of μ and σ in the Gaussian function are what set the shape and the personality of that particular Gaussian, right?)

Same deal here: what fixes the shape and personality of a Gamma distribution is its parameters, which in this case are λ and α!

So what I’m trying to say is:

when something follows a Gamma distribution like this,

it means “the random variable t follows a Gamma distribution with parameters (α, λ)” — that’s how we say it~~~

Alright, moving on~ (as for shape parameter vs scale parameter: α is the shape and 1/λ is the scale, so λ itself is a rate. I’ll skip the details, go look those up yourself, haha)

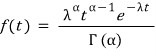

First let’s check that the integral over the whole domain of the Gamma distribution is 1.

The integral of any probability distribution over its full domain has to be 1, right?!~

Because all the probabilities have to sum to 1! let’s go let’s go let’s go

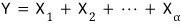

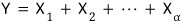

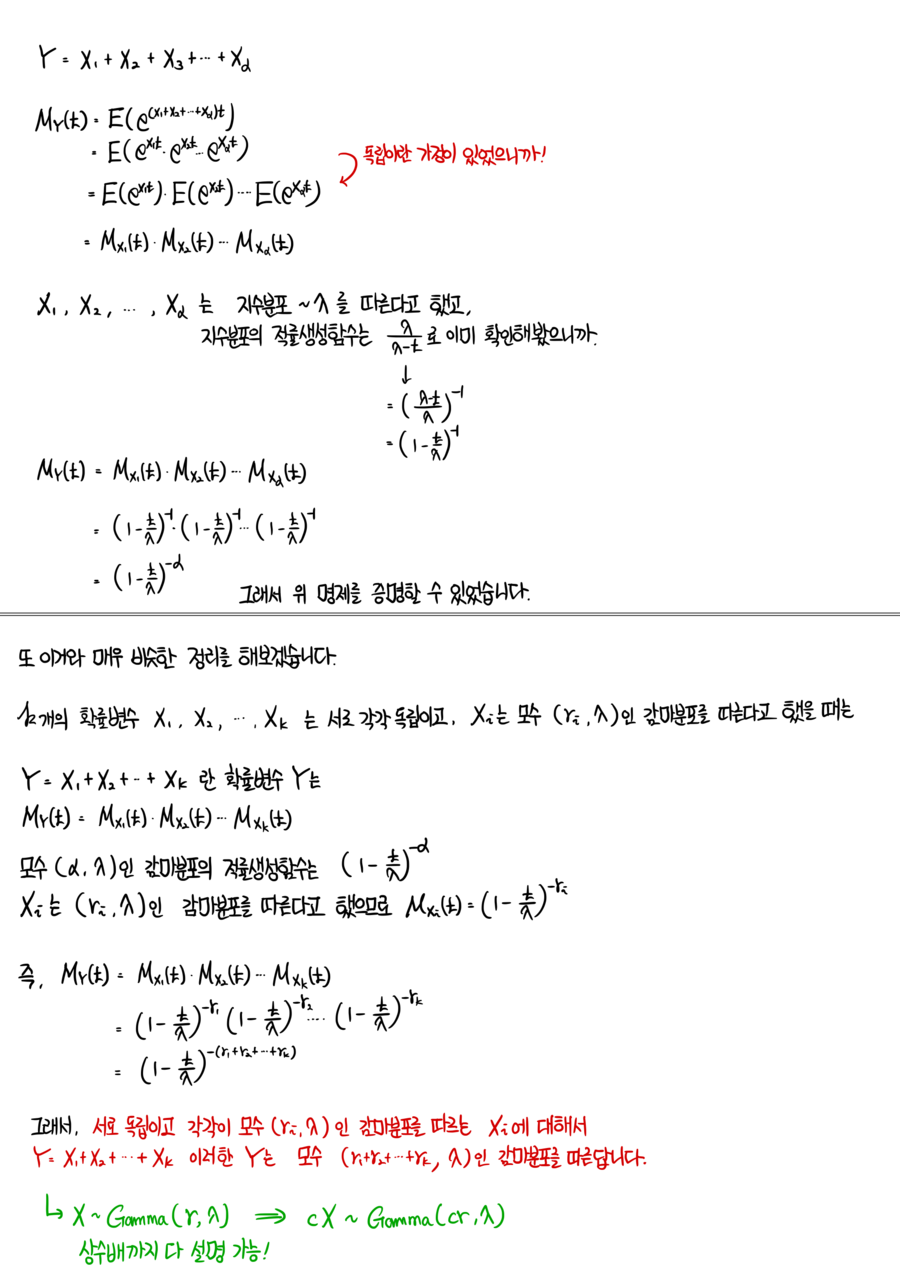

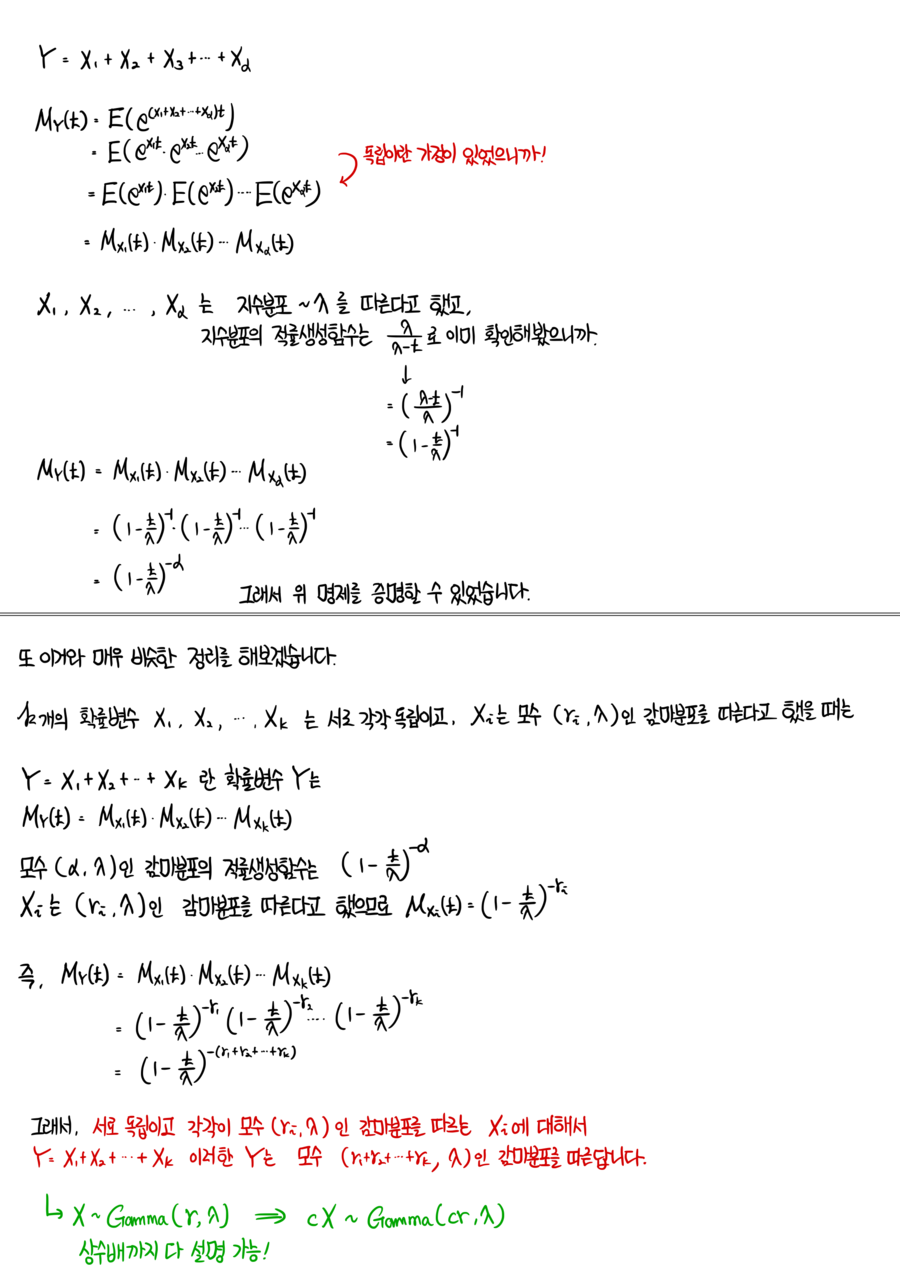
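If you’d rather let the computer double-check, here is a rough numerical version of the same claim. The midpoint-rule helper `integrate_midpoint`, the cutoff at t = 100, and the (α, λ) pairs are assumptions of mine; the integral really runs to infinity, but the tail beyond the cutoff is negligible for these parameters.

```python
import math

def gamma_pdf(t, alpha, lam):
    """Gamma pdf with shape alpha and rate lam, for t > 0."""
    return lam ** alpha * t ** (alpha - 1) * math.exp(-lam * t) / math.gamma(alpha)

def integrate_midpoint(f, a, b, n=100_000):
    """Plain midpoint rule on [a, b] with n equal slices."""
    h = (b - a) / n
    return h * sum(f(a + (i + 0.5) * h) for i in range(n))

totals = []
for alpha, lam in [(1, 0.5), (3, 0.5), (2.5, 1.0), (9, 2.0)]:
    total = integrate_midpoint(lambda t: gamma_pdf(t, alpha, lam), 0.0, 100.0)
    totals.append(total)
    print(alpha, lam, total)
```

Each printed total should sit very close to 1, whichever (α, λ) pair is used.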

OK, let me wrap up by talking about ‘additivity’!

We said that if a random variable x has the pdf

$$f(x) = \frac{\lambda^{\alpha} x^{\alpha-1} e^{-\lambda x}}{\Gamma(\alpha)}, \quad x > 0,$$

then “the random variable x follows a Gamma distribution with parameters (α, λ),” right?

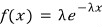

And we showed that α=1 collapses it back to the exponential distribution.

So now, suppose we have α random variables X_1, X_2, ..., X_α (so α is a positive integer here),

each one mutually independent and following an exponential distribution with parameter λ!

(You can already kinda feel where this is going, right? If you can feel it, that feeling IS the Gamma distribution! Sorry!)

You haven’t forgotten, have you?

The exponential distribution with parameter λ is

$$f(x) = \lambda e^{-\lambda x}, \quad x > 0.$$

Eh well, everyone’s already caught on...

Let me just spoil the conclusion up front:

The random variable Y = X_1 + X_2 + ... + X_α follows a Gamma distribution with parameters (α, λ). That’s the additivity story.

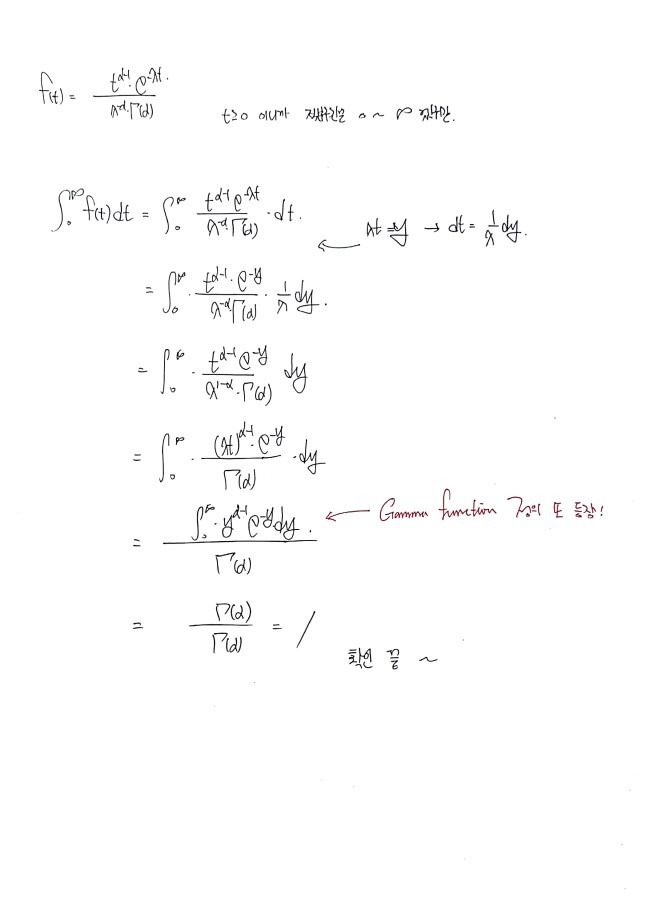

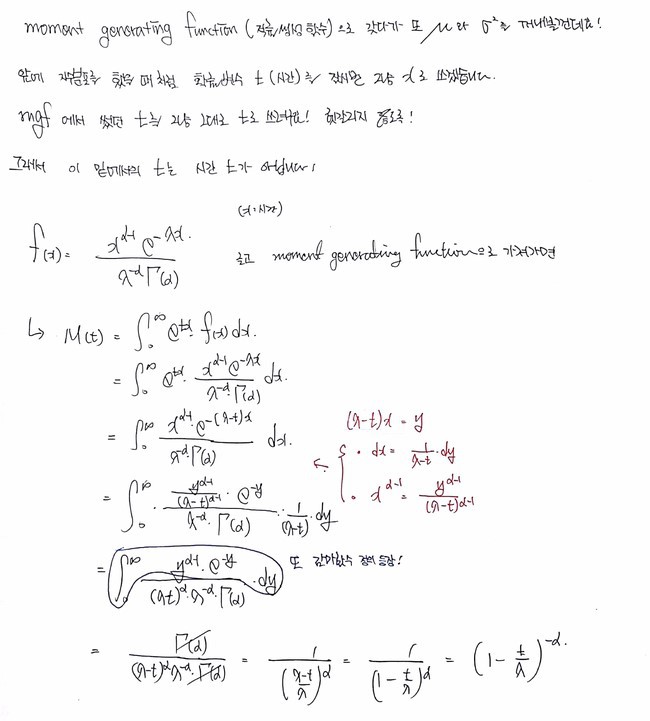
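Before the proof, a quick simulation can make the claim feel less like magic. This is only a sketch: the sample size, the seed, α = 3, and λ = 0.5 are my own picks, and the comparison uses the integer-α Gamma CDF built from the Poisson sum.

```python
import math
import random

def simulate_sums(alpha, lam, n=50_000, seed=0):
    """Draw n samples of Y = X_1 + ... + X_alpha with X_i ~ Exponential(rate lam)."""
    rng = random.Random(seed)
    return [sum(rng.expovariate(lam) for _ in range(alpha)) for _ in range(n)]

def gamma_cdf(t, alpha, lam):
    """Gamma(alpha, lam) CDF for integer alpha, via the Poisson sum."""
    mu = lam * t
    return 1.0 - sum(mu ** x * math.exp(-mu) / math.factorial(x) for x in range(alpha))

alpha, lam = 3, 0.5
ys = simulate_sums(alpha, lam)
for t in (2.0, 5.0, 10.0):
    empirical = sum(y <= t for y in ys) / len(ys)  # fraction of sums at or below t
    print(t, empirical, gamma_cdf(t, alpha, lam))
```

The empirical fractions and the Gamma CDF values should agree to about two decimal places, which is what additivity predicts.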

I’ll prove it!

Calling it a “proof” is being generous — it’s super short — but I figured it’d be good to know the principle behind it!!!

Pull out the moment generating function of Y and follow it through,

and from here onwards the formula editor is bye-bye~

Switching to the iPad.

Done~~~~
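For anyone who can’t see the handwriting, the moment generating function argument can be typed out roughly as follows. This is my own reconstruction under the stated setup (X_1, ..., X_α independent, each exponential with parameter λ, and Y their sum). I use s for the MGF variable so it doesn’t clash with the time t; that symbol is my choice.

```latex
\begin{align*}
M_{X_i}(s) &= E\!\left[e^{sX_i}\right]
  = \int_0^\infty e^{sx}\, \lambda e^{-\lambda x}\, dx
  = \frac{\lambda}{\lambda - s}, \qquad s < \lambda \\
M_Y(s) &= E\!\left[e^{s(X_1 + \cdots + X_\alpha)}\right]
  = \prod_{i=1}^{\alpha} M_{X_i}(s)
  = \left( \frac{\lambda}{\lambda - s} \right)^{\alpha} \\
M_{\mathrm{Gamma}(\alpha,\lambda)}(s)
  &= \int_0^\infty e^{st}\,
     \frac{\lambda^{\alpha} t^{\alpha-1} e^{-\lambda t}}{\Gamma(\alpha)}\, dt
  = \frac{\lambda^{\alpha}}{(\lambda - s)^{\alpha}}
     \int_0^\infty
     \frac{(\lambda - s)^{\alpha} t^{\alpha-1} e^{-(\lambda - s)t}}{\Gamma(\alpha)}\, dt
  = \left( \frac{\lambda}{\lambda - s} \right)^{\alpha}
\end{align*}
```

The product step uses independence, and the last integral equals 1 because its integrand is itself a Gamma pdf with parameters (α, λ - s). The two moment generating functions match, and an MGF that exists around s = 0 pins down the distribution, so Y follows a Gamma distribution with parameters (α, λ).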

↓ Cleaned up just a little bit nicer on the iPad! heh

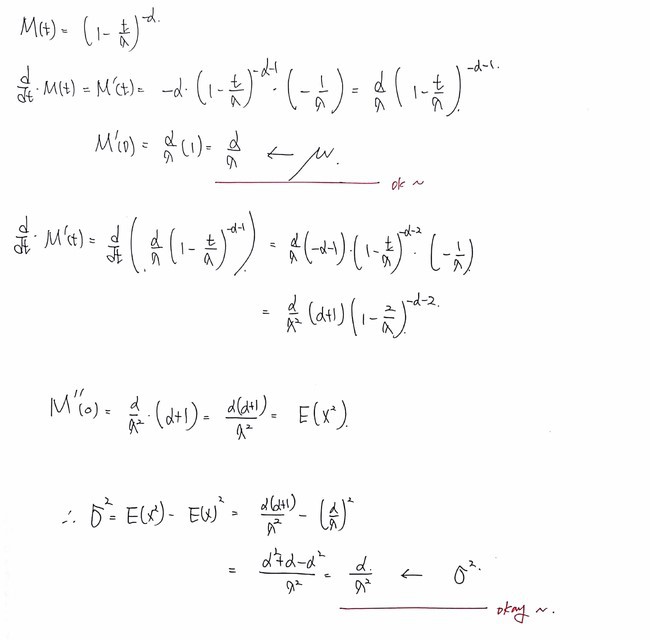

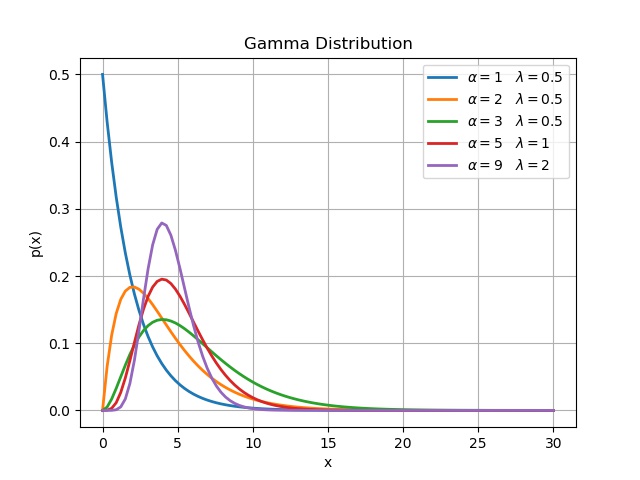

    import numpy as np
    import matplotlib.pyplot as plt
    import scipy.stats as sc

    # (alpha, lambda) pairs to compare: alpha is the shape, lambda is the rate
    params = [(1.0, 0.5), (2.0, 0.5), (3.0, 0.5), (5.0, 1.0), (9.0, 2.0)]

    x = np.linspace(0, 30, 100)

    for alpha, llambda in params:
        # scipy parameterizes the Gamma distribution by scale = 1 / rate
        scale = 1.0 / llambda
        y = sc.gamma.pdf(x, a=alpha, scale=scale)
        plt.plot(x, y, linewidth=2.0,
                 label=rf'$\alpha =${alpha:g} $\lambda =${llambda:g}')

    plt.grid(True)
    plt.legend()
    plt.ylabel('p(x)')
    plt.xlabel('x')
    plt.title('Gamma Distribution')
    plt.savefig('3.Gamma Distribution.jpeg')

Originally written in Korean on my Naver blog (2017-11). Translated to English for gdpark.blog.
