Temperature

Diving into the statistical definition of temperature — microstates, the ergodic hypothesis, and the genuinely staggering conclusion that falls out of it all!

Statistical definition of temperature

Finally, now the book is going to look at what this thing called ’temperature’ is!!!

Thinking about the definition of temperature,

the conclusion I ended up arriving at is downright staggering!!!! So let’s get started!

First, let me set things up.

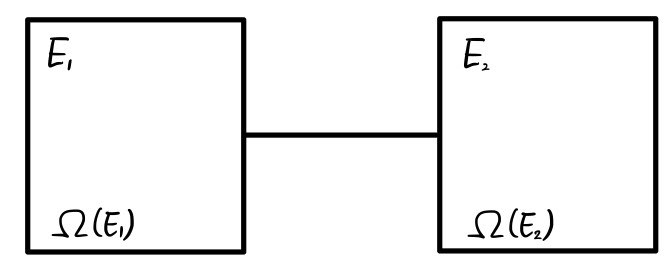

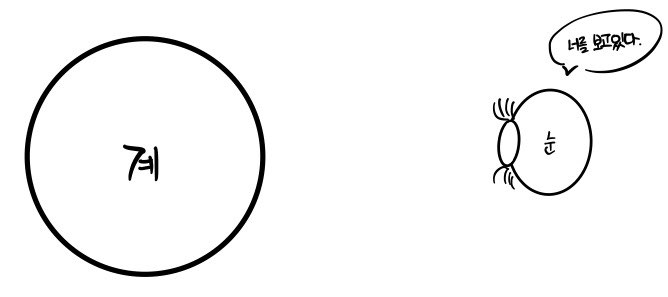

I’ll place two systems,

and those two systems can exchange energy with each other,

but I’ll say they don’t exchange energy with any other system.

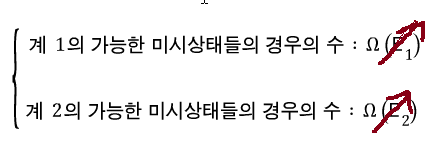

The left one (system 1) holds energy E1,

and what I wrote as Ω1(E1) means ’number of cases’.

What kind of number of cases, you ask? When system 1 has energy E1,

this is the number of microstates it can be in.

Alright, then the left system will be in one of those Ω1(E1)

microstates.

The right system will be the same.

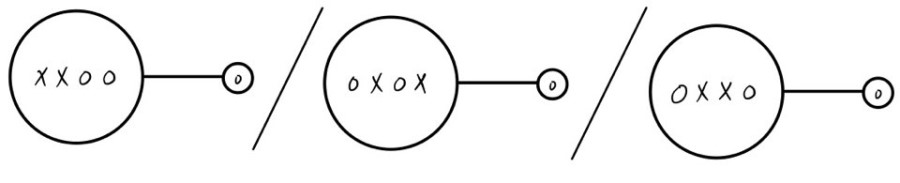

So what about the whole system?

With system 1 holding E1 and system 2 holding E2, the total energy is E = E1 + E2,

and the whole system will be in one of some number of

microstates.

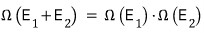

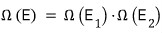

But that number is

equal to the product of the two numbers of cases!!!!

So I’ll say that the whole system is in one of the Ω1(E1)Ω2(E2)

states.
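To make the "product of the two numbers of cases" concrete, here is a minimal sketch. It assumes a toy model that is not part of the setup above: each system is a handful of oscillators sharing whole energy quanta (an Einstein solid), and the sizes `N1`, `N2` and energies `E1`, `E2` are made-up numbers chosen only for illustration.

```python
from itertools import product
from math import comb

def microstates(n_osc, quanta):
    """List every way to share `quanta` energy units among `n_osc` oscillators."""
    return [s for s in product(range(quanta + 1), repeat=n_osc) if sum(s) == quanta]

def omega(n_osc, quanta):
    """Closed-form count of the same microstates (stars and bars)."""
    return comb(quanta + n_osc - 1, quanta)

# Hypothetical toy sizes and energies (in units of one quantum).
N1, E1 = 3, 2
N2, E2 = 4, 5

states1 = microstates(N1, E1)
states2 = microstates(N2, E2)

# Every microstate of system 1 can pair with every microstate of system 2,
# so the joint count is the product of the two separate counts.
joint_states = [(a, b) for a in states1 for b in states2]

print(len(states1), len(states2), len(joint_states))
```

The brute-force lists are only there to show the pairing explicitly. For anything bigger than a toy, the closed-form `omega` is the practical way to count.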

And now we make a few assumptions

(these assumptions have the nuance of “it seems plausible, so let’s assume this is the case!”).

- All possible microstates are equally likely to occur.

(I guess it’s saying we view each event as a single elementary event)

- The motion of ‘certain things’ internal to the system makes the system’s microstate change without stopping.

That is, the internal microstate keeps~ changing.

- (transcribed) Given enough time, the system will explore all possible microstates and will spend the same amount of time in each. (Ergodic hypothesis)

Adding a bit more explanation to the ergodic hypothesis:

Over there the particle in the right container will keep~ moving,

and it is connected to the left system. (Meaning, they can exchange energy.)

Alright, when the particle moves, can we really say that particle will at some point cross over to the left system?

No. No!!!!

A situation like this is called ’not ergodic’.
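For contrast, here is a rough sketch of what an ergodic system looks like. The dynamics are an assumption of mine, not something from the book: three oscillators share two energy quanta, and at each step a quantum hops from one randomly chosen oscillator to another. The function name `time_fractions` and all the numbers are hypothetical. If the hypothesis holds for this toy, the fraction of time spent in each of the 6 microstates should come out close to 1/6.

```python
import random
from collections import Counter

def time_fractions(n_osc=3, quanta=2, steps=60_000, seed=0):
    """Let quanta hop at random and record the fraction of time spent in each microstate."""
    rng = random.Random(seed)
    state = [0] * n_osc
    state[0] = quanta  # start with all the energy in the first oscillator
    visits = Counter()
    for _ in range(steps):
        i, j = rng.sample(range(n_osc), 2)  # two distinct oscillators, in random order
        if state[i] > 0:                    # move one quantum from i to j when possible
            state[i] -= 1
            state[j] += 1
        visits[tuple(state)] += 1
    return {s: count / steps for s, count in visits.items()}

fractions = time_fractions()
for microstate, fraction in sorted(fractions.items()):
    print(microstate, round(fraction, 3))
```

If one oscillator were walled off so that quanta could never reach it, some microstates would never be visited, which is the "not ergodic" situation described above.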

Anyway, the conclusion we can draw from those 3 assumptions is

“the system appears to settle into the one macroscopic arrangement that maximizes the number of microstates.”

The book explains it as above, but I think of it like this.

First of all, we can’t distinguish between individual microstates.

However, we can distinguish between macrostates.

That is, when we pop open the lid we can say “aha, it changes like this — this macrostate, that macrostate, and so on!”,

but we can’t distinguish changes in microstates at all, so tracking them has no meaning~

That is, even though the system may be changing, we have to say that to our eyes it is highly likely to be in the macrostate made up of the most microstates.

When the system is small, that’s the best you can say,

but when the system is large, they say you can say “the likelihood is insanely, overwhelmingly, no seriously fucking high”.
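A quick way to get a feel for "overwhelmingly" is to count. The sketch below uses coins instead of energy, which is my own stand-in and not the book's example: a microstate is one particular heads/tails sequence, a macrostate is the number of heads, and every sequence is taken to be equally likely. The function name `prob_near_half` and the 5% window are arbitrary choices.

```python
from math import comb

def prob_near_half(n_coins, window_percent=5):
    """Probability that the number of heads lies within +/- window_percent of n_coins
    around n_coins / 2, with all 2**n_coins sequences equally likely."""
    half = n_coins // 2
    width = n_coins * window_percent // 100
    favourable = sum(comb(n_coins, k) for k in range(half - width, half + width + 1))
    return favourable / 2**n_coins

for n in (10, 100, 10_000):
    print(n, prob_near_half(n))
```

For a small system the most likely macrostate is only somewhat favoured, while for the large one essentially every microstate sits in a narrow band around it.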

In our picture, when energy is exchanged, E is split between E1 and E2,

and in a large total system

we can say “it is split so that the number of microstates belonging to the resulting macrostate is maximized”.

The reason I forcibly drew arrows is to emphasize that E1 and E2 are not constants but ’energy variables’,

and we just need to determine the value of that variable that makes the number of microstates the maximum.

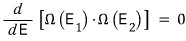

Let’s determine it with calculus!

This function of E1, the product Ω1(E1)Ω2(E2) with E2 = E - E1:

we just need to find the energy E1 that makes the value of this very function the largest!!!

I guess it’s the same logic as in high school calculus, where we differentiated f(x) with respect to x and set the result to zero to find the maximum of f(x) (we’re looking for the extremum).

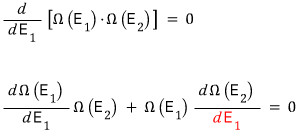

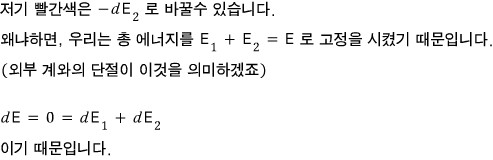

So, let’s differentiate with respect to E1 and set the result to zero. That’s fine, right?

Energy being split so that the red condition holds is exactly the condition for the macrostate to contain the maximum number of microstates!
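The maximization can also be checked by brute force instead of calculus. This is only a sketch under assumptions of my own: both systems are modelled as Einstein solids with made-up sizes `N1` and `N2`, and energy comes in whole quanta. It scans every split of the total energy, picks the one with the most joint microstates, and then compares the slope of ln Ω on each side, which is the standard form of the condition that the derivative gives.

```python
from math import comb, log

def ln_omega(n_osc, quanta):
    """ln of the number of ways to share `quanta` among `n_osc` oscillators."""
    return log(comb(quanta + n_osc - 1, quanta))

# Hypothetical sizes: two Einstein solids sharing E_TOTAL quanta.
N1, N2 = 300, 200
E_TOTAL = 100

def ln_joint(e1):
    """ln of Omega1(E1) * Omega2(E_TOTAL - E1)."""
    return ln_omega(N1, e1) + ln_omega(N2, E_TOTAL - e1)

# Scan every possible split and keep the one with the most joint microstates.
best_e1 = max(range(E_TOTAL + 1), key=ln_joint)

def slope(n_osc, quanta):
    """Central-difference estimate of d ln(Omega) / dE."""
    return (ln_omega(n_osc, quanta + 1) - ln_omega(n_osc, quanta - 1)) / 2

slope1 = slope(N1, best_e1)
slope2 = slope(N2, E_TOTAL - best_e1)
print(best_e1, slope1, slope2)
```

At the best split the two slopes agree closely, and the small leftover difference comes from energy being discrete in this toy model.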

Haa…. finally the conclusion

At that moment the two systems would share a distinct!! temperature!!!! (we said earlier that temperature is one of the quantities that describes a ‘macrostate’)

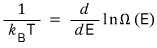

That temperature we measure, the temperature we find!!! when we open the lid, is defined through the above derivative with respect to energy.

That is, each side of the red equation over there is defined to be 1/(k_B T) for its own system, so the condition simply says T1 = T2.

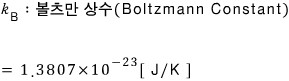

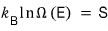

They say this definition also turns out to agree with experiment.

Actually,

defining S = k_B ln Ω as ’entropy’ is Boltzmann’s achievement.

But for now let’s just treat this as something to hear once and move on.
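One thing worth seeing once about Boltzmann's entropy, usually written S = k_B ln Ω, is that taking the logarithm turns the product of microstate counts into a sum. The microstate counts below are made-up small numbers, and the helper name `entropy` is my own.

```python
from math import isclose, log

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)

def entropy(n_microstates):
    """Boltzmann entropy S = k_B ln(Omega) for a given microstate count."""
    return K_B * log(n_microstates)

# Hypothetical microstate counts for two systems.
omega1, omega2 = 6, 56

s_total = entropy(omega1 * omega2)           # entropy of the combined system
s_sum = entropy(omega1) + entropy(omega2)    # sum of the separate entropies
print(s_total, s_sum)
```

So counts multiply while entropies add, which is part of why the logarithm keeps showing up.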

Ensemble

First I think I need to explain the concept of an ensemble.

What we want right now is

to learn something about a system sitting in front of us.

For example, say there are 50 million particles inside, and we want to know the average velocity of the particles in that system.

So, say we made one measurement and got a result

“Can you trust it?”

You’d feel a bit uneasy, right?

So we try to make a second measurement,

but after the first measurement, the system has been shaken once, so all variables are no longer at their initial values….

so the result of that experiment becomes even more and more and more and more untrustworthy. (Meaning, we cannot measure under the same conditions.)

What is the way to solve this conundrum?

We click copy on the initial system and prepare about 2.5 billion identical systems.

Then identical systems have been made, right?!!?!? And we repeat the experiment 2.5 billion times, once on each copy, to collect 2.5 billion data points of average velocity,

and then we take the average of these results as our answer.

The ensemble is a concept introduced out of this kind of wish.

It means imagining an infinite number of identical copies of the system.
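Here is a scaled-down sketch of that idea, with 200 copies of 500 particles instead of billions. Everything in it is an assumption for illustration: velocities are drawn from a unit Gaussian in one dimension, "speed" means |v|, and the function names are hypothetical.

```python
import random
from math import pi, sqrt

def mean_speed_of_one_copy(n_particles, rng):
    """One 'measurement': the average |v| over the particles of a single copy."""
    return sum(abs(rng.gauss(0.0, 1.0)) for _ in range(n_particles)) / n_particles

def ensemble_average(n_copies=200, n_particles=500, seed=1):
    """Measure every copy once, then average the results over the whole ensemble."""
    rng = random.Random(seed)
    results = [mean_speed_of_one_copy(n_particles, rng) for _ in range(n_copies)]
    return sum(results) / n_copies, results

average, results = ensemble_average()
expected = sqrt(2 / pi)  # exact mean of |v| for a unit Gaussian
print(min(results), max(results), average, expected)
```

Single copies scatter around the true value, while the ensemble average lands much closer to it, which is the whole point of preparing many copies.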

<Actually, it’s the same concept as the ‘statistical probability’ we do in high school probability/statistics class.

When we throw a die and say the probability of getting a 2 is 1/6!!!… it’s actually kind of funny.

You’d have to throw it about infinity times to reach the probability of 1/6…. right?!~>

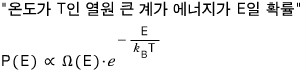

What we’re going to do now is examine “the probability that a system at a fixed temperature is in a particular microstate”.

As the means, we’ll use the Canonical ensemble.

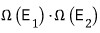

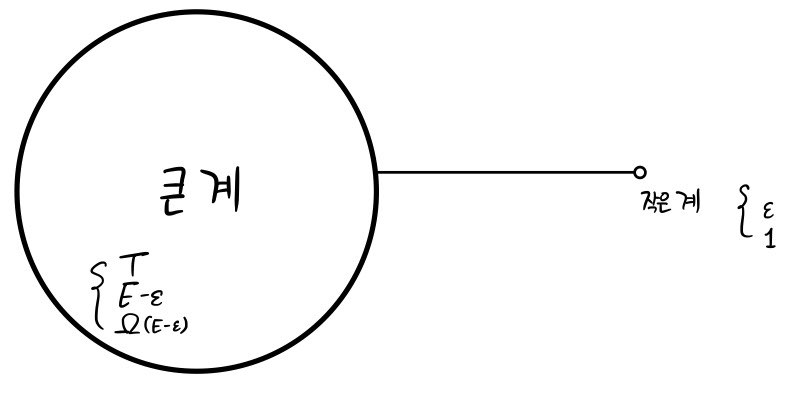

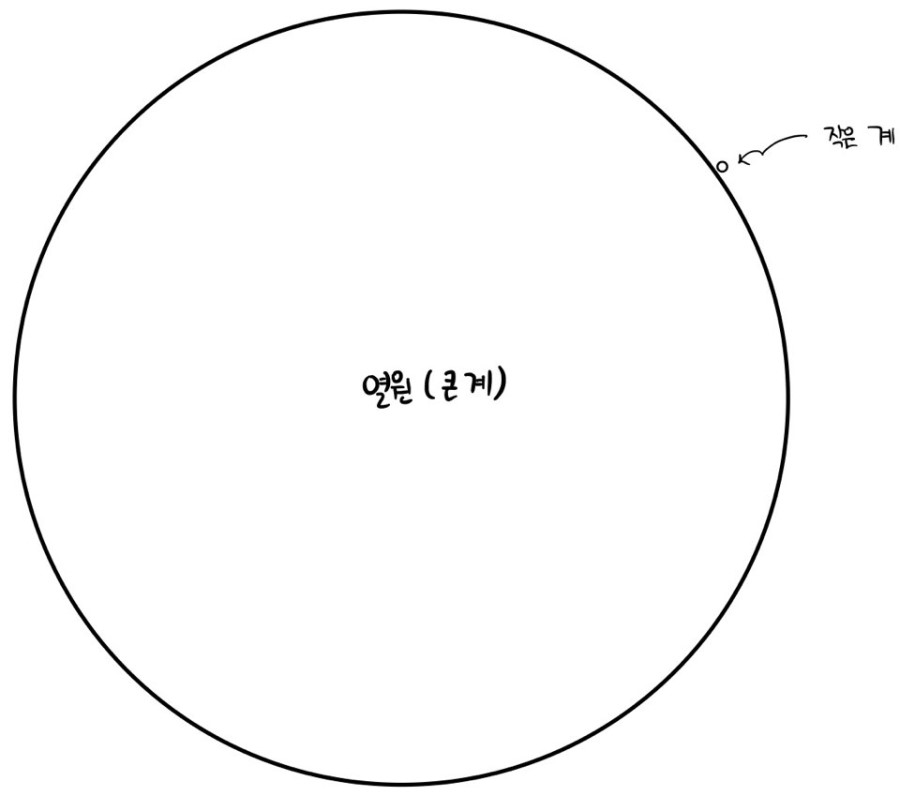

I’ll place a big system like this and a small system so they can exchange energy with each other.

Also, so that the total energy is fixed at E, I’ve set the big system’s energy to E-ε

and the small system’s energy to ε.

And, when the big system has energy E-ε, the number of possible microstates is Ω(E-ε),

and for the small system, I’ll assume the number of possible microstates is Ω(ε) = 1 (that’s how small it is).

Now, the more microstates the big system has available while the small system holds energy ε,

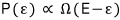

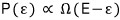

the higher the likelihood that the small system has energy ε,

so we can write it as a proportionality: P(ε) ∝ Ω(E-ε) × 1.

Using the definition of temperature for the right side of this proportionality, we’ll eventually talk about the Boltzmann distribution,

so let’s keep going.

The big system is really absurdly large,

and so

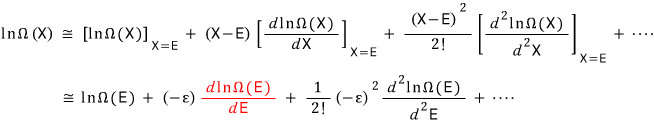

this is a number, and since it’s such a huge number, I’ll take the logarithm and analyze it.

Like this…. the log expression of Ω can be connected with temperature if you massage it just a bit more.

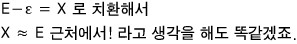

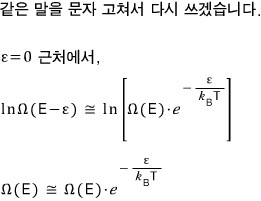

And I’ll Taylor-expand in ε around ε = 0, since ε is tiny compared to E. (They say “let’s view the small system as if it’s almost~ not there!”)

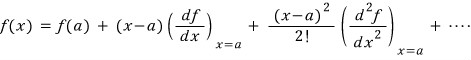

First let me write out the Taylor expansion.

For x near a, f(x) ≈ f(a) + f′(a)(x - a) + ...

Since we’re applying this directly to ln Ω(E-ε)

around ε=0,

let’s just think of it this way

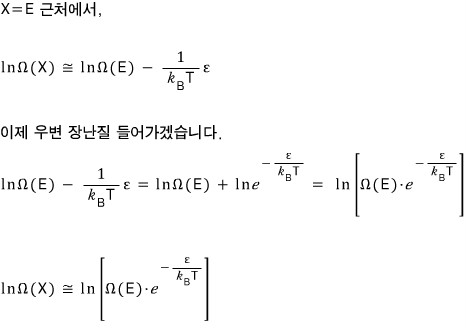

I’ll rewrite the red part using the definition of statistical temperature.

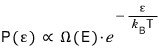
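To see how good the first-order expansion is, here is a numerical sanity check. It is a sketch under my own assumptions: the big system is modelled as an Einstein solid with made-up size `N_RES` and total energy `E_TOT`, and the slope of ln Ω (which plays the role of 1/(k_B T), in units of one quantum) is estimated with a central difference.

```python
from math import comb, log

N_RES = 1000  # oscillators in the big system (hypothetical)
E_TOT = 2000  # total energy in quanta (hypothetical)

def ln_omega(quanta):
    """ln of the number of microstates of the big system holding `quanta`."""
    return log(comb(quanta + N_RES - 1, quanta))

# Central-difference estimate of d ln(Omega)/dE at E_TOT.
beta = (ln_omega(E_TOT + 1) - ln_omega(E_TOT - 1)) / 2

errors = []
for eps in range(6):
    exact = ln_omega(E_TOT - eps)
    first_order = ln_omega(E_TOT) - beta * eps  # the two leading Taylor terms
    errors.append(abs(exact - first_order))
    print(eps, exact, first_order)

print(max(errors))
```

The gap between the exact value and the first-order estimate stays tiny as long as ε is much smaller than the total energy, which is the situation the expansion assumes.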

Going back to the earlier proportionality for the probability that the small system has energy ε,

if we rewrite the right side with our approximation, we get P(ε) ∝ Ω(E-ε) ≈ Ω(E) e^(-ε/k_B T).

The important thing is that P(ε) is proportional to that exponential term over there.

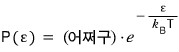

Writing it out like this,

what I want to say is that the probability P(ε) that the small system has energy ε has this kind of “probability distribution”!!!!!

So this result is called the Boltzmann distribution or the canonical distribution,

and the factor

e^(-ε/k_B T) is called the Boltzmann factor!!!!
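If solving problems feels too far away, a rough numerical check may help. The sketch assumes, as my own toy model, that the big system is an Einstein solid (sizes `N_RES` and `E_TOT` are invented) and that the small system has exactly one microstate per energy. Since P(ε) is proportional to the big system's microstate count, the ratio P(ε+1)/P(ε) can be computed exactly and compared with the Boltzmann factor for one quantum.

```python
from math import comb, exp, log

N_RES, E_TOT = 1000, 2000  # hypothetical reservoir size and total energy in quanta

def omega_res(quanta):
    """Number of microstates of the big system when it holds `quanta`."""
    return comb(quanta + N_RES - 1, quanta)

# Slope of ln(Omega) at E_TOT, standing in for 1/(k_B T) in units of one quantum.
beta = (log(omega_res(E_TOT + 1)) - log(omega_res(E_TOT - 1))) / 2

# P(eps) is proportional to omega_res(E_TOT - eps), so P(eps + 1) / P(eps) is a ratio of counts.
ratios = [omega_res(E_TOT - eps - 1) / omega_res(E_TOT - eps) for eps in range(10)]
boltzmann_ratio = exp(-beta)  # what the Boltzmann factor predicts for one extra quantum

for eps, ratio in enumerate(ratios):
    print(eps, ratio, boltzmann_ratio)
```

Each extra quantum in the small system costs roughly the same multiplicative factor in probability, which is exactly what an exponential distribution means.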

It also means that when systems come into contact, they find equilibrium at that temperature T!!!

Going toward the macrostate with the most microstates….

Still don’t get what this is saying, right!!!!! I too still don’t quite know what on earth this means.

If we solve some problems later, you’ll get a rough feel for it.

You’re probably in a mental-breakdown state right now, so I’ll throw in just one more concept!!!!lolololololololol

This is a probability now, a probability!

For it to properly have meaning as a probability, Normalization is necessary.

“The sum of all probabilities is 1” — the operation of making it this way is called normalization, right?!?!?!

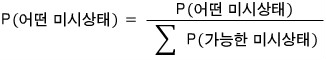

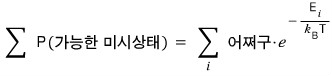

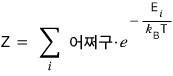

They say we normalize in this way here.

But now, let’s write the denominator using the Boltzmann factor we learned above

(we’d have to assume that the system follows the Boltzmann distribution, right!?):

Z = Σ_i e^(-E_i/k_B T), summed over all microstates i of the system.

This Z is called the partition function.
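As a minimal sketch of the normalization, take a made-up system with three energy levels. The level values, the choice of measuring energy in units of k_B T, and the function name `boltzmann_probabilities` are all my own assumptions.

```python
from math import exp, isclose

def boltzmann_probabilities(energies, kT):
    """Return the partition function Z and the normalized probability of each level."""
    weights = [exp(-e / kT) for e in energies]  # Boltzmann factor of each level
    z = sum(weights)                            # partition function
    return z, [w / z for w in weights]

# Hypothetical three-level system, energies in the same units as kT.
z, probs = boltzmann_probabilities([0.0, 1.0, 2.0], kT=1.0)
print(z, probs, sum(probs))
```

Dividing by Z is all the normalization there is: the Boltzmann factors fix the relative weights, and Z rescales them so that they sum to 1.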

I’ve had some thoughts of my own,

and I should record this thought.

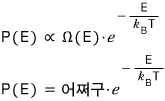

Let me think of it as there being a heat reservoir with temperature T and energy E.

I’ve drawn the picture above more extremely.

When we said we view the small system as having energy ε ~ near 0, the remark I sneakily threw in from the start was to view it as if the small system wasn’t even there, right?

So, thinking carefully again about the equation we brought down via Taylor expansion,

I think it’s probably saying this.

So just because the temperature is exactly!! T, the energy doesn’t need to be exactly!!! E, it’s just a probability.

I think this is what it’s saying.

This is just my own thought, and since I haven’t gotten advice from anywhere,

I’m being quite cautious — please shoot arrows at me!!

Until it’s proven that I’m wrong, I’ll

interpret it as the probability that a system at temperature T has energy E and continue the discussion!!

Then in the next post I’ll solve some problems from Chapter 4 and move on.

Originally written in Korean on my Naver blog (2015-12). Translated to English for gdpark.blog.